Of course! "Python odbchelper" is a common search query for anyone trying to connect Python applications to databases using the ODBC (Open Database Connectivity) standard.

Let's break this down into a comprehensive guide.

What is ODBC and Why Use It?

ODBC is a standard API for accessing database management systems (DBMS). Think of it as a universal translator that lets your Python code talk to many different databases (SQL Server, Oracle, MySQL, PostgreSQL, and others) through one connection interface instead of a separate client library for each. The SQL you send can still differ between databases, since ODBC standardizes the API rather than the SQL dialect.

Key Reasons to Use ODBC in Python:

- Database Agnostic: Write your database connection logic once, and it can potentially work with any database that has an ODBC driver.

- Enterprise Standard: ODBC is a well-established standard in many large corporations, especially for connecting to legacy systems or enterprise databases like Microsoft SQL Server and Oracle.

- Access to Advanced Features: ODBC drivers can expose database-specific features and functionalities that simpler libraries might not.

The Two Main Approaches in Python

There are two primary ways to use ODBC in Python. The modern, recommended approach is much simpler.

Approach 1: The Modern & Recommended Way (pyodbc)

pyodbc is the most popular, robust, and feature-rich ODBC library for Python. It's actively maintained and works on Windows, macOS, and Linux.

Installation:

First, you need to install the pyodbc library.

pip install pyodbc

Prerequisites: You also need the ODBC Driver for your specific database.

- SQL Server: Microsoft ODBC Driver for SQL Server

- PostgreSQL: PostgreSQL ODBC Driver

- MySQL: MySQL Connector/ODBC

- Oracle: Oracle ODBC Driver

- SQLite: A third-party SQLite ODBC driver (it is not bundled with Windows).

On macOS/Linux you also need an ODBC driver manager such as unixODBC, whichever database you use.
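To see which drivers are actually installed, pyodbc provides pyodbc.drivers(), which returns the driver names registered with the driver manager. The sketch below keeps the selection logic in a pure function so it can be tried without pyodbc or a database; pick_driver, SQL_SERVER_DRIVERS, and the sample list are hypothetical names used only for illustration.

```python
def pick_driver(installed, preferred):
    """Return the first name in `preferred` that is installed, else None."""
    available = set(installed)
    for name in preferred:
        if name in available:
            return name
    return None


# Hypothetical preference order: newest SQL Server driver first.
SQL_SERVER_DRIVERS = [
    "ODBC Driver 18 for SQL Server",
    "ODBC Driver 17 for SQL Server",
    "SQL Server",
]

# With pyodbc installed, the real list would come from pyodbc.drivers():
#     import pyodbc
#     driver = pick_driver(pyodbc.drivers(), SQL_SERVER_DRIVERS)
sample_installed = ["SQL Server", "ODBC Driver 17 for SQL Server"]
chosen = pick_driver(sample_installed, SQL_SERVER_DRIVERS)
print(chosen)
```

If the function returns None, no suitable driver is registered and the connection attempt would fail, so it is worth checking before building the connection string.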

Approach 2: The Legacy Way (odbc)

There is also an older odbc module. It is not part of the standard library: it ships with the pywin32 package and runs only on Windows. It is discouraged for new projects because it was an early attempt at an ODBC interface that is difficult to use, poorly documented, and lacks the features and community support of pyodbc.

⚠️ Warning: Avoid using import odbc. Stick with pyodbc.

Practical Guide with pyodbc

Let's walk through the most common tasks using pyodbc.

Step 1: Connecting to a Database

The connection string is the most critical part. Its format depends on your database and driver. The general structure is:

DRIVER={...};SERVER=...;DATABASE=...;UID=...;PWD=...
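One way to assemble that structure from separate settings is a small helper like the sketch below. It assumes the usual ODBC convention that a value containing a semicolon or braces is wrapped in braces, with any closing brace doubled; build_connection_string and all the values passed to it are hypothetical placeholders, not part of pyodbc.

```python
def build_connection_string(params):
    """Join keyword/value pairs into an ODBC-style connection string."""
    parts = []
    for key, value in params.items():
        text = str(value)
        # The driver name is conventionally braced; other values only
        # need braces when they contain characters that would break parsing.
        needs_braces = key.upper() == "DRIVER" or any(ch in text for ch in ";{}")
        if needs_braces:
            text = "{" + text.replace("}", "}}") + "}"
        parts.append(f"{key}={text}")
    return ";".join(parts) + ";"


# Placeholder settings for illustration only.
settings = {
    "DRIVER": "ODBC Driver 18 for SQL Server",
    "SERVER": "localhost",
    "DATABASE": "your_database_name",
    "UID": "your_username",
    "PWD": "p;w}d",
}
connection_string = build_connection_string(settings)
print(connection_string)
```

Building the string from a dictionary keeps credentials out of string literals scattered through the code and makes it harder to forget the escaping for passwords with special characters.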

Example: Connecting to Microsoft SQL Server

import pyodbc

# --- Connection String ---
# Find the exact name of your driver in the ODBC Data Source Administrator.
# On Windows: search for "ODBC Data Sources (64-bit)" and look under the "Drivers" tab.
# Common SQL Server driver names:
#   - ODBC Driver 18 for SQL Server
#   - ODBC Driver 17 for SQL Server
#   - SQL Server
connection_string = (
    "DRIVER={ODBC Driver 18 for SQL Server};"
    "SERVER=your_server_name;"  # e.g., "localhost", "my-server.database.windows.net"
    "DATABASE=your_database_name;"
    "UID=your_username;"
    "PWD=your_password;"
)

conn = None
try:
    # Establish the connection
    conn = pyodbc.connect(connection_string)
    print("Connection successful!")

    # Create a cursor object to execute queries
    cursor = conn.cursor()

    # --- Your database operations go here ---
    # Example: Execute a simple query
    cursor.execute("SELECT @@VERSION AS SQL_Version")

    # Fetch the result
    row = cursor.fetchone()
    if row:
        print(f"SQL Server Version: {row.SQL_Version}")
except pyodbc.Error as e:
    print(f"Error connecting to the database: {e}")
finally:
    # Ensure the connection is closed, even if connect() or the query failed
    if conn is not None:
        conn.close()
        print("Connection closed.")
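The try/finally pattern for closing a connection can also be written with contextlib.closing from the standard library. The sketch below uses sqlite3 as a stand-in so it runs without an ODBC driver; this is an assumption made for the example, and it relies only on the connection having a close() method, which pyodbc connections also have.

```python
import sqlite3
from contextlib import closing

# sqlite3 stands in for pyodbc here. With pyodbc the line would read:
#     with closing(pyodbc.connect(connection_string)) as conn:
with closing(sqlite3.connect(":memory:")) as conn:
    cursor = conn.cursor()
    cursor.execute("SELECT 1 AS answer")
    answer = cursor.fetchone()[0]

# The connection is closed here, whether or not the block raised.
print(answer)
```

Note that using a pyodbc connection directly in a with statement commits at the end of the block but does not close the connection, which is why closing() is used here.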

Step 2: Executing a Query and Fetching Results

You use the cursor object to run SQL commands.

# Assuming an open 'conn' and 'cursor' created as in the previous example
try:
    # Execute a query to get all users from a 'Users' table
    cursor.execute("SELECT id, name, email FROM Users")

    # --- Ways to fetch data ---
    # 1. Fetch one row at a time
    print("\n--- Fetching one by one ---")
    while True:
        row = cursor.fetchone()
        if not row:
            break
        print(f"ID: {row.id}, Name: {row.name}, Email: {row.email}")

    # 2. Fetch all rows at once (be careful with large datasets!)
    cursor.execute("SELECT id, name, email FROM Users")
    all_rows = cursor.fetchall()
    print("\n--- Fetching all at once ---")
    for row in all_rows:
        print(f"ID: {row.id}, Name: {row.name}, Email: {row.email}")

    # 3. Fetch many rows
    cursor.execute("SELECT id, name, email FROM Users")
    some_rows = cursor.fetchmany(5)  # Fetch the first 5 rows
    print("\n--- Fetching many (5) ---")
    for row in some_rows:
        print(f"ID: {row.id}, Name: {row.name}, Email: {row.email}")
except pyodbc.Error as e:
    print(f"Error executing query: {e}")
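For large result sets, fetchmany() can be wrapped in a generator so rows are processed one at a time while being pulled in fixed-size batches. The following is a minimal sketch; iter_rows is a hypothetical helper, and sqlite3 stands in for pyodbc so the example runs anywhere (both cursors provide fetchmany()).

```python
import sqlite3


def iter_rows(cursor, batch_size=1000):
    """Yield rows one at a time while fetching them in batches."""
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break
        yield from batch


# sqlite3 stands in for pyodbc; the table and data are made up for the demo.
conn = sqlite3.connect(":memory:")
cursor = conn.cursor()
cursor.execute("CREATE TABLE Users (id INTEGER PRIMARY KEY, name TEXT)")
cursor.executemany(
    "INSERT INTO Users (name) VALUES (?)",
    [(f"user{i}",) for i in range(7)],
)

cursor.execute("SELECT id, name FROM Users ORDER BY id")
names = [name for _id, name in iter_rows(cursor, batch_size=3)]
print(names)
conn.close()
```

This keeps memory use bounded by the batch size rather than by the size of the whole result, which is the main risk with fetchall().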

Step 3: Using Parameters (Preventing SQL Injection)

Never use Python string formatting (the % operator, str.format(), or f-strings) to insert variables into your SQL queries. This is a major security risk. Always use parameterized queries.

# --- SAFE WAY: Using Parameters ---
user_id_to_find = 101
search_term = "John"

try:
    # Using a question mark as a placeholder
    cursor.execute("SELECT * FROM Users WHERE id = ?", user_id_to_find)
    user = cursor.fetchone()
    if user:
        print(f"\nFound user by ID: {user.name}")

    # Using several placeholders: values are bound in order
    # (pyodbc supports only "?" markers, not named placeholders)
    cursor.execute(
        "SELECT * FROM Users WHERE name LIKE ? AND email LIKE ?",
        f"%{search_term}%",
        "%@example.com%",
    )
    users_found = cursor.fetchall()
    print(f"\nFound {len(users_found)} users named like '{search_term}' with @example.com email.")
except pyodbc.Error as e:
    print(f"Error with parameterized query: {e}")
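To make the risk concrete, the sketch below runs the same hostile input through a string-built query and a parameterized one. It uses sqlite3 as a stand-in for pyodbc (an assumption made so the example runs without a driver); both use "?" placeholders. The table, data, and input are invented for the demonstration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cursor = conn.cursor()
cursor.execute("CREATE TABLE Users (id INTEGER PRIMARY KEY, name TEXT)")
cursor.executemany("INSERT INTO Users (name) VALUES (?)", [("Alice",), ("Bob",)])

# Input crafted to turn the WHERE clause into a condition that is always true.
malicious = "nobody' OR '1'='1"

# UNSAFE: the input becomes part of the SQL text itself.
unsafe_sql = f"SELECT name FROM Users WHERE name = '{malicious}'"
unsafe_rows = cursor.execute(unsafe_sql).fetchall()

# SAFE: the input is bound as a value and compared literally.
safe_rows = cursor.execute(
    "SELECT name FROM Users WHERE name = ?", (malicious,)
).fetchall()

print(len(unsafe_rows), len(safe_rows))
conn.close()
```

The string-built query returns every row in the table, while the parameterized query returns none because no user has that literal name.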

Step 4: Inserting, Updating, and Deleting Data (DML)

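For commands that modify data (INSERT, UPDATE, DELETE), you must call conn.commit() to save the changes to the database, unless the connection was opened with autocommit=True. pyodbc connections start with autocommit off, so uncommitted changes are discarded when the connection closes.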

# --- Inserting Data ---
new_user_name = "Alice"
new_user_email = "alice@example.com"

try:
    # Use parameters to prevent SQL injection
    cursor.execute(
        "INSERT INTO Users (name, email) VALUES (?, ?)",
        new_user_name,
        new_user_email,
    )
    # Commit the transaction to make the change permanent
    conn.commit()
    print(f"\nSuccessfully inserted user: {new_user_name}")
except pyodbc.Error as e:
    # If an error occurs, roll back any changes
    conn.rollback()
    print(f"Error inserting user: {e}")

# --- Updating Data ---
user_id_to_update = 101
new_email = "alice.new@example.com"

try:
    cursor.execute(
        "UPDATE Users SET email = ? WHERE id = ?",
        new_email,
        user_id_to_update,
    )
    conn.commit()
    print(f"\nSuccessfully updated email for user ID {user_id_to_update}")
except pyodbc.Error as e:
    conn.rollback()
    print(f"Error updating user: {e}")
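The commit/rollback pairing matters most when several statements must succeed or fail together. The sketch below illustrates this with sqlite3 standing in for pyodbc (an assumption so the example runs without a database server); with pyodbc the except clause would catch pyodbc.Error instead. The table and rows are invented for the demonstration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cursor = conn.cursor()
cursor.execute("CREATE TABLE Users (id INTEGER PRIMARY KEY, email TEXT UNIQUE)")
conn.commit()

# The third row repeats an email, so it violates the UNIQUE constraint.
new_users = [
    (1, "a@example.com"),
    (2, "b@example.com"),
    (3, "a@example.com"),
]

try:
    for user in new_users:
        cursor.execute("INSERT INTO Users (id, email) VALUES (?, ?)", user)
    conn.commit()  # Reached only if every insert succeeded
except sqlite3.IntegrityError:
    conn.rollback()  # Undo the inserts that ran before the failure

remaining = cursor.execute("SELECT COUNT(*) FROM Users").fetchone()[0]
print(remaining)
conn.close()
```

Because the commit happens once after the loop, the failed third insert causes the first two to be rolled back as well, leaving the table empty.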

Common pyodbc Features

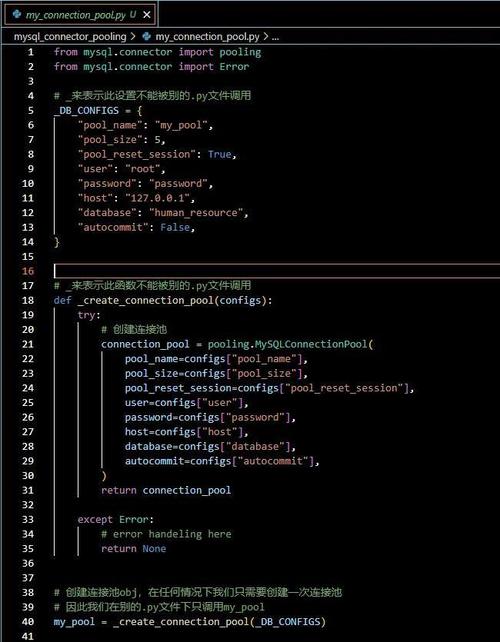

Connection Pooling

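Reconnecting to the database for every request is slow. Connection pooling allows closed connections to be reused. With pyodbc, pooling is handled by the ODBC driver manager rather than by keywords in the connection string. It is controlled by the module-level pyodbc.pooling attribute, which is True by default and must be set before the first connection is opened.

import pyodbc

# Pooling is on by default. To turn it off, set this before the first connect() call:
# pyodbc.pooling = False

connection_string = "DRIVER={...};SERVER=...;DATABASE=...;UID=...;PWD=...;"

# Connections opened with the same string can be served
# from the driver manager's pool.
conn1 = pyodbc.connect(connection_string)
conn2 = pyodbc.connect(connection_string)

# ... do work ...

# Where pooling is active, closing hands the underlying connection back to the pool.
conn1.close()
conn2.close()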

Fetching as Dictionaries

By default, pyodbc returns rows as pyodbc.Row objects, which support access by index and by column name. To get plain dictionaries, pair each row with the column names from cursor.description.

conn = pyodbc.connect(connection_string, autocommit=True)
cursor = conn.cursor()
cursor.execute("SELECT name, email FROM Users")

# cursor.description holds one tuple per column; its first item is the column name
columns = [column[0] for column in cursor.description]
rows_as_dicts = [dict(zip(columns, row)) for row in cursor.fetchall()]
print(rows_as_dicts)
# Example output: [{'name': 'Alice', 'email': 'alice.new@example.com'}, ...]

pyodbc vs. Other Database Libraries (e.g., psycopg2, pymysql)

| Feature | pyodbc | psycopg2 (PostgreSQL) | pymysql (MySQL) |
|---|---|---|---|
| Purpose | Universal ODBC | Native PostgreSQL Driver | Native MySQL Driver |
| Pros | Works with any database that has an ODBC driver; enterprise standard; mature and feature-rich. | Excellent performance and feature set for PostgreSQL; native integration, often feels "Pythonic"; very popular for Postgres. | Simple and easy to use for MySQL; lightweight. |
| Cons | Can be slightly more complex to set up (drivers, connection strings); abstraction layer can have a small performance overhead. | Only works with PostgreSQL. | Only works with MySQL. |
| When to Use | When you need to connect to multiple database types, use an enterprise database (SQL Server, Oracle), or are required to use ODBC. | When you are exclusively working with PostgreSQL. | When you are exclusively working with MySQL. |

Summary

For "Python odbchelper", the short answer is to use the pyodbc library.

- Install: pip install pyodbc

- Get Driver: Download and install the correct ODBC driver for your database.

- Connect: Build a connection string and use pyodbc.connect().

- Cursor: Get a cursor to execute SQL.

- Query: Use cursor.execute() with parameters for safety.

- Fetch: Use fetchone(), fetchall(), or fetchmany().

- Commit: Call conn.commit() after INSERT, UPDATE, or DELETE operations.

- Close: Always close your connection in a finally block.