Of course! The term "anchorlist" isn't a standard Python library, but it's a very descriptive name that suggests a few common programming tasks. I'll cover the most likely interpretations and provide solutions for each.

The most common scenarios for "anchorlist" are:

- A list of anchor tags (<a>) from an HTML document. (Web Scraping)
- A data structure that holds multiple "anchor" points. (General Programming)
- A custom class to manage a list of anchor objects. (Object-Oriented Programming)

Let's break down each scenario with clear examples.

Scenario 1: Extracting a List of Anchor Tags from HTML (Web Scraping)

This is the most frequent use case. You have an HTML string or a webpage, and you want to find all the links (<a> tags) and get their href (URL) and text.

We'll use the popular BeautifulSoup library, which is perfect for this.

Step 1: Install BeautifulSoup

If you don't have it installed, open your terminal or command prompt and run:

pip install beautifulsoup4
# The example below uses the lxml parser; Python's built-in 'html.parser' also works if you skip this
pip install lxml
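If installing third-party packages is not an option, the standard library can handle a simpler version of the same job. The following is only a rough sketch built on `html.parser.HTMLParser`; the class name `AnchorCollector` is made up for illustration, and the sketch is far less forgiving of messy HTML than BeautifulSoup.

```python
from html.parser import HTMLParser


class AnchorCollector(HTMLParser):
    """Collects href and text for every <a> tag that has an href (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.anchors = []      # finished anchors
        self._current = None   # anchor currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            # Track only anchors that actually carry an href
            self._current = {"href": href, "text": ""} if href else None

    def handle_data(self, data):
        # Accumulate text only while inside a tracked <a> tag
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.anchors.append(self._current)
            self._current = None


collector = AnchorCollector()
collector.feed('<p><a href="https://python.org">Python</a> <a>no href</a></p>')
print(collector.anchors)  # [{'href': 'https://python.org', 'text': 'Python'}]
```

For anything beyond small, well-formed snippets, the BeautifulSoup approach in the next step is likely the easier route.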

Step 2: Python Code to Find Anchors

Here’s how you can parse HTML and extract a list of anchor information.

import json

from bs4 import BeautifulSoup

# A sample HTML string. This could also be the content of a webpage.
html_doc = """
<html>
<head>
<title>A Simple Page</title>
</head>
<body>
<h1>Welcome to the Page</h1>
<p>Here are some links:</p>
<ul>
<li><a href="https://python.org" class="main-link">Python Official Site</a></li>
<li><a href="/about-us">About Us</a></li>
<li><a href="https://google.com" id="search-link">Google</a></li>
<li><a>This is a link without an href attribute</a></li>
</ul>
<div>
<a href="https://github.com">GitHub</a>
</div>
</body>
</html>
"""

# 1. Create a BeautifulSoup object
# 'lxml' is the parser we installed. You could also use 'html.parser'.
soup = BeautifulSoup(html_doc, 'lxml')

# 2. Find all anchor tags
# soup.find_all('a') returns a list of all <a> tag objects
anchor_tags = soup.find_all('a')

print(f"Found {len(anchor_tags)} anchor tags.\n")

# 3. Process the list to get the desired information
# We'll create a list of dictionaries, which is a very common and useful format.
anchor_list = []

for tag in anchor_tags:
    # Get the text content of the tag, stripping extra whitespace
    text = tag.get_text(strip=True)

    # Get the 'href' attribute. If it doesn't exist, get None.
    href = tag.get('href')  # Use .get() to avoid errors if 'href' is missing

    # Only add to the list if it has a non-empty href
    if href:
        anchor_info = {
            'text': text,
            'href': href,
            'title': tag.get('title', 'No title')  # Get title or default
        }
        anchor_list.append(anchor_info)

# Print the final, clean list
print(json.dumps(anchor_list, indent=2))

Output:

Found 5 anchor tags.

[
  {
    "text": "Python Official Site",
    "href": "https://python.org",
    "title": "No title"
  },
  {
    "text": "About Us",
    "href": "/about-us",
    "title": "No title"
  },
  {
    "text": "Google",
    "href": "https://google.com",
    "title": "No title"
  },
  {
    "text": "GitHub",
    "href": "https://github.com",
    "title": "No title"
  }
]
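Note that the list above mixes absolute URLs with a relative one (`/about-us`). If you plan to visit the links, you will probably want to resolve them against the address the page came from. Here is a minimal sketch using the standard library's `urllib.parse.urljoin`; `base_url` is an assumed, hypothetical page address, and the list is a trimmed copy of the output above.

```python
from urllib.parse import urljoin

# Assumption: the HTML was fetched from this (hypothetical) address
base_url = "https://example.com/docs/"

# Same shape as the anchor_list built above, trimmed to two entries
anchor_list = [
    {"text": "Python Official Site", "href": "https://python.org"},
    {"text": "About Us", "href": "/about-us"},
]

# urljoin leaves absolute URLs alone and resolves relative ones against base_url
absolute_links = [
    {**anchor, "href": urljoin(base_url, anchor["href"])}
    for anchor in anchor_list
]

for anchor in absolute_links:
    print(anchor["text"], "->", anchor["href"])
# Python Official Site -> https://python.org
# About Us -> https://example.com/about-us
```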

Scenario 2: A General "Anchor List" Data Structure

Sometimes, "anchorlist" might just refer to a list used to store key reference points or "anchors" in your data. For example, storing line numbers, IDs, or indices that are important for navigation or processing.

Here, "anchorlist" is just a well-named Python list.
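To make the "navigation" idea concrete, here is a small sketch, assuming the anchors are sorted indices into a list of lines. The sample `lines` and the helper `nearest_anchor` are hypothetical names chosen for illustration. It uses the standard `bisect` module to jump from any position back to the closest preceding anchor.

```python
from bisect import bisect_right

# Hypothetical document: a list of lines where headings start with '#'
lines = [
    "# Intro",
    "Some text.",
    "# Setup",
    "Install things.",
    "More setup notes.",
    "# Usage",
    "Run it.",
]

# The "anchorlist": indices of the lines worth jumping back to (already sorted)
heading_anchors = [i for i, line in enumerate(lines) if line.startswith("#")]


def nearest_anchor(anchors, position):
    """Return the last anchor at or before `position`, or None if there is none."""
    idx = bisect_right(anchors, position) - 1
    return anchors[idx] if idx >= 0 else None


section_start = nearest_anchor(heading_anchors, 4)
print(heading_anchors)       # [0, 2, 5]
print(lines[section_start])  # # Setup
```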

Example: Storing Line Numbers in a File

Imagine you're parsing a log file and want to keep track of lines that contain errors.

# Sample log data
log_data = """
INFO: System started.
INFO: Loading user profiles.
ERROR: Failed to connect to database.
INFO: User 'admin' logged in.
WARNING: Disk space is low.
ERROR: Could not find config file.
"""

# An "anchorlist" to store the line numbers of errors
error_anchors = []

# Strip the leading newline so the first log entry is line 1,
# then split into lines and enumerate starting from 1
for line_number, line in enumerate(log_data.strip().splitlines(), 1):
    if "ERROR" in line:
        error_anchors.append(line_number)

print(f"Found errors on lines: {error_anchors}")

# Now you can use this list to go back and process those specific lines
for anchor_line in error_anchors:
    print(f"--- Processing error on line {anchor_line} ---")
    # Here you would fetch the actual line from the source and handle it

Output:

Found errors on lines: [3, 6]

--- Processing error on line 3 ---

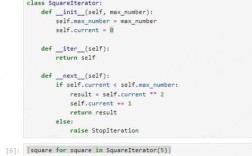

--- Processing error on line 6 ---

Scenario 3: A Custom AnchorList Class (OOP Approach)

For more complex applications, you might want to create a dedicated class to manage your anchors. This provides better organization and functionality (methods) instead of just a raw list.

When a collection of related objects needs its own behavior (validation, searching, filtering), this is usually the most maintainable way to handle it.
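One thing a class buys you over a raw list is a single place to enforce rules such as "every anchor needs an href". Here is a minimal sketch of that idea using `dataclasses`. The names `Link` and `LinkCollection` are hypothetical, and the fuller example that follows uses plain classes instead.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Link:
    """One anchor; frozen so instances can't be modified after creation."""
    text: str
    href: str


@dataclass
class LinkCollection:
    """Wraps a list so validation lives in one place (hypothetical helper)."""
    _links: list = field(default_factory=list)

    def add(self, text, href):
        # Reject empty hrefs up front; report whether the link was stored
        if not href:
            return False
        self._links.append(Link(text, href))
        return True

    def __len__(self):
        return len(self._links)


links = LinkCollection()
links.add("Python", "https://python.org")
links.add("Broken", "")
print(len(links))  # 1
```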

Example: A Class to Manage a List of Links

class Anchor:
    """Represents a single HTML anchor link."""

    def __init__(self, text, href, title=""):
        self.text = text
        self.href = href
        self.title = title

    def __repr__(self):
        # A clean string representation for printing the object
        return f"Anchor(text='{self.text}', href='{self.href}')"


class AnchorList:
    """A class to manage a collection of Anchor objects."""

    def __init__(self):
        # The core data is a private list
        self._anchors = []

    def add_anchor(self, text, href, title=""):
        """Adds a new anchor to the list."""
        if href:
            new_anchor = Anchor(text, href, title)
            self._anchors.append(new_anchor)
            print(f"Added: {new_anchor}")
        else:
            print(f"Skipped: '{text}' - No href provided.")

    def get_all_links(self):
        """Returns a list of just the hrefs."""
        return [anchor.href for anchor in self._anchors]

    def find_by_text(self, text_to_find):
        """Finds and returns anchors whose text contains a substring."""
        return [anchor for anchor in self._anchors if text_to_find.lower() in anchor.text.lower()]

    def __len__(self):
        """Allows using len() on the AnchorList object."""
        return len(self._anchors)

    def __iter__(self):
        """Allows looping over the AnchorList object directly."""
        return iter(self._anchors)


# --- How to use the custom class ---
my_links = AnchorList()

my_links.add_anchor("Python", "https://python.org")
my_links.add_anchor("Google", "https://google.com", title="A search engine")
my_links.add_anchor("Wikipedia", "https://wikipedia.org")

# This one will be skipped
my_links.add_anchor("Invalid Link", "")

print("\n--- All Links ---")
for link in my_links:
    print(link)

print("\n--- Getting all URLs ---")
print(my_links.get_all_links())

print("\n--- Searching for 'Python' ---")
python_links = my_links.find_by_text("Python")
print(python_links)

print(f"\nTotal number of anchors: {len(my_links)}")

Output:

Added: Anchor(text='Python', href='https://python.org')

Added: Anchor(text='Google', href='https://google.com')

Added: Anchor(text='Wikipedia', href='https://wikipedia.org')

Skipped: 'Invalid Link' - No href provided.

--- All Links ---

Anchor(text='Python', href='https://python.org')

Anchor(text='Google', href='https://google.com')

Anchor(text='Wikipedia', href='https://wikipedia.org')

--- Getting all URLs ---

['https://python.org', 'https://google.com', 'https://wikipedia.org']

--- Searching for 'Python' ---

[Anchor(text='Python', href='https://python.org')]

Total number of anchors: 3

Summary: Which one should you use?

| Scenario | Best Approach | Why? |
|---|---|---|
| Extracting links from HTML | BeautifulSoup | It's a widely used, robust tool for parsing HTML. It tolerates malformed markup and provides a clean API. |
| Simple storage of reference points | A well-named Python list | It's simple, direct, and requires no extra libraries. Perfect for quick scripts or when the logic is straightforward. |
| Managing a complex collection of anchors | A custom class (AnchorList) | It scales and is easier to maintain. It encapsulates the data and the logic that operates on it, making your code cleaner and easier to extend. |