Of course! "Python extracting" can mean many different things, as Python is a powerhouse for data manipulation. Let's break it down into the most common scenarios, from simple string operations to complex data extraction.

I'll structure this answer by use case, starting with the simplest and moving to the more advanced.

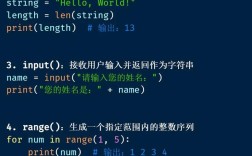

Extracting Substrings from a String

This is the most fundamental type of extraction. You have a piece of text and want to get a part of it.

a) Using Slicing (Most Common)

Python strings are sequences, so you can use slicing with square brackets [].

Syntax: string[start:stop:step]

start: The index to start at (inclusive). Defaults to 0.

stop: The index to end at (exclusive). Defaults to the end of the string.

step: The stride (e.g., 2 for every other character). Defaults to 1.

Example:

    text = "Hello, Python World!"

    # Extract the first 5 characters
    print(text[0:5])   # Output: Hello

    # Extract from index 7 to the end
    print(text[7:])    # Output: Python World!

    # Extract from the beginning up to (but not including) index 5
    print(text[:5])    # Output: Hello

    # Extract the last 5 characters
    print(text[-5:])   # Output: orld!

    # Extract every second character
    print(text[::2])   # Output: Hlo yhnWrd
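Slice indices don't have to be hard-coded. Here is a minimal sketch, assuming you know the word you are looking for, that computes the indices with str.index() and also shows a negative step. The variable names are just for illustration.

```python
text = "Hello, Python World!"

# Locate a known word, then slice it out using computed indices.
word = "Python"
start = text.index(word)  # raises ValueError if the word is absent
extracted = text[start:start + len(word)]
print(extracted)

# A negative step walks the string backwards, which reverses it here.
reversed_text = text[::-1]
print(reversed_text)
```

If the word might be missing, str.find() returns -1 instead of raising, so check the result before slicing.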

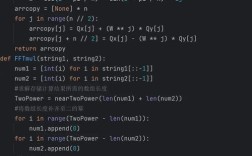

b) Using Regular Expressions (Regex) (Most Powerful)

For pattern-based extraction (e.g., extracting all email addresses, phone numbers, or numbers from a string), the re module is essential.

Example: Extracting all numbers from a string.

    import re

    text = "My order number is 12345 and my invoice number is 67890."

    # findall() returns a list of all non-overlapping matches
    numbers = re.findall(r'\d+', text)  # \d+ matches one or more digits
    print(numbers)  # Output: ['12345', '67890']

    # Example: Extracting words that start with 'P'
    greeting = "Hello, Python World!"
    words_starting_with_p = re.findall(r'\bP\w+', greeting)
    print(words_starting_with_p)  # Output: ['Python']
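The same findall() approach extends to the email addresses mentioned above. This is a minimal sketch, assuming a deliberately simplified pattern and made-up sample addresses. Real-world address validation is considerably more involved, so treat the pattern as a starting point rather than a complete solution.

```python
import re

# Hypothetical sample text; the addresses are made up for illustration.
message = "Contact alice@example.com or bob.smith@mail.example.org for details."

# Simplified pattern (an assumption, not a full validator):
# local part, "@", one or more domain labels, then a dot and a 2+ letter TLD.
email_pattern = r'[\w.+-]+@[\w-]+(?:\.[\w-]+)*\.[A-Za-z]{2,}'

emails = re.findall(email_pattern, message)
print(emails)
```

The group is written as non-capturing, (?:...), so that findall() returns the whole match instead of just the group's contents.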

Extracting Data from Files

Python makes it easy to read and parse structured files.

a) Extracting from a CSV (Comma-Separated Values) File

The built-in csv module is perfect for this.

Example: Imagine you have a file users.csv:

    name,age,city
    Alice,30,New York
    Bob,25,Los Angeles
    Charlie,35,Chicago

Code to extract all names:

    import csv

    names = []

    # newline='' is what the csv module docs recommend when opening files
    with open('users.csv', 'r', newline='') as file:
        csv_reader = csv.reader(file)
        next(csv_reader)  # Skip the header row
        for row in csv_reader:
            names.append(row[0])  # Extract the first element (name)

    print(names)
    # Output: ['Alice', 'Bob', 'Charlie']
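If you would rather refer to columns by name than by position, csv.DictReader is worth a look. The sketch below assumes the same sample data, inlined through io.StringIO so it runs without a file on disk. The age threshold is an arbitrary choice for illustration.

```python
import csv
import io

# Inline copy of the sample data so the sketch runs without users.csv.
csv_text = """name,age,city
Alice,30,New York
Bob,25,Los Angeles
Charlie,35,Chicago
"""

# DictReader uses the header row as keys, so no manual header skipping.
reader = csv.DictReader(io.StringIO(csv_text))

# Every value arrives as a string, so convert age before comparing.
older_names = [row["name"] for row in reader if int(row["age"]) > 28]
print(older_names)
```

With a real file, pass the open file object to DictReader in place of the StringIO wrapper.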

b) Extracting from a JSON (JavaScript Object Notation) File

JSON is extremely common for APIs and configuration files. The json module handles this natively.

Example: Imagine you have a file data.json:

    {
      "employees": [
        {
          "name": "David",
          "department": "Engineering",
          "skills": ["Python", "Java"]
        },
        {
          "name": "Eve",
          "department": "Marketing",
          "skills": ["SEO", "Content"]
        }
      ]
    }

Code to extract employee names:

    import json

    with open('data.json', 'r') as file:
        data = json.load(file)  # Load the JSON data into a Python dictionary

    employee_names = []
    for employee in data['employees']:
        employee_names.append(employee['name'])

    print(employee_names)
    # Output: ['David', 'Eve']
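Nested values come out the same way, one key or index at a time. Here is a minimal sketch that pulls the skills lists out of the sample data, loaded from a string with json.loads(). The third employee, "Frank", is a hypothetical addition to show how dict.get() copes with a missing key.

```python
import json

# Sample data inlined as a string; "Frank" is a made-up record with no skills key.
json_text = """
{"employees": [
  {"name": "David", "department": "Engineering", "skills": ["Python", "Java"]},
  {"name": "Eve", "department": "Marketing", "skills": ["SEO", "Content"]},
  {"name": "Frank", "department": "Sales"}
]}
"""

data = json.loads(json_text)

# Map each name to its skills, falling back to an empty list if the key is absent.
skills_by_name = {e["name"]: e.get("skills", []) for e in data["employees"]}

# Flatten every skills list into one list.
all_skills = [skill for e in data["employees"] for skill in e.get("skills", [])]

print(skills_by_name)
print(all_skills)
```

Using employee["skills"] directly would raise a KeyError on the record that lacks the key, which is why the sketch uses get() with a default.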

c) Extracting from an XML File

The xml.etree.ElementTree module is the standard library for parsing XML.

Example: Imagine you have a file books.xml:

    <catalog>
      <book id="bk101">
        <author>Gambardella, Matthew</author>
        <title>XML Developer's Guide</title>
      </book>
      <book id="bk102">
        <author>Ralls, Kim</author>
        <title>Midnight Rain</title>
      </book>
    </catalog>

Code to extract all book titles:

    import xml.etree.ElementTree as ET

    tree = ET.parse('books.xml')
    root = tree.getroot()

    titles = []
    for book in root.findall('book'):  # Find all 'book' elements
        title_element = book.find('title')  # Find the 'title' element within each book
        titles.append(title_element.text)  # Get the text content of the title

    print(titles)
    # Output: ["XML Developer's Guide", 'Midnight Rain']
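Attributes such as id are read with Element.get(), and findtext() is a shortcut for "find the child, then take its text". The sketch below assumes the same catalog, parsed from a string with ET.fromstring() so it runs without a file. The dictionary layout is just one possible way to collect the results.

```python
import xml.etree.ElementTree as ET

# Same sample catalog, inlined so the sketch runs without books.xml.
xml_text = """<catalog>
  <book id="bk101">
    <author>Gambardella, Matthew</author>
    <title>XML Developer's Guide</title>
  </book>
  <book id="bk102">
    <author>Ralls, Kim</author>
    <title>Midnight Rain</title>
  </book>
</catalog>"""

root = ET.fromstring(xml_text)

books = []
for book in root.findall("book"):
    books.append({
        "id": book.get("id"),               # attribute value, or None if missing
        "author": book.findtext("author"),  # child text, or None if missing
        "title": book.findtext("title"),
    })

for entry in books:
    print(entry)
```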

Extracting Data from the Web (Web Scraping)

This involves fetching a web page and extracting information from its HTML content. The requests and BeautifulSoup libraries are a widely used combination for this.

Step 1: Install the libraries

pip install requests beautifulsoup4

Step 2: Write the Python script

Let's say we want to extract every quote and its author from the homepage of a site built for scraping practice.

    import requests
    from bs4 import BeautifulSoup

    # URL of the page to scrape
    url = 'http://quotes.toscrape.com/'  # A simple site for scraping practice

    try:
        # 1. Fetch the HTML content of the page
        response = requests.get(url, timeout=10)  # Time out rather than hang indefinitely
        response.raise_for_status()  # Raise an exception for bad status codes (4xx or 5xx)

        # 2. Parse the HTML with BeautifulSoup
        soup = BeautifulSoup(response.text, 'html.parser')

        # 3. Extract the desired data
        # Find all the <div> elements with the class 'quote'
        quotes = soup.find_all('div', class_='quote')

        extracted_data = []
        for quote in quotes:
            # Find the <span> with class 'text' for the quote text
            text = quote.find('span', class_='text').get_text(strip=True)
            # Find the <small> with class 'author' for the author
            author = quote.find('small', class_='author').get_text(strip=True)
            extracted_data.append({'text': text, 'author': author})

        # Print the extracted data
        for item in extracted_data:
            print(f'"{item["text"]}" - {item["author"]}')
    except requests.exceptions.RequestException as e:
        print(f"Error fetching the URL: {e}")

Extracting Data from PDFs

Extracting text from PDFs can be tricky because PDFs are a presentation format, not a text format. The PyMuPDF (fitz) library is known for its speed and accuracy.

Step 1: Install the library

pip install PyMuPDF

Step 2: Write the Python script

    import re

    import fitz  # PyMuPDF

    pdf_path = 'example.pdf'
    doc = None

    try:
        # Open the PDF file
        doc = fitz.open(pdf_path)
        extracted_text = ""

        # Iterate through each page
        for page_num in range(len(doc)):
            page = doc.load_page(page_num)
            # Extract text from the page
            text = page.get_text()
            extracted_text += text + "\n"

        # Print the extracted text
        print(extracted_text)

        # You can now process this text string as you wish (e.g., with regex)
        # For example, find all dates in the format MM/DD/YYYY
        dates = re.findall(r'\b\d{1,2}/\d{1,2}/\d{4}\b', extracted_text)
        print("\nFound dates:", dates)
    except Exception as e:
        print(f"An error occurred: {e}")
    finally:
        # Always close the document if it was opened
        if doc is not None:
            doc.close()
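A regex match only tells you the text looks like a date. As a follow-up sketch, assuming the MM/DD/YYYY format used above, you can pass each match through datetime.strptime() to discard impossible dates. The sample string is a hypothetical stand-in for text extracted from a PDF.

```python
import re
from datetime import datetime

# Hypothetical stand-in for text extracted from a PDF.
extracted_text = "Invoice issued 03/15/2024, due 4/1/2024. Ref 99/99/2024 is not a date."

# Same pattern as in the PDF script: finds anything shaped like MM/DD/YYYY.
candidates = re.findall(r'\b\d{1,2}/\d{1,2}/\d{4}\b', extracted_text)

valid_dates = []
for candidate in candidates:
    try:
        # strptime raises ValueError for impossible dates such as month 99.
        valid_dates.append(datetime.strptime(candidate, "%m/%d/%Y").date())
    except ValueError:
        continue

print(candidates)
print(valid_dates)
```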

Summary: Which Tool to Use?

| Your Goal | Recommended Tool(s) | Why? |
|---|---|---|
| Get a fixed part of a string (e.g., first 5 chars) | String Slicing (my_string[0:5]) | Simple, fast, and built into Python. |
| Find patterns in text (e.g., emails, phone numbers) | Regular Expressions (re module) | The most powerful and flexible tool for pattern matching. |
| Work with CSV files | csv module | Built-in, easy to use, and handles edge cases like quoted commas. |
| Work with JSON files or API responses | json module | Built-in and directly maps JSON to Python dictionaries/lists. |
| Parse XML files | xml.etree.ElementTree | Built-in and part of the standard library. |
| Scrape data from websites | requests + BeautifulSoup | The de-facto standard. requests gets the page, BeautifulSoup parses it. |
| Extract text from PDFs | PyMuPDF (fitz) or pdfplumber | Specialized libraries designed to handle the complexities of PDFs. |