Of course! Here's a comprehensive guide to using PyTorch's fasterrcnn_resnet50_fpn for object detection. We'll cover what it is, how it works, and walk through a complete, practical code example for training and inference.

What is Faster R-CNN?

Faster R-CNN is a widely used two-stage object detection model. It is known for its high accuracy, though it is typically slower than single-stage models like YOLO or SSD.

The "Two-Stage" Process:

- Stage 1: Region Proposal Network (RPN)
  - The RPN slides over the feature map produced by a backbone network such as ResNet. At every position it scores and adjusts a fixed set of reference boxes called anchors. The best-scoring adjusted boxes become the candidate bounding boxes ("region proposals") that are likely to contain an object.
  - It outputs a set of boxes and an "objectness" score for each box (how likely it is to contain an object rather than background).

- Stage 2: Detection Head (RoI Pooling & Classification/Regression)
  - The proposed regions from the RPN are then used to extract fixed-size feature maps from the backbone's output. This is done using RoI Pooling (or the more modern RoI Align, which torchvision's implementation uses).
  - A second, smaller network takes these features and performs two tasks:
    - Classification: Determines which class of object (e.g., "person", "car", "dog") is inside the proposed box.
    - Bounding Box Regression: Refines the coordinates of the proposed box to make it a tight fit around the object.

Key Components Explained

`torchvision.models.detection.fasterrcnn_resnet50_fpn` is the specific implementation we'll use from the `torchvision` library. Its name breaks down as follows:

- `fasterrcnn`: The Faster R-CNN architecture.
- `resnet50`: The backbone network. ResNet-50 is a powerful convolutional neural network that extracts features from the input image.
- `fpn`: Feature Pyramid Network. This is a crucial addition. It creates a pyramid of feature maps at different scales, allowing the model to detect objects of various sizes more effectively.

- COCO Pre-trained Model: The model you get from `torchvision` is pre-trained on the massive COCO dataset, which contains 80 common object classes. This means it can already detect these classes out of the box.

- VOC Dataset: For our example, we'll use the popular [Pascal VOC dataset](http host://host.host/pascal-voc/), which has 20 classes. This is a great way to learn how to fine-tune a model on your own custom data.

Practical Code Example: Fine-tuning on Pascal VOC

We will perform the following steps:

- Setup: Install libraries and download the Pascal VOC dataset.

- Prepare Data: Create a custom PyTorch `Dataset` to load VOC images and annotations.

- Train the Model: Fine-tune the pre-trained Faster R-CNN on our VOC data.

- Inference: Use the trained model to detect objects in a new image.

Step 0: Setup

First, make sure you have PyTorch and Torchvision installed.

```bash
pip install torch torchvision
```

Step 1: Download Pascal VOC Dataset

We'll use the 2007 trainval split for simplicity.

```bash
# Create a directory for the data
mkdir -p data/voc

# Download the dataset (VOCtrainval_06-Nov-2007.tar)
# You can find it here: http host://host.host/VOCdevkit/
# Or use a direct link (this might change)
wget http host://host.host/VOCdevkit/VOCtrainval_06-Nov-2007.tar
tar -xvf VOCtrainval_06-Nov-2007.tar

# The archive extracts to VOCdevkit/VOC2007, so this leaves the data in data/voc/VOCdevkit/VOC2007
mv VOCdevkit data/voc/
```

Step 2: Prepare the Dataset

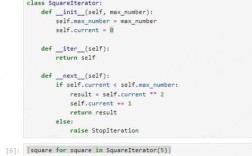

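We need to create a PyTorch `Dataset` class that loads the VOC XML annotations and converts them into the format Faster R-CNN expects. During training, the model takes a list of image tensors plus a list of target dictionaries, one per image. Each dictionary contains `boxes` (a float tensor of shape `[N, 4]` in absolute `[xmin, ymin, xmax, ymax]` pixel coordinates), `labels` (an int64 tensor of shape `[N]`), and, in this guide, an `image_id`.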
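To make that format concrete, the sketch below applies the same parsing logic as the dataset class to a small made-up annotation string, so it runs without the dataset on disk. `parse_voc_annotation` and `SAMPLE_XML` are hypothetical names for illustration. The XML only mimics VOC's `object`/`name`/`bndbox` layout, and its coordinates are invented.

```python
import xml.etree.ElementTree as ET

import torch

VOC_CLASSES = [
    'aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat',
    'chair', 'cow', 'diningtable', 'dog', 'horse', 'motorbike', 'person',
    'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor'
]
VOC_CLASS_TO_ID = {cls: i + 1 for i, cls in enumerate(VOC_CLASSES)}  # 0 = background

# A made-up annotation in VOC's XML layout (two objects).
SAMPLE_XML = """
<annotation>
  <object><name>dog</name>
    <bndbox><xmin>30</xmin><ymin>50</ymin><xmax>200</xmax><ymax>220</ymax></bndbox>
  </object>
  <object><name>person</name>
    <bndbox><xmin>210</xmin><ymin>40</ymin><xmax>330</xmax><ymax>300</ymax></bndbox>
  </object>
</annotation>
"""


def parse_voc_annotation(xml_text, image_id):
    """Hypothetical helper: VOC XML text -> Faster R-CNN target dict."""
    root = ET.fromstring(xml_text.strip())
    boxes, labels = [], []
    for obj in root.findall("object"):
        labels.append(VOC_CLASS_TO_ID[obj.find("name").text.strip()])
        bb = obj.find("bndbox")
        boxes.append([float(bb.find(k).text) for k in ("xmin", "ymin", "xmax", "ymax")])
    return {
        "boxes": torch.as_tensor(boxes, dtype=torch.float32).reshape(-1, 4),  # (N, 4), pixels
        "labels": torch.as_tensor(labels, dtype=torch.int64),                 # (N,)
        "image_id": torch.tensor([image_id]),
    }


target = parse_voc_annotation(SAMPLE_XML, image_id=0)
print(target["boxes"].shape, target["labels"].tolist())
```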

import torch

import torchvision

from torchvision import transforms

from torch.utils.data import Dataset, DataLoader

from PIL import Image

import xml.etree.ElementTree as ET

import os

import glob

# VOC Class names (20 classes)

VOC_CLASSES = [

'aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat',

'chair', 'cow', 'diningtable', 'dog', 'horse', 'motorbike', 'person',

'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor'

]

# Map class names to IDs

VOC_CLASS_TO_ID = {cls: i + 1 for i, cls in enumerate(VOC_CLASSES)} # +1 because 0 is background

VOC_ID_TO_CLASS = {i + 1: cls for i, cls in enumerate(VOC_CLASSES)}

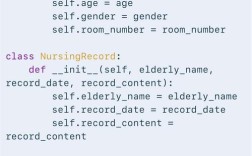

class VOCDetection(Dataset):

def __init__(self, root, year, image_set, transforms=None):

self.root = os.path.join(root, f"VOC{year}")

self.image_set = image_set

self.transforms = transforms

# Paths to annotation files

self.annotations = glob.glob(os.path.join(self.root, "Annotations", "*.xml"))

self.images = glob.glob(os.path.join(self.root, "JPEGImages", "*.jpg"))

def __getitem__(self, idx):

# Load image and annotation

img_path = self.images[idx]

ann_path = os.path.join(self.root, "Annotations", os.path.basename(img_path).replace(".jpg", ".xml"))

image = Image.open(img_path).convert("RGB")

# Parse XML annotation

tree = ET.parse(ann_path)

root = tree.getroot()

boxes = []

labels = []

for obj in root.findall('object'):

label = VOC_CLASS_TO_ID[obj.find('name').text]

labels.append(label)

bndbox = obj.find('bndbox')

xmin = float(bndbox.find('xmin').text)

ymin = float(bndbox.find('ymin').text)

xmax = float(bndbox.find('xmax').text)

ymax = float(bndbox.find('ymax').text)

boxes.append([xmin, ymin, xmax, ymax])

# Convert to tensors

boxes = torch.as_tensor(boxes, dtype=torch.float32)

labels = torch.as_tensor(labels, dtype=torch.int64)

# Create target dictionary

target = {}

target["boxes"] = boxes

target["labels"] = labels

target["image_id"] = torch.tensor([idx])

if self.transforms:

image = self.transforms(image)

return image, target

def __len__(self):

return len(self.images)

# Define transforms

# For training, we can add data augmentation like RandomHorizontalFlip

transform = transforms.Compose([

transforms.ToTensor(),

])

# Create dataset and dataloader

# Note: VOC uses 2007 trainval for training and 2007 test for testing

# For this example, we'll just use part of the 2007 trainval

dataset = VOCDetection(root='data/voc', year='2007', image_set='trainval', transforms=transform)

# For demonstration, let's use a subset of the data

# In a real scenario, you would use the full dataset

dataset = torch.utils.data.Subset(dataset, range(500))

# Use a custom collate_fn because our targets are dictionaries

def collate_fn(batch):

return tuple(zip(*batch))

dataloader = DataLoader(dataset, batch_size=2, shuffle=True, num_workers=4, collate_fn=collate_fn)

print(f"Dataset size: {len(dataset)}")
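The transform comment above mentions `RandomHorizontalFlip`, but torchvision's classic `transforms.RandomHorizontalFlip` flips only the image. After such a flip, `target["boxes"]` would point at the wrong regions. Below is a minimal box-aware alternative, similar in spirit to the flip in torchvision's detection reference scripts. It assumes images are already `(C, H, W)` tensors (i.e., it runs after `ToTensor()`). `RandomHorizontalFlipWithBoxes` is a hypothetical class, and the dataset above would need to call it on `(image, target)` pairs rather than through `transforms.Compose`. Newer torchvision releases also offer box-aware transforms in `torchvision.transforms.v2`.

```python
import random

import torch


class RandomHorizontalFlipWithBoxes:
    """Hypothetical transform: flips a (C, H, W) image tensor and its boxes together."""

    def __init__(self, p=0.5):
        self.p = p

    def __call__(self, image, target):
        if random.random() < self.p:
            width = image.shape[-1]
            image = image.flip(-1)  # mirror along the width axis
            boxes = target["boxes"].clone()
            # New xmin = W - old xmax, new xmax = W - old xmin (y coordinates unchanged)
            boxes[:, [0, 2]] = width - target["boxes"][:, [2, 0]]
            target = {**target, "boxes": boxes}
        return image, target


# Usage: p=1.0 forces the flip so the effect is easy to see.
image = torch.zeros(3, 50, 100)
image[:, :, :10] = 1.0  # bright stripe on the left edge
target = {"boxes": torch.tensor([[10.0, 20.0, 30.0, 40.0]]), "labels": torch.tensor([1])}
flipped_image, flipped_target = RandomHorizontalFlipWithBoxes(p=1.0)(image, target)
print(flipped_target["boxes"].tolist())  # [[70.0, 20.0, 90.0, 40.0]]
```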

Step 3: Train the Model

This is the core part. We will:

- Load a pre-trained Faster R-CNN model.

- Replace the classifier head to match our 20 VOC classes.

- Define an optimizer and a learning rate scheduler.

- Loop through the data, perform forward and backward passes, and update the model's weights.
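To see what the optimizer and scheduler settings used in the training code actually do over 10 epochs, this sketch steps the same `SGD` + `StepLR(step_size=3, gamma=0.1)` configuration on a dummy parameter that stands in for the model's weights. It records the learning rate in effect during each epoch.

```python
import torch

# A single dummy parameter stands in for the model's weights.
param = torch.nn.Parameter(torch.zeros(1))
optimizer = torch.optim.SGD([param], lr=0.005, momentum=0.9, weight_decay=0.0005)
lr_scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=3, gamma=0.1)

lrs = []
for epoch in range(10):
    lrs.append(lr_scheduler.get_last_lr()[0])  # LR used during this epoch
    optimizer.step()      # a real loop would run many batches here
    lr_scheduler.step()   # called once per epoch, as in the training loop

for epoch, lr in enumerate(lrs, start=1):
    print(f"epoch {epoch:2d}: lr = {lr:.0e}")
```

With 10 epochs and `step_size=3`, the learning rate drops three times, so the final epoch trains at 5e-6. If that is too small for your run, a larger `step_size` spreads the decay out.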

```python
# --- Model Training ---

# 1. Load a pre-trained model
num_classes = len(VOC_CLASSES) + 1  # +1 for background class
model = torchvision.models.detection.fasterrcnn_resnet50_fpn(weights='DEFAULT')

# Get the number of input features for the classifier
in_features = model.roi_heads.box_predictor.cls_score.in_features

# 2. Replace the pre-trained head with a new one
model.roi_heads.box_predictor = torchvision.models.detection.faster_rcnn.FastRCNNPredictor(in_features, num_classes)

# Move model to the correct device
device = torch.device('cuda') if torch.cuda.is_available() else torch.device('cpu')
model.to(device)

# 3. Optimizer and Scheduler
params = [p for p in model.parameters() if p.requires_grad]
optimizer = torch.optim.SGD(params, lr=0.005, momentum=0.9, weight_decay=0.0005)
lr_scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=3, gamma=0.1)

# 4. Training loop
num_epochs = 10
for epoch in range(num_epochs):
    model.train()
    epoch_loss = 0
    for i, (images, targets) in enumerate(dataloader):
        images = list(image.to(device) for image in images)
        targets = [{k: v.to(device) for k, v in t.items()} for t in targets]

        # Forward pass
        loss_dict = model(images, targets)
        losses = sum(loss for loss in loss_dict.values())

        # Backward pass and optimize
        optimizer.zero_grad()
        losses.backward()
        optimizer.step()

        epoch_loss += losses.item()
        if i % 50 == 0:
            print(f"Epoch [{epoch+1}/{num_epochs}], Step [{i}/{len(dataloader)}], Loss: {losses.item():.4f}")

    # Update learning rate
    lr_scheduler.step()
    print(f"--- Epoch [{epoch+1}/{num_epochs}] completed. Average Loss: {epoch_loss/len(dataloader):.4f} ---")

# Save the trained model
torch.save(model.state_dict(), 'fasterrcnn_voc.pth')
print("Training complete and model saved.")
```

Step 4: Inference on a New Image

Now, let's use our trained model to detect objects in a new image.

```python
# --- Inference ---

# Rebuild the same architecture (num_classes = 21, as in Step 3) and load the saved weights,
# so this step doesn't depend on the training-time `model` object
model = torchvision.models.detection.fasterrcnn_resnet50_fpn(weights=None, weights_backbone=None, num_classes=num_classes)
model.load_state_dict(torch.load('fasterrcnn_voc.pth', map_location=device))
model.to(device)
model.eval()  # Set model to evaluation mode

# Define a transform for inference (no augmentation)
inference_transform = transforms.Compose([
    transforms.ToTensor()
])

# Load a new image (you can use any image).
# 000001.jpg belongs to the VOC2007 test split, which is a separate download from the
# trainval archive used above; if you only have trainval, point this at any other image.
test_image_path = 'data/voc/VOCdevkit/VOC2007/JPEGImages/000001.jpg'
image = Image.open(test_image_path).convert("RGB")
image_tensor = inference_transform(image).to(device)

# Perform inference
with torch.no_grad():
    predictions = model([image_tensor])

# Process the predictions
# The output is a list of dictionaries, one for each input image
pred = predictions[0]

# Filter out low-confidence predictions
score_threshold = 0.7
keep = pred['scores'] > score_threshold
boxes = pred['boxes'][keep].cpu().numpy()
labels = pred['labels'][keep].cpu().numpy()
scores = pred['scores'][keep].cpu().numpy()

# Visualize the results
import matplotlib.pyplot as plt
import matplotlib.patches as patches

fig, ax = plt.subplots(1, figsize=(12, 9))
ax.imshow(image)

for box, label, score in zip(boxes, labels, scores):
    xmin, ymin, xmax, ymax = box
    width = xmax - xmin
    height = ymax - ymin

    # Create a Rectangle patch
    rect = patches.Rectangle((xmin, ymin), width, height, linewidth=2, edgecolor='r', facecolor='none')
    ax.add_patch(rect)

    # Add label and score text
    label_name = VOC_ID_TO_CLASS[int(label)]
    ax.text(xmin, ymin - 10, f'{label_name}: {score:.2f}', color='white', fontsize=12,
            bbox=dict(facecolor='red', alpha=0.5))

plt.title("Faster R-CNN Predictions")
plt.axis('off')
plt.show()
```

Key Parameters and Customization

- `weights`: Selects the pre-trained weights to load when building the model (this keyword exists in torchvision 0.13 and later; older releases used `pretrained=True`):
  - `'DEFAULT'`: the best available COCO pre-trained weights.
  - `FasterRCNN_ResNet50_FPN_Weights.DEFAULT`: the same weights, passed as the explicit enum from `torchvision.models.detection`.
  - `None`: no pre-trained detection weights. The backbone still loads ImageNet weights unless you also pass `weights_backbone=None`.

- `num_classes`: Always remember to set this to your number of classes + 1 for the background.

- Backbone: You can easily swap the backbone. For example, to use a MobileNetV3:

```python
backbone = torchvision.models.mobilenet_v3_large(weights='MobileNet_V3_Large_Weights.DEFAULT').features
# ... and then construct the Faster R-CNN with this backbone
```

- Inference Threshold: The `score_threshold` in the inference step is crucial. It filters out weak detections, reducing false positives. You can also adjust the Non-Maximum Suppression (NMS) IoU threshold, `box_nms_thresh` when building the model (default 0.5), to control how overlapping boxes are handled.

When to Use Faster R-CNN vs. Other Models

| Feature | Faster R-CNN (Two-Stage) | YOLO / SSD (Single-Stage) |
|---|---|---|
| Accuracy | Typically higher than classic single-stage detectors, especially for small and medium objects. | Good, but classic versions are generally less accurate than two-stage models. |
| Speed | Slower, because each image passes through two stages. | Much faster, ideal for real-time applications (e.g., video, self-driving cars). |
| Use Case | High-accuracy detection where speed is not the primary concern (e.g., medical imaging, satellite imagery, detailed analysis). | Real-time applications, object counting in videos, mobile applications. |

Conclusion: torchvision.models.detection.fasterrcnn_resnet50_fpn is an excellent, ready-to-use tool for high-accuracy object detection. Fine-tuning it on your own dataset is straightforward with PyTorch's ecosystem, making it a powerful choice for many computer vision projects.