The term "Python datasource" is broad, but it generally refers to any source of data that you can connect to, read from, or write to using Python. This can range from simple files on your local machine to massive, cloud-based databases.

Here’s a comprehensive breakdown of Python datasources, categorized from simple to complex.

File-Based Datasources

These are the most common and fundamental datasources. Python's standard library makes them incredibly easy to work with.

Text Files (.txt, .csv, .json, etc.)

The built-in open() function is the gateway to all file operations.
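As a minimal sketch of that idea, the snippet below writes two lines to a text file and reads them back with `open()`. The file name `notes.txt` and the temporary directory are placeholders chosen for illustration only.

```python
import os
import tempfile

# Hypothetical location: a throwaway directory, so the example cleans up after itself.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "notes.txt")

    # Mode 'w' creates (or truncates) the file; the with-block closes it on exit.
    with open(path, mode="w", encoding="utf-8") as file:
        file.write("first line\n")
        file.write("second line\n")

    # Mode 'r' opens for reading; iterating over the file yields one line at a time.
    with open(path, mode="r", encoding="utf-8") as file:
        lines = [line.rstrip("\n") for line in file]

print(lines)
```

Passing `encoding` explicitly is a design choice here: it avoids depending on the platform's default text encoding.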

- **CSV (Comma-Separated Values):** Best for tabular data.

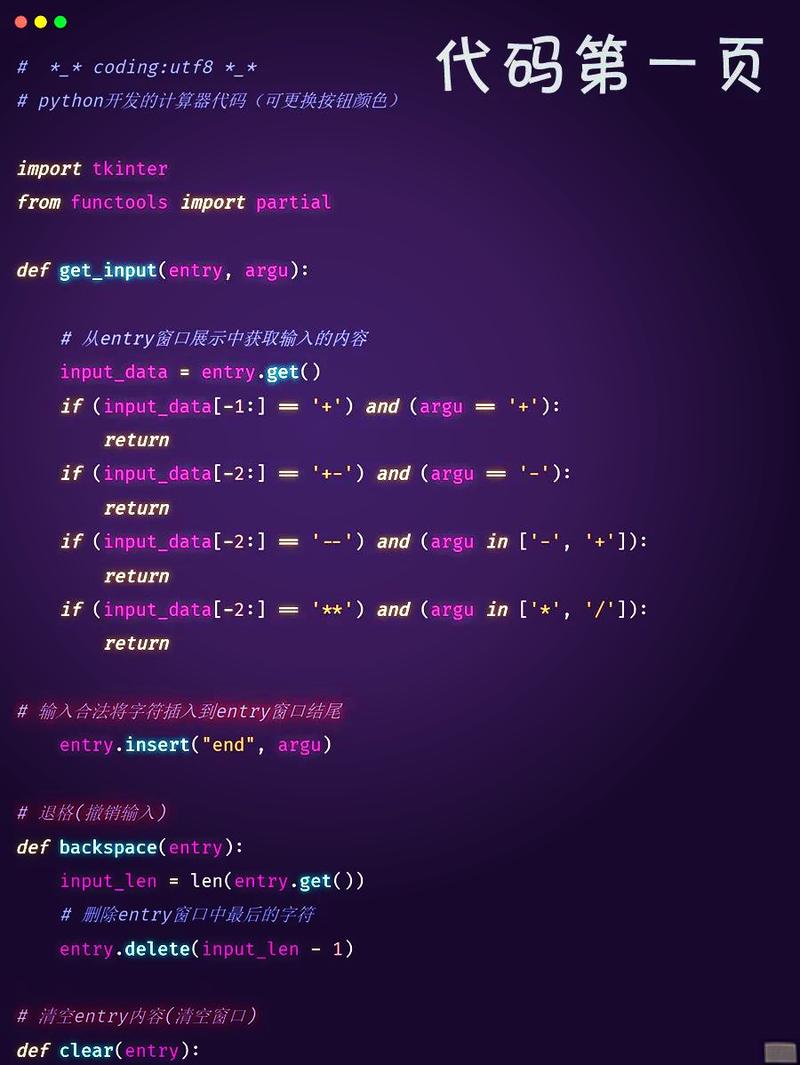

  - **Manual Parsing:** Using the `csv` module.

    ```python
    import csv

    # newline='' lets the csv module handle line endings itself
    with open('data.csv', mode='r', newline='') as file:
        csv_reader = csv.DictReader(file)
        for row in csv_reader:
            print(f"Name: {row['name']}, Age: {row['age']}")
    ```

  - **Pandas (Recommended):** The de facto standard for data analysis in Python.

    ```python
    import pandas as pd

    df = pd.read_csv('data.csv')
    print(df.head())
    ```

- **JSON (JavaScript Object Notation):** Best for structured, nested data.

  - **Manual Parsing:** Using the `json` module.

    ```python
    import json

    with open('data.json', 'r') as file:
        data = json.load(file)
        for user in data['users']:
            print(user['username'])
    ```

  - **Pandas:** Can also read JSON directly.

    ```python
    import pandas as pd

    df = pd.read_json('data.json')
    ```

- **Excel (`.xlsx`, `.xls`):** Requires an external library: `openpyxl` for `.xlsx` files, or `xlrd` for legacy `.xls` files.

  - **Pandas (Recommended):**

    ```python
    import pandas as pd

    # Read from a specific sheet
    df = pd.read_excel('data.xlsx', sheet_name='Sheet1')
    print(df)
    ```
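The CSV and JSON readers in this section assume the files already exist. As a rough sketch of the write side, the example below saves the same records in both formats with the standard library and reads them back. The records, field names, and file paths are made up for illustration.

```python
import csv
import json
import os
import tempfile

# Hypothetical sample records, not taken from any real dataset.
people = [
    {"name": "Alice", "age": 30},
    {"name": "Bob", "age": 25},
]

with tempfile.TemporaryDirectory() as tmp_dir:
    csv_path = os.path.join(tmp_dir, "data.csv")
    json_path = os.path.join(tmp_dir, "data.json")

    # newline='' lets the csv module control line endings itself.
    with open(csv_path, mode="w", newline="", encoding="utf-8") as file:
        writer = csv.DictWriter(file, fieldnames=["name", "age"])
        writer.writeheader()
        writer.writerows(people)

    # JSON keeps nesting and basic types; CSV stores every value as text.
    with open(json_path, mode="w", encoding="utf-8") as file:
        json.dump({"users": people}, file, indent=2)

    with open(csv_path, mode="r", newline="", encoding="utf-8") as file:
        csv_rows = list(csv.DictReader(file))

    with open(json_path, mode="r", encoding="utf-8") as file:
        json_users = json.load(file)["users"]

print(csv_rows[0])    # the age comes back as a string from CSV
print(json_users[0])  # the age comes back as an integer from JSON
```

The difference in the two printed rows is the practical reason to prefer JSON for typed or nested data and CSV for flat tables.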

Other File Types

- **Parquet / Feather:** Modern, columnar storage formats that are highly efficient for large datasets. Use the `pyarrow` library for either format, or `fastparquet` for Parquet.
- **HDF5:** A format for storing large amounts of numerical data. Use the `h5py` library.

Database Datasources

Use a database for structured data that needs to be queried, updated, and managed concurrently.

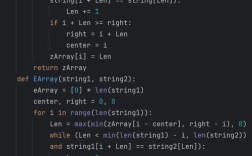

SQL Databases (Relational)

Each of these requires a database-specific driver. A toolkit like SQLAlchemy can sit on top of the driver to provide one interface across databases.

- **SQLite:** A serverless, file-based database. Python's standard library includes the `sqlite3` module for it, which makes it a good fit for small projects and local development.

  ```python
  import sqlite3

  # Connect to a database (it will be created if it doesn't exist)
  conn = sqlite3.connect('my_database.db')
  cursor = conn.cursor()

  # Create a table
  cursor.execute('''CREATE TABLE IF NOT EXISTS users
                    (id INTEGER PRIMARY KEY, name TEXT, age INTEGER)''')

  # Insert data
  cursor.execute("INSERT INTO users (name, age) VALUES (?, ?)", ('Alice', 30))
  conn.commit()

  # Query data
  cursor.execute("SELECT * FROM users")
  rows = cursor.fetchall()
  for row in rows:
      print(row)

  conn.close()
  ```

- **PostgreSQL / MySQL / SQL Server:** These require external drivers like `psycopg2` (PostgreSQL), `mysql-connector-python` (MySQL), or `pyodbc` (SQL Server).

- **SQLAlchemy (Highly Recommended):** A SQL toolkit and Object-Relational Mapper (ORM) that provides a consistent interface to many different SQL databases. It lets you build queries in Python instead of writing raw SQL strings.

  ```python
  from sqlalchemy import create_engine, MetaData, Table, select

  # Create an engine
  engine = create_engine('sqlite:///my_database.db')

  # Reflect the existing table
  metadata = MetaData()
  users = Table('users', metadata, autoload_with=engine)

  # Query using SQLAlchemy's expression language
  with engine.connect() as connection:
      stmt = select(users)
      result = connection.execute(stmt)
      for row in result:
          print(row)
  ```
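For quick experiments with the SQLite option, one possible variation is sketched below: an in-memory database, rows addressed by column name, and a filtered query with a bound parameter. The table contents and the `min_age` threshold are illustrative assumptions.

```python
import sqlite3

# ':memory:' creates a throwaway database that lives only as long as the connection.
conn = sqlite3.connect(":memory:")
conn.row_factory = sqlite3.Row  # rows can then be read by column name

conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, age INTEGER)")

# Using the connection as a context manager commits on success and rolls back on error.
with conn:
    conn.executemany(
        "INSERT INTO users (name, age) VALUES (?, ?)",
        [("Alice", 30), ("Bob", 25), ("Carol", 41)],
    )

# The ? placeholder keeps the value out of the SQL string itself.
min_age = 28  # hypothetical threshold
rows = conn.execute(
    "SELECT name, age FROM users WHERE age > ? ORDER BY age", (min_age,)
).fetchall()
older_users = [(row["name"], row["age"]) for row in rows]

conn.close()
print(older_users)
```

Note that `with conn:` manages the transaction only; the connection still has to be closed explicitly.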

NoSQL Databases

These are used for unstructured or semi-structured data.

- **MongoDB (Document Store):** Stores data in JSON-like documents. Use the `pymongo` library.

  ```python
  from pymongo import MongoClient

  # Connect to MongoDB
  client = MongoClient('mongodb://localhost:27017/')
  db = client['mydatabase']
  collection = db['users']

  # Insert a document
  user_data = {"name": "Bob", "age": 25, "city": "New York"}
  collection.insert_one(user_data)

  # Query documents
  for user in collection.find({"age": {"$gt": 20}}):
      print(user)
  ```

- **Redis (Key-Value Store):** An in-memory data structure store. Use the `redis` library.

  ```python
  import redis

  r = redis.Redis(host='localhost', port=6379, db=0)
  r.set('language', 'Python')
  print(r.get('language'))  # Output: b'Python'
  ```

Web & API Datasources

These datasources are accessed over the internet.

RESTful APIs

The third-party `requests` library is the most widely used tool for making HTTP requests.

```python
import requests

# Make a GET request to a public API
response = requests.get('https://jsonplaceholder.typicode.com/posts/1')

# Check if the request was successful
if response.status_code == 200:
    # Parse the JSON response
    data = response.json()
    print(f"Post Title: {data['title']}")
    print(f"Post Body: {data['body']}")
else:
    print(f"Error: {response.status_code}")
```

Web Scraping

Use web scraping when you need to extract data from websites that don't provide an API. BeautifulSoup is well suited to parsing HTML.

```python
import requests
from bs4 import BeautifulSoup

url = 'http://quotes.toscrape.com/'
response = requests.get(url)

if response.status_code == 200:
    soup = BeautifulSoup(response.text, 'html.parser')

    # Find all quote elements
    for quote in soup.find_all('div', class_='quote'):
        text = quote.find('span', class_='text').text
        author = quote.find('small', class_='author').text
        print(f'"{text}" - {author}')
```
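To try the same extraction logic without a network connection or extra packages, the sketch below swaps BeautifulSoup for the standard library's `html.parser` and runs it on an inline HTML string. The HTML fragment, the quote text, and the `QuoteParser` class are invented for illustration, and the parser handles only this simple shape of markup.

```python
from html.parser import HTMLParser

# Hypothetical HTML fragment using the same element and class names as the scraper.
SAMPLE_HTML = """
<div class="quote">
  <span class="text">First sample quote.</span>
  <small class="author">Author One</small>
</div>
<div class="quote">
  <span class="text">Second sample quote.</span>
  <small class="author">Author Two</small>
</div>
"""


class QuoteParser(HTMLParser):
    """Collects (text, author) pairs from span.text and small.author elements."""

    def __init__(self):
        super().__init__()
        self.quotes = []
        self._field = None   # which field the parser is currently inside
        self._current = {}   # fields gathered for the quote being parsed

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class == "text":
            self._field = "text"
        elif tag == "small" and css_class == "author":
            self._field = "author"

    def handle_data(self, data):
        # Text outside the two tracked elements is ignored.
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag in ("span", "small"):
            self._field = None
        elif tag == "div" and self._current:
            # Closing the quote's div means both fields have been seen.
            self.quotes.append((self._current["text"], self._current["author"]))
            self._current = {}


parser = QuoteParser()
parser.feed(SAMPLE_HTML)
print(parser.quotes)
```

For real pages, BeautifulSoup remains the more forgiving choice, since hand-written event handlers like these break easily when the markup changes.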

Big Data & Cloud Datasources

These datasources handle datasets that are too large to fit in a single machine's memory.

Cloud Storage

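- **Amazon S3:** Use the `boto3` library.

  ```python
  import boto3

  s3 = boto3.client('s3')

  # List objects in a bucket (a single call returns at most 1,000 keys)
  objects = s3.list_objects_v2(Bucket='my-bucket-name')

  # 'Contents' is absent when the bucket is empty, so fall back to an empty list
  for obj in objects.get('Contents', []):
      print(obj['Key'])
  ```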

- **Google Cloud Storage:** Use the `google-cloud-storage` library.
- **Azure Blob Storage:** Use the `azure-storage-blob` library.

Big Data Processing Frameworks

- **Apache Spark:** The `pyspark` library allows you to process massive datasets across a cluster.

  ```python
  from pyspark.sql import SparkSession

  spark = SparkSession.builder.appName("Python Spark SQL basic example").getOrCreate()

  # Read a large CSV file from HDFS or S3
  df = spark.read.csv("hdfs://path/to/large_data.csv", header=True, inferSchema=True)
  df.show()
  ```

In-Memory & Streaming Datasources

In-Memory Data Structures

Sometimes, your datasource is just a Python object.

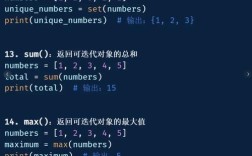

- **Lists of Dictionaries:** A simple way to represent tabular data.

  ```python
  data = [
      {'id': 1, 'product': 'Laptop', 'price': 1200},
      {'id': 2, 'product': 'Mouse', 'price': 25}
  ]
  ```

- **Pandas DataFrame:** The most common in-memory datasource for data analysis in Python.
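A list of dictionaries can be queried with plain Python before reaching for a DataFrame. The sketch below shows filtering, sorting, and aggregating; the third row and the price threshold are added purely for illustration.

```python
from operator import itemgetter

# Same shape as the list of dictionaries above, plus one hypothetical extra row.
data = [
    {"id": 1, "product": "Laptop", "price": 1200},
    {"id": 2, "product": "Mouse", "price": 25},
    {"id": 3, "product": "Monitor", "price": 300},
]

# Filter: keep rows that match a condition (like a SQL WHERE clause).
expensive = [row["product"] for row in data if row["price"] >= 300]

# Sort: order rows by a column (like ORDER BY price).
cheapest_first = sorted(data, key=itemgetter("price"))

# Aggregate: reduce a column to a single value (like SUM(price)).
total_price = sum(row["price"] for row in data)

print(expensive)
print(cheapest_first[0]["product"])
print(total_price)
```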

Streaming Data

Streaming datasources deliver real-time data from sources like message queues.

- **Kafka:** Use the `confluent-kafka` library.
- **RabbitMQ:** Use the `pika` library.
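The broker libraries need a running server, so the sketch below uses the standard library's `queue.Queue` as a stand-in to show the general shape of a consume loop: messages are handled one at a time as they arrive. It is not Kafka or RabbitMQ code, and the event format and `STOP` sentinel are assumptions made for this example.

```python
import queue
import threading

# Stand-in for a message broker: a thread-safe, in-process queue.
messages = queue.Queue()
STOP = object()  # sentinel that tells the consumer the stream has ended


def produce(events):
    """Push events onto the queue one at a time, then signal the end."""
    for event in events:
        messages.put(event)
    messages.put(STOP)


def consume():
    """Handle each message as it arrives instead of loading everything first."""
    running_total = 0
    while True:
        message = messages.get()  # blocks until a message is available
        if message is STOP:
            break
        running_total += message["amount"]
    return running_total


# Hypothetical events; a real stream would come from the broker's client library.
events = [{"amount": 10}, {"amount": 5}, {"amount": 7}]
producer = threading.Thread(target=produce, args=(events,))
producer.start()
total = consume()
producer.join()
print(total)
```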

Summary Table

| Category | Common Examples | Key Python Libraries |
|---|---|---|
| File-Based | CSV, JSON, Excel, Parquet | csv, json, pandas, openpyxl, pyarrow |
| SQL Databases | SQLite, PostgreSQL, MySQL | sqlite3, psycopg2, SQLAlchemy |
| NoSQL Databases | MongoDB, Redis | pymongo, redis |
| Web APIs | REST APIs, Web Scraping | requests, beautifulsoup4, selenium |
| Cloud Storage | Amazon S3, Google Cloud Storage | boto3, google-cloud-storage |
| Big Data | Apache Spark | pyspark |
| Streaming | Kafka, RabbitMQ | confluent-kafka, pika |

Best Practices

- **Use Context Managers (`with` statement):** Always use `with open(...)` for file operations to ensure files are closed automatically.
- **Choose the Right Tool:** For simple analysis, `pandas` is often sufficient. For complex applications or multiple database types, an ORM like `SQLAlchemy` is a better choice.
- **Handle Errors:** Always wrap network requests and database connections in `try...except` blocks to handle potential failures gracefully.
- **Environment Variables:** Never hardcode credentials (API keys, database passwords). Load them from environment variables, for example with a library like `python-dotenv`.
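The last two practices can be sketched with the standard library alone. In the example below, the variable names `DB_PASSWORD` and `DB_HOST` and both helper functions are hypothetical, and a plain dictionary stands in for the real environment so the snippet has no side effects.

```python
import os
import sqlite3


def load_db_settings(environ=os.environ):
    """Read connection settings from the environment instead of source code."""
    password = environ.get("DB_PASSWORD")  # hypothetical variable name
    if password is None:
        raise RuntimeError("DB_PASSWORD is not set")
    return {
        "host": environ.get("DB_HOST", "localhost"),  # default when unset
        "password": password,
    }


def count_users(db_path):
    """Return the number of rows in users, or None if the query fails."""
    try:
        conn = sqlite3.connect(db_path)
        try:
            return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
        finally:
            conn.close()  # runs whether or not the query succeeded
    except sqlite3.Error as error:
        print(f"Database error: {error}")
        return None


# A plain dict stands in for os.environ here.
settings = load_db_settings({"DB_PASSWORD": "example-only"})

# The in-memory database has no users table, so the query fails and is handled.
result = count_users(":memory:")
print(settings["host"], result)
```

Catching `sqlite3.Error` rather than a bare `Exception` keeps unrelated bugs visible while still handling database failures.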