Of course! Here's a comprehensive guide on how to use Principal Component Analysis (PCA) with OpenCV in Python.

We'll cover:

- What is PCA? (A brief, intuitive explanation)

- Why use PCA in OpenCV? (The key use case: Dimensionality Reduction)

- The OpenCV PCA Functions: A look at the key functions and their parameters.

- Step-by-Step Code Example: A complete, runnable example with a dataset.

- Visualization: How to see the results of the PCA.

- Reconstruction: How to reconstruct the original data from the principal components.

What is PCA? (Intuitive Explanation)

Imagine you have a dataset with many features (columns). For example, you might have 100 different measurements for each person in a dataset. These features are often correlated. PCA's goal is to find a new set of features, called principal components, each of which is a linear combination of the original features.

These new components have two amazing properties:

- They are uncorrelated with each other.

- They are sorted by importance. The first principal component captures the most variance (the most information) in the data. The second captures the next most, and so on.

This allows you to reduce the dimensionality of your data. You can often keep only the first few components (for example, 10-20 out of 100) and still retain a large share of the original variance. This is useful for visualization, faster processing, and combating the "curse of dimensionality."
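The two properties above can be checked on a toy dataset. The following is a minimal sketch using NumPy only, not OpenCV; the helper name `pca_numpy` and the synthetic data are assumptions made for illustration. It computes the components from the covariance matrix and shows that the transformed features are uncorrelated and sorted by variance.

```python
import numpy as np

def pca_numpy(data, k):
    """Return (mean, components, variances) for the top-k principal components.

    data has one sample per row and one feature per column.
    """
    mean = data.mean(axis=0, keepdims=True)
    centered = data - mean
    # Covariance matrix of the features (np.cov divides by n - 1 by default).
    cov = np.cov(centered, rowvar=False)
    # eigh is intended for symmetric matrices; eigenvalues come back in ascending order.
    eigenvalues, eigenvectors = np.linalg.eigh(cov)
    order = np.argsort(eigenvalues)[::-1][:k]
    components = eigenvectors[:, order].T  # one component per row
    return mean, components, eigenvalues[order]

rng = np.random.default_rng(0)
# Hypothetical toy data: 500 samples, 3 features; the second is strongly
# correlated with the first.
x = rng.normal(size=500)
data = np.column_stack([x, 2.0 * x + 0.1 * rng.normal(size=500), rng.normal(size=500)])

mean, components, variances = pca_numpy(data, k=3)
scores = (data - mean) @ components.T  # the data expressed in the new features

print("variance per component:", np.round(variances, 3))
print("covariance of the new features:")
print(np.round(np.cov(scores, rowvar=False), 3))
```

The printed covariance matrix should be diagonal up to rounding, with the diagonal entries in decreasing order.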

Why Use PCA in OpenCV?

The primary reason to use PCA in OpenCV is for Dimensionality Reduction on image data.

Images are high-dimensional. A small 100x100 grayscale image has 10,000 pixels (features). A 3-channel (RGB) 100x100 image has 30,000 features. This is a lot!

PCA helps you:

- Compress Data: Represent each image with a much smaller number of values (the coefficients of the principal components).

- Speed Up Processing: Machine learning algorithms run much faster on lower-dimensional data.

- Improve Performance: By removing noise and less important features, you can sometimes get better results from classifiers.

- Visualize Data: You can reduce 30,000 features down to 2 or 3 so you can plot them on a 2D or 3D graph.
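To make the feature counts concrete, here is a small sketch of how images become rows of a data matrix. The random arrays are hypothetical stand-ins for real images; only NumPy is used.

```python
import numpy as np

# Hypothetical stand-in for a small collection: 5 grayscale images of 100x100 pixels.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(5, 100, 100), dtype=np.uint8)

# Flatten each image into one row and convert to float32 for numerical work.
data = images.reshape(len(images), -1).astype(np.float32)
print(data.shape)  # (5, 10000)

# A 3-channel image of the same size has three times as many features.
color_image = rng.integers(0, 256, size=(100, 100, 3), dtype=np.uint8)
color_row = color_image.reshape(1, -1)
print(color_row.shape)  # (1, 30000)

# Reshaping a row restores the original image exactly.
restored = data[0].reshape(100, 100).astype(np.uint8)
print(np.array_equal(restored, images[0]))  # True
```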

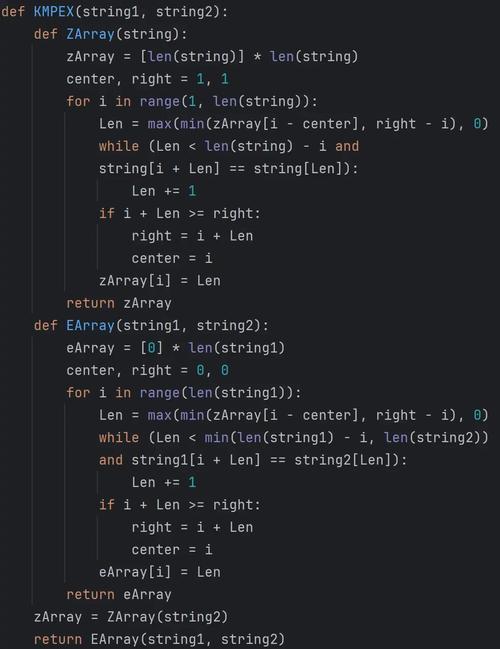

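The OpenCV PCA Functions

In Python, OpenCV offers PCA through a small set of functions: cv2.PCACompute computes the mean and the principal components, cv2.PCACompute2 additionally returns the eigenvalues, cv2.PCAProject maps data into the component space, and cv2.PCABackProject maps it back. The most common one is cv2.PCACompute.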

Key Parameters for cv2.PCACompute

mean, eigenvectors = cv2.PCACompute(data, mean, maxComponents)

- data: The input data. This is crucial: OpenCV expects the data to be a single NumPy array where each row is a sample and each column is a feature. For images, this means you must "flatten" them into 1D vectors.

- mean: The initial mean of the data. You can pass None, and OpenCV will calculate it for you. The calculated mean will be returned, which is useful for later reconstruction.

- maxComponents: The maximum number of principal components to retain. If you pass 0, it will compute all components. If you pass a number k, it will return the top k components.

Key Return Values

- mean: The mean vector of the input data.

- eigenvectors: A matrix where each row is a principal component (eigenvector). The first row is the most significant component, the second is the next most, and so on.

Step-by-Step Code Example: Face Images

Let's apply PCA to a set of face images. We'll use the Olivetti Faces dataset, which scikit-learn can download for you the first time you request it.

Goal:

- Load the face images.

- Flatten each image into a 1D vector.

- Stack all vectors into a single data matrix.

- Apply PCA to find the principal components (often called "eigenfaces").

- Visualize the top "eigenfaces".

import cv2
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import fetch_olivetti_faces

# --- 1. Load and Prepare Data ---
# Load the Olivetti Faces dataset
faces, _ = fetch_olivetti_faces(return_X_y=True, shuffle=True, random_state=42)

# The dataset is already in the correct format:
# - Each row is a flattened image (64x64 = 4096 features)
# - There are 400 images (samples)
print(f"Original data shape: {faces.shape}")  # Should be (400, 4096)

# --- 2. Apply PCA ---
# We want to keep 150 principal components
num_components = 150

# Calculate the mean and eigenvectors (principal components)
# The mean is the "average face"
mean, eigenvectors = cv2.PCACompute(faces, mean=None, maxComponents=num_components)
print(f"Mean shape (average face): {mean.shape}")  # (1, 4096)
print(f"Eigenvectors shape (eigenfaces): {eigenvectors.shape}")  # (150, 4096)

# --- 3. Visualize the Results ---
# Helper function to display images
def show_images(images, titles=None, rows=1, cols=1, figsize=(10, 7)):
    # squeeze=False always returns a 2D array of axes, even for a single plot,
    # so the same code works for 1x1 and 4x4 grids
    fig, axes = plt.subplots(rows, cols, figsize=figsize, squeeze=False)
    for i, (img, ax) in enumerate(zip(images, axes.ravel())):
        # Reshape the 1D vector back to a 2D image (64x64)
        img_2d = img.reshape((64, 64))
        ax.imshow(img_2d, cmap='gray')
        if titles:
            ax.set_title(titles[i])
        ax.axis('off')
    plt.tight_layout()
    plt.show()

# a) Show the Average Face
print("\nDisplaying the Average Face...")
show_images(mean, titles=["Average Face"], rows=1, cols=1)

# b) Show the Top Eigenfaces
print("\nDisplaying the Top 16 Eigenfaces...")
# The eigenvectors are rows, so we take the first 16
top_eigenfaces = eigenvectors[:16]
show_images(top_eigenfaces, titles=[f"Eigenface {i+1}" for i in range(16)], rows=4, cols=4)

What the Output Shows:

- Average Face: This is the mean of all the input faces. It looks like a generic, blurry face. It represents the "average" features common to all faces in the dataset.

- Eigenfaces: These are the principal components. The first few eigenfaces capture the most significant variations in the dataset. You might see eigenfaces that highlight differences in lighting, face orientation, or the presence of glasses. They don't look like faces in a conventional sense, but rather the "building blocks" of all the faces in the dataset.

Dimensionality Reduction and Reconstruction

Now, let's see how to use these components to transform a face image into a lower-dimensional space and then reconstruct it.

# --- 4. Dimensionality Reduction and Reconstruction ---
# Let's pick one face to transform and reconstruct
test_face = faces[0]
original_face_2d = test_face.reshape((64, 64))

# Project the test face onto the principal component space
# This reduces its dimensionality from 4096 to 150
# Note: PCAProject expects a 2D array with one sample per row,
# plus the mean and eigenvectors returned by PCACompute
coefficients = cv2.PCAProject(test_face.reshape(1, -1), mean, eigenvectors)
print(f"\nOriginal face dimensions: {test_face.shape}")
print(f"Reduced face dimensions (coefficients): {coefficients.shape}")

# Reconstruct the face from the lower-dimensional coefficients
reconstructed_face = cv2.PCABackProject(coefficients, mean, eigenvectors)

# --- 5. Visualize Original vs. Reconstructed ---
print("\nDisplaying Original vs. Reconstructed Face...")
show_images(
    [original_face_2d, reconstructed_face.reshape((64, 64))],
    titles=["Original Face", f"Reconstructed Face ({num_components} components)"],
    rows=1, cols=2, figsize=(8, 4)
)

What This Shows:

- Original Face: The input image.

- Reconstructed Face: The image after being compressed to 150 numbers and then expanded back to its original size.

- You will notice that the reconstructed face is slightly blurrier than the original. This is because we discarded the less important principal components (everything after the first 150). If you increase num_components, the reconstruction will be more accurate, but the compression ratio will be lower.
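The trade-off between the number of components and reconstruction accuracy can be seen with two matrix products. The sketch below uses NumPy only and synthetic data; the helper names `project` and `back_project` are hypothetical, and the components are stored one per row, matching the layout described for cv2.PCACompute.

```python
import numpy as np

def project(samples, mean, components):
    """Map samples (one per row) to coefficients in the component space."""
    return (samples - mean) @ components.T

def back_project(coefficients, mean, components):
    """Map coefficients back to the original feature space."""
    return coefficients @ components + mean

rng = np.random.default_rng(0)
# Hypothetical data: 200 samples, 20 features, with decreasing spread per feature.
data = rng.normal(size=(200, 20)) * np.linspace(5.0, 0.1, 20)

mean = data.mean(axis=0, keepdims=True)
# The rows of vt from the SVD of the centered data are the principal components,
# already sorted from most to least variance.
_, _, vt = np.linalg.svd(data - mean, full_matrices=False)

errors = {}
for k in (2, 5, 10, 20):
    components = vt[:k]
    restored = back_project(project(data, mean, components), mean, components)
    errors[k] = float(np.mean((data - restored) ** 2))
    print(f"{k:2d} components -> mean squared error {errors[k]:.6f}")
```

The error shrinks as more components are kept and is zero, up to floating-point rounding, when all 20 are used.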

Full Script with Visualization

Here is the complete, runnable script combining all the steps.

import cv2
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import fetch_olivetti_faces

# --- 1. Load and Prepare Data ---
print("Loading Olivetti Faces dataset...")
faces, _ = fetch_olivetti_faces(return_X_y=True, shuffle=True, random_state=42)
print(f"Data shape: {faces.shape}")  # (400, 4096)

# --- 2. Apply PCA ---
num_components = 150
print(f"\nApplying PCA to find {num_components} principal components...")
mean, eigenvectors = cv2.PCACompute(faces, mean=None, maxComponents=num_components)
print(f"Mean shape: {mean.shape}, Eigenvectors shape: {eigenvectors.shape}")

# --- 3. Visualize the Average Face and Eigenfaces ---
def show_images(images, titles=None, rows=1, cols=1, figsize=(10, 7)):
    # squeeze=False always returns a 2D array of axes, even for a single plot
    fig, axes = plt.subplots(rows, cols, figsize=figsize, squeeze=False)
    for i, (img, ax) in enumerate(zip(images, axes.ravel())):
        img_2d = img.reshape((64, 64))
        ax.imshow(img_2d, cmap='gray')
        if titles:
            ax.set_title(titles[i])
        ax.axis('off')
    plt.tight_layout()
    plt.show()

print("\nDisplaying the Average Face...")
show_images(mean, titles=["Average Face"], rows=1, cols=1)

print("\nDisplaying the Top 16 Eigenfaces...")
top_eigenfaces = eigenvectors[:16]
show_images(top_eigenfaces, titles=[f"Eigenface {i+1}" for i in range(16)], rows=4, cols=4)

# --- 4. Dimensionality Reduction and Reconstruction ---
print("\nPerforming dimensionality reduction and reconstruction...")
test_face = faces[0]
original_face_2d = test_face.reshape((64, 64))

# Project to the PCA space (PCAProject expects a 2D array, one sample per row)
coefficients = cv2.PCAProject(test_face.reshape(1, -1), mean, eigenvectors)
print(f"Original dimensions: {test_face.shape} -> Reduced dimensions: {coefficients.shape}")

# Reconstruct from the PCA space
reconstructed_face = cv2.PCABackProject(coefficients, mean, eigenvectors)

# --- 5. Visualize Original vs. Reconstructed ---
print("\nDisplaying Original vs. Reconstructed Face...")
show_images(
    [original_face_2d, reconstructed_face.reshape((64, 64))],
    titles=["Original Face", f"Reconstructed Face ({num_components} components)"],
    rows=1, cols=2, figsize=(8, 4)
)

# --- 6. Explained Variance ---
# To see how much information is retained.
# The variance captured by a component is the variance of the data projected onto it;
# the eigenvectors themselves are unit-length, so their norms carry no variance information.
all_coefficients = cv2.PCAProject(faces, mean, eigenvectors)
component_variance = np.var(all_coefficients, axis=0)
total_variance = np.sum(np.var(faces, axis=0))
variance_ratio = component_variance / total_variance
explained_variance = np.cumsum(variance_ratio)
print(f"\nTotal variance explained by {num_components} components: {explained_variance[-1]:.2%}")

plt.figure(figsize=(8, 5))
plt.plot(np.arange(1, num_components + 1), explained_variance)
plt.title('Cumulative Explained Variance by Principal Components')
plt.xlabel('Number of Principal Components')
plt.ylabel('Cumulative Explained Variance')
plt.grid(True)
plt.show()

This final plot is extremely useful. It shows you how much of the original data's information is captured as you add more principal components, helping you choose a good num_components value for your specific application.
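Reading a component count off the curve can also be done programmatically. This is a minimal sketch with a hypothetical helper, `components_for_variance`, and made-up per-component ratios; in practice you would pass the ratios computed from your own data.

```python
import numpy as np

def components_for_variance(variance_ratio, target):
    """Smallest number of leading components whose cumulative ratio reaches target."""
    cumulative = np.cumsum(variance_ratio)
    # searchsorted returns the first index where cumulative >= target.
    index = int(np.searchsorted(cumulative, target, side="left"))
    if index >= len(cumulative):
        raise ValueError("target is not reached by the available components")
    return index + 1

# Hypothetical per-component ratios, sorted from largest to smallest.
variance_ratio = np.array([0.42, 0.25, 0.14, 0.08, 0.05, 0.03, 0.03])

print(components_for_variance(variance_ratio, 0.80))  # 3
print(components_for_variance(variance_ratio, 0.95))  # 6
```

A target such as 0.95 is a common starting point, but the right threshold depends on how much reconstruction error your application can tolerate.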