Of course! Here's a comprehensive guide to using NSQ channels with Python, covering the core concepts, the official Python client, and a complete practical example.

What is an NSQ Channel? (The Core Concept)

First, it's crucial to understand the difference between a Topic and a Channel in NSQ.

- Topic: A logical category or "feed" of messages. For example,

user-signups,order-placed, orlog-messages. Producers publish messages to a topic. - Channel: A logical pipeline for consuming messages from a topic. This is the key to scaling and distributing work.

Think of it like this:

- A Topic is a television broadcast signal.

- A Channel is a specific TV channel (e.g., CNN, BBC, ESPN) that you "tune into" to watch that broadcast.

You can have multiple consumers (TVs) all subscribed to the same channel (e.g., CNN), and they will all receive the same broadcast (messages). However, if you want to distribute the work of processing messages, you create multiple channels.

The Golden Rule of NSQ Channels:

Each message in a topic is delivered to every channel that is subscribed to that topic.

But within a single channel, messages are delivered to only one consumer at a time. This is the foundation of NSQ's load balancing.

Why is this powerful?

- Parallelism & Load Balancing: If processing a single message is slow, you can create multiple consumers for the same channel. NSQ will automatically distribute the messages among them, allowing you to process messages in parallel.

- Separation of Concerns: You can have different "channels" for different purposes, even from the same topic.

channel="real-time-processing": For a fast, in-memory analytics engine.channel="persistent-storage": For a slower database writer.channel="reprocessing": For a batch job that re-processes old data.

All consumers on these channels will get a copy of every message from the topic, but they can process it independently.

The Official Python Client: nsqio

The most popular and well-maintained Python client for NSQ is nsqio. You can install it via pip:

pip install nsqio

This library provides two main classes for consuming:

Nsqd: A low-level connection to a singlensqdnode. Useful for simple scripts or when you know exactly which node to connect to.NsqLookupd: The recommended approach. It connects to one or morensqlookupdinstances to automatically discover whichnsqdnodes are publishing to a specific topic and handles failover and re-balancing of connections.

Practical Example: Producer and Consumer

Let's build a complete example. We'll need:

- An NSQ setup (using Docker is easiest).

- A Python producer to send messages.

- Two Python consumers subscribed to the same channel to demonstrate load balancing.

Step 1: Start NSQ with Docker

Open a terminal and run the following command. This starts nsqlookupd, nsqd, and nsqadmin (the web UI).

docker run --rm -p 4161:4161 -p 4160:4160 -p 4171:4171 nsqio/nsq /nsqlookupd --broadcast-address=localhost

4161:nsqlookupdHTTP port4160:nsqdTCP port (for producers)4171:nsqadminweb UI port

You can visit http://localhost:4171 to see the NSQ admin interface.

Step 2: The Producer (producer.py)

This script will connect to nsqd and send messages to a topic named my_topic.

# producer.py

import nsq

import time

import random

# --- Configuration ---

NSQD_TCP_ADDRESS = '127.0.0.1:4160'

TOPIC_NAME = 'my_topic'

MESSAGE_COUNT = 10

def main():

"""

Connects to nsqd and publishes messages to a topic.

"""

print(f"Connecting to NSQ at {NSQD_TCP_ADDRESS}...")

# The Nsqd class handles the connection and writing to the topic

writer = nsq.Nsqd(address=NSQD_TCP_ADDRESS)

try:

# Connect to the topic. It will be created if it doesn't exist.

# The `defer` parameter is for message priority (not needed here).

topic = writer.pub_topic(TOPIC_NAME)

print(f"Publishing {MESSAGE_COUNT} messages to topic '{TOPIC_NAME}'...")

for i in range(MESSAGE_COUNT):

# Create a message

message_text = f"This is message number {i}"

# Publish the message

# .publish() is non-blocking. It returns immediately.

topic.publish(message_text.encode('utf-8'))

print(f" -> Published: {message_text}")

# Simulate some delay

time.sleep(random.uniform(0.1, 0.5))

print("Finished publishing messages.")

except Exception as e:

print(f"An error occurred: {e}")

finally:

# It's good practice to close the connection

if 'topic' in locals():

topic.close()

if __name__ == '__main__':

main()

Step 3: The Consumers (consumer.py)

We will run two instances of this script. They will both connect to the same topic (my_topic) and the same channel (my_channel). NSQ will automatically load balance the messages between them.

# consumer.py

import nsq

import time

import signal

import sys

# --- Configuration ---

LOOKUPD_HTTP_ADDRESS = '127.0.0.1:4161'

TOPIC_NAME = 'my_topic'

CHANNEL_NAME = 'my_channel' # Both consumers will use the SAME channel name

# Global variable to allow for graceful shutdown

running = True

def handle_message(message):

"""

This is the callback function that processes each message.

"""

# The message body is a bytes object, so we decode it

print(f"[{message.attempts}] Received: {message.body.decode('utf-8')}")

# --- IMPORTANT ---

# You MUST call message.finish() to successfully acknowledge the message.

# If you don't, or if an exception occurs, NSQ will re-queue the message

# after the configured timeout.

# Simulate some work

time.sleep(1)

# If the processing is successful, finish the message

message.finish()

print(" -> Finished processing")

def signal_handler(sig, frame):

"""Handle Ctrl+C to shut down gracefully."""

global running

print("\nShutdown signal received. Stopping consumer...")

running = False

def main():

"""

Connects to nsqlookupd and starts consuming messages from a topic/channel.

"""

global running

# Register signal handler for graceful shutdown

signal.signal(signal.SIGINT, signal_handler)

print(f"Starting consumer for topic='{TOPIC_NAME}', channel='{CHANNEL_NAME}'...")

print(f"Connecting to nsqlookupd at {LOOKUPD_HTTP_ADDRESS}...")

# The NsqLookupd class is the recommended way to consume.

# It automatically discovers nsqd nodes and handles reconnections.

r = nsq.Reader(

message_handler=handle_message,

nsqd_addresses=[LOOKUPD_HTTP_ADDRESS],

lookupd_http_addresses=[LOOKUPD_HTTP_ADDRESS],

topic=TOPIC_NAME,

channel=CHANNEL_NAME,

# Other useful options:

# max_in_flight: Number of messages to process concurrently.

# max_backoff_duration: Max time to wait between retries.

# max_requeue_delay: Max time to wait before requeuing a failed message.

)

print("Consumer is running. Press Ctrl+C to stop.")

# The reader runs in its own thread. We just need to keep the main thread alive.

while running:

time.sleep(0.1)

# Cleanly stop the reader

r.stop()

print("Consumer stopped.")

if __name__ == '__main__':

main()

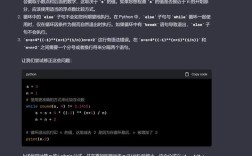

Step 4: Run the Example

-

Start NSQ (if you haven't already).

-

Open two separate terminal windows. In each one, run the consumer:

python consumer.py

You will see output like this in both windows, indicating they are connected and waiting for messages:

Starting consumer for topic='my_topic', channel='my_channel'... Connecting to nsqlookupd at 127.0.0.1:4161... Consumer is running. Press Ctrl+C to stop. -

Open a third terminal window and run the producer:

python producer.py

You will see it publishing messages.

-

Observe the output in the consumer terminals!

You will notice that the messages are being distributed between the two consumers. One consumer might get messages 0, 2, 4, 6, 8, while the other gets 1, 3, 5, 7, 9. This is the load balancing in action!

Terminal 1 (Consumer A):

[1] Received: This is message number 0 -> Finished processing [1] Received: This is message number 2 -> Finished processing ...Terminal 2 (Consumer B):

[1] Received: This is message number 1 -> Finished processing [1] Received: This is message number 3 -> Finished processing ...

This perfectly demonstrates how multiple consumers on the same channel share the workload of processing messages from a single topic.