Of course! Let's break down the Softmax Loss function, also known as Categorical Cross-Entropy Loss, in detail and then provide a complete, runnable Python implementation from scratch and using popular libraries like PyTorch and TensorFlow.

What is Softmax Loss?

The Softmax Loss is a combination of two key concepts:

- Softmax Function: It's an activation function used in the final layer of a neural network for multi-class classification. It takes a vector of raw scores (logits) from the network and converts them into a probability distribution. Each element in the output vector represents the probability that the input belongs to a particular class.

- Categorical Cross-Entropy Loss: This is a loss function that measures the difference between the true probability distribution (the one-hot encoded labels) and the predicted probability distribution (the output of the Softmax function). The goal of training is to minimize this difference.

Why use them together?

- Softmax makes the model's output interpretable as probabilities.

- Cross-Entropy penalizes the model according to the probability it assigns to the true class. When the model is confident and correct (a high probability for the true class), the loss is close to 0. When the model is uncertain, the loss is larger, and when it is confidently wrong, the loss grows without bound.

The Mathematical Formulation

Let's define the terms:

- $z$: A vector of raw scores (logits) from the last layer of the network, with length $C$ (number of classes).

- $a$: The output vector after applying the Softmax function, also of length $C$. $a_i$ is the predicted probability for class $i$.

- $y$: The true label, represented as a one-hot encoded vector. For a single example, $y_i = 1$ for the correct class and $y_i = 0$ for all others.

Step 1: The Softmax Function

The Softmax function for a single class $i$ is defined as:

$$ a_i = \text{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{C} e^{z_j}} $$

Where:

- $e^{z_i}$ is the exponential of the score for class $i$.

- The denominator is the sum of exponentials for all class scores. This ensures that all $a_i$ values sum to 1, making them a valid probability distribution.
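
As a quick numeric check of the formula, the sketch below applies it to a single logit vector. The values in `z` are made up for illustration and do not come from any model.

```python
import numpy as np

# Illustrative logits for C = 3 classes (made-up values)
z = np.array([2.0, 1.0, 0.1])

# a_i = exp(z_i) / sum_j exp(z_j)
exp_z = np.exp(z)
a = exp_z / exp_z.sum()

print(a)        # predicted probabilities, one per class
print(a.sum())  # sums to 1, up to floating-point rounding
```

The largest logit receives the largest probability, and every output is strictly positive.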

Step 2: The Categorical Cross-Entropy Loss

The loss for a single training example is calculated as:

$$ L = -\sum_{i=1}^{C} y_i \log(a_i) $$

Because the true label $y$ is one-hot encoded, this simplifies dramatically. Only the term where $y_i = 1$ (the correct class) contributes to the sum. So, the formula becomes:

$$ L = -\log(a_{\text{true}}) $$

Where $a_{\text{true}}$ is the predicted probability assigned to the correct class.

Intuition: If the model assigns a high probability (close to 1) to the correct class, $\log(a_{\text{true}})$ will be close to 0, and the loss $L$ will be close to 0. If the model assigns a low probability (close to 0) to the correct class, $\log(a_{\text{true}})$ will be a large negative number, and the loss $L$ will be a large positive number.
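
To make this intuition concrete, here is a small sketch that evaluates $L = -\log(a_{\text{true}})$ for a few hypothetical probabilities. The probability values are chosen only for illustration.

```python
import math

# Hypothetical probabilities assigned to the correct class
probabilities = [0.99, 0.9, 0.5, 0.1, 0.01]

# L = -log(a_true) for each of them
losses = [-math.log(a_true) for a_true in probabilities]

for a_true, loss in zip(probabilities, losses):
    print(f"a_true = {a_true:.2f} -> L = {loss:.4f}")
```

The loss rises slowly while the probability stays near 1 and steeply as it approaches 0.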

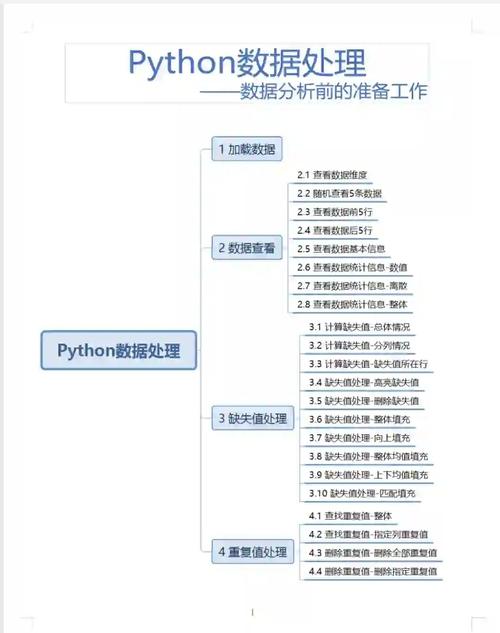

Python Implementation

Here are three ways to implement it:

- From Scratch (NumPy): Great for understanding the mechanics.

- PyTorch: The standard way in deep learning research.

- TensorFlow/Keras: Also very common, especially in production.

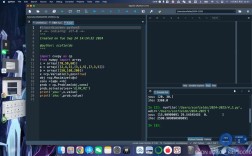

A. From Scratch with NumPy

This implementation follows the mathematical formulas directly.

import numpy as np

def softmax(x):
    """
    Computes the softmax for each row of the input x.

    Works for a 1-D vector and for matrices of shape (n, m).
    """
    # Subtract the row-wise max for numerical stability.
    # This shifts the values so the highest one is 0, preventing overflow in np.exp.
    e_x = np.exp(x - np.max(x, axis=-1, keepdims=True))
    return e_x / e_x.sum(axis=-1, keepdims=True)

def softmax_loss(logits, labels):
    """
    Computes the Softmax Loss (Categorical Cross-Entropy).

    Args:
        logits (np.ndarray): Raw output scores from the model, shape (N, C)
        labels (np.ndarray): True labels as one-hot vectors, shape (N, C)

    Returns:
        float: The average loss over the batch.
    """
    # Get the number of samples in the batch
    N = logits.shape[0]

    # Step 1: Apply softmax to the logits to get probabilities
    probabilities = softmax(logits)

    # Step 2: Calculate the loss for each sample
    # We use a small epsilon to avoid log(0), which is undefined
    epsilon = 1e-12
    loss_per_sample = -np.sum(labels * np.log(probabilities + epsilon), axis=1)

    # Step 3: Return the average loss over the batch
    average_loss = np.sum(loss_per_sample) / N
    return average_loss

# --- Example Usage ---
# Let's say we have a batch of 3 samples and 4 classes
N = 3  # Number of samples
C = 4  # Number of classes

# Raw scores (logits) from a model's final layer
logits = np.array([
    [2.0, 1.0, 0.1, 0.2],  # Model is most confident in class 0
    [0.5, 2.5, 0.3, 0.1],  # Model is most confident in class 1
    [0.1, 0.2, 5.0, 0.1]   # Model is most confident in class 2
])

# True labels as one-hot encoded vectors
labels = np.array([
    [1, 0, 0, 0],  # True class for sample 0 is 0
    [0, 1, 0, 0],  # True class for sample 1 is 1
    [0, 0, 1, 0]   # True class for sample 2 is 2
])

# Calculate the loss
loss = softmax_loss(logits, labels)

print(f"Logits:\n{logits}\n")
print(f"Labels (one-hot):\n{labels}\n")
print(f"Calculated Softmax Loss: {loss:.4f}")

# --- Verification with a "bad" prediction ---
# Let's make the model very wrong on the first sample
bad_logits = np.array([
    [-2.0, -1.0, -0.1, -0.2],  # True class 0 now has the lowest score
    [0.5, 2.5, 0.3, 0.1],
    [0.1, 0.2, 5.0, 0.1]
])

bad_loss = softmax_loss(bad_logits, labels)

print(f"\nCalculated Softmax Loss for 'bad' prediction: {bad_loss:.4f}")
print("(Notice how the loss is much higher)")
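
The `epsilon` guard above keeps `log` finite, but it slightly perturbs the result. A common alternative is to compute the log-softmax directly with the log-sum-exp trick, so that no probability is ever passed through `log`. The sketch below does this under the assumption that labels are given as integer class indices rather than one-hot vectors. The function name `softmax_loss_from_indices` is made up for this illustration.

```python
import numpy as np

def softmax_loss_from_indices(logits, class_indices):
    """Average cross-entropy from logits (N, C) and integer labels (N,)."""
    # Shift each row so its maximum is 0; this does not change the softmax
    shifted = logits - np.max(logits, axis=1, keepdims=True)
    # log softmax = shifted - log(sum(exp(shifted)))
    log_probs = shifted - np.log(np.sum(np.exp(shifted), axis=1, keepdims=True))
    # Pick the log-probability of the true class for each sample
    n = logits.shape[0]
    return -np.mean(log_probs[np.arange(n), class_indices])

logits = np.array([
    [2.0, 1.0, 0.1, 0.2],
    [0.5, 2.5, 0.3, 0.1],
    [0.1, 0.2, 5.0, 0.1],
])
class_indices = np.array([0, 1, 2])  # same labels as the one-hot example

stable_loss = softmax_loss_from_indices(logits, class_indices)
print(f"Log-sum-exp loss: {stable_loss:.4f}")
```

Because the sum inside the `log` always includes `exp(0) = 1` from the row maximum, the argument of `log` is never smaller than 1, even for very large logits.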

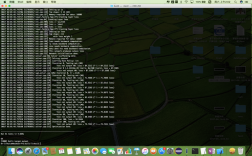

B. Using PyTorch

PyTorch provides highly optimized, numerically stable implementations of these functions. nn.CrossEntropyLoss conveniently combines LogSoftmax and NLLLoss (Negative Log Likelihood Loss) into a single, efficient class. You should pass the raw logits directly to it; it handles the softmax internally.

import torch
import torch.nn as nn

# PyTorch's CrossEntropyLoss is the standard way to do this
# It combines LogSoftmax and NLLLoss for numerical stability
# IMPORTANT: You pass the raw logits, not the softmax probabilities
criterion = nn.CrossEntropyLoss()

# --- Example Usage ---
# Same data as before, but converted to PyTorch tensors
logits_torch = torch.tensor([
    [2.0, 1.0, 0.1, 0.2],
    [0.5, 2.5, 0.3, 0.1],
    [0.1, 0.2, 5.0, 0.1]
], dtype=torch.float32)

# For CrossEntropyLoss, labels are usually class indices (a long tensor of shape (N,)).
# Recent PyTorch versions also accept class probabilities (a float tensor of
# shape (N, C)), which covers one-hot vectors. Let's use indices this time.
labels_torch = torch.tensor([0, 1, 2], dtype=torch.long)

# Calculate the loss
loss_pytorch = criterion(logits_torch, labels_torch)

print(f"PyTorch Logits Tensor:\n{logits_torch}\n")
print(f"PyTorch Labels Tensor (indices): {labels_torch}\n")
print(f"Calculated PyTorch Loss: {loss_pytorch.item():.4f}")

# The result should match our NumPy implementation up to floating-point error
# (float32 here versus float64 plus epsilon there).
# `loss` is the value computed in the NumPy example above.
print(f"\nDifference from NumPy loss: {abs(loss_pytorch.item() - loss):.6f}")
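
The claim that nn.CrossEntropyLoss combines LogSoftmax and NLLLoss can be checked directly. The sketch below computes the loss both ways on the same logits. It is a self-contained illustration with its own variable names (`logits_t`, `targets`), which are not taken from the example above.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

logits_t = torch.tensor([
    [2.0, 1.0, 0.1, 0.2],
    [0.5, 2.5, 0.3, 0.1],
    [0.1, 0.2, 5.0, 0.1],
])
targets = torch.tensor([0, 1, 2])  # class indices

# One-step version
combined = nn.CrossEntropyLoss()(logits_t, targets)

# Two-step version: log-softmax over the class dimension, then NLL loss
log_probs = F.log_softmax(logits_t, dim=1)
two_step = F.nll_loss(log_probs, targets)

print(f"CrossEntropyLoss:       {combined.item():.6f}")
print(f"log_softmax + nll_loss: {two_step.item():.6f}")
print(f"Match: {torch.allclose(combined, two_step)}")
```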

C. Using TensorFlow/Keras

TensorFlow/Keras also provides a convenient function. tf.keras.losses.CategoricalCrossentropy works with one-hot encoded labels. As with PyTorch, it is best to pass the raw logits, but here you must say so explicitly with from_logits=True, because by default the loss expects probabilities.

import tensorflow as tf

# Keras provides a pre-built loss function.
# The 'from_logits=True' argument tells Keras that the input
# is raw scores, not probabilities. It will apply the softmax
# internally for better numerical stability.
criterion_keras = tf.keras.losses.CategoricalCrossentropy(from_logits=True)

# --- Example Usage ---
# Same data as before, but converted to TensorFlow tensors
logits_tf = tf.constant([
    [2.0, 1.0, 0.1, 0.2],
    [0.5, 2.5, 0.3, 0.1],
    [0.1, 0.2, 5.0, 0.1]
], dtype=tf.float32)

labels_tf = tf.constant([
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0]
], dtype=tf.float32)

# Calculate the loss (Keras expects the true labels first, then the predictions)
loss_tf = criterion_keras(labels_tf, logits_tf)

print(f"TensorFlow Logits Tensor:\n{logits_tf}\n")
print(f"TensorFlow Labels Tensor (one-hot):\n{labels_tf}\n")
print(f"Calculated TensorFlow Loss: {loss_tf.numpy():.4f}")

# The result should match our NumPy implementation up to floating-point error.
# `loss` is the value computed in the NumPy example above.
print(f"\nDifference from NumPy loss: {abs(loss_tf.numpy() - loss):.6f}")
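
Both library sections stress passing raw logits rather than probabilities. The NumPy sketch below illustrates what goes wrong otherwise: if the caller applies softmax and the loss applies it again, the probabilities are flattened toward uniform and the loss for a confident, correct prediction is inflated. The helper `loss_from_logits` is a made-up name for this illustration, not a library function.

```python
import numpy as np

def softmax(x):
    e_x = np.exp(x - np.max(x, axis=-1, keepdims=True))
    return e_x / e_x.sum(axis=-1, keepdims=True)

def loss_from_logits(scores, class_index):
    """Cross-entropy for one sample, treating `scores` as logits."""
    return -np.log(softmax(scores)[class_index])

logits = np.array([0.1, 0.2, 5.0, 0.1])  # confident in class 2
true_class = 2

# Correct usage: raw logits go in, softmax is applied once
correct = loss_from_logits(logits, true_class)

# Mistake: softmax is applied by the caller and then again inside the loss
double = loss_from_logits(softmax(logits), true_class)

print(f"Logits passed:        {correct:.4f}")
print(f"Probabilities passed: {double:.4f}")
```

The same mismatch can occur in the other direction, for example by passing logits to a loss configured to expect probabilities.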

Summary and Key Takeaways

| Feature | NumPy (From Scratch) | PyTorch | TensorFlow/Keras |
|---|---|---|---|
| Purpose | Understanding the math | Building neural networks | Building neural networks |
| Input | Logits, One-hot labels | Logits, Class indices | Logits, One-hot labels |
| Softmax | Must be applied manually | Handled internally by nn.CrossEntropyLoss | Handled internally when from_logits=True |
| Numerical Stability | Manual np.max(x) shift is good, but not perfect | Excellent (built-in) | Excellent (built-in) |
| Recommendation | For learning only. | For research and development. | For production and deployment. |