Of course! Imputing missing values using the k-Nearest Neighbors (KNN) algorithm is a powerful and popular technique in Python. It's often more effective than simpler methods like mean or median imputation because it uses the "information" from other similar data points to make a more educated guess.

Here's a comprehensive guide covering the concept, implementation, and best practices.

The Concept: How KNN Imputation Works

The core idea behind KNN imputation is to find the k most similar (or "nearest") instances to the one with the missing value and then use the values from those neighbors to fill in the gap.

Step-by-Step Process:

- Identify Missing Value: Find the cell with the missing value (e.g., the Age value for Person A).
- Calculate Distances: For every other row in the dataset (that doesn't have a missing value in the same column), calculate the distance between that row and Person A. The distance is typically calculated using only the columns that have values for both rows (i.e., ignoring other missing values).
- Find Neighbors: Select the k rows with the smallest distances to Person A. These are the "k-nearest neighbors".
- Impute the Value: Use the values from the k neighbors to fill in the missing value for Person A. The most common strategies are:
  - Mean: The average of the neighbors' values.
  - Median: The median of the neighbors' values.
  - Mode (for categorical): The most frequent value among the neighbors.
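The steps above can be sketched in plain NumPy for a single missing cell. This is a minimal illustration, not scikit-learn's implementation. It assumes mean aggregation and a NaN-aware Euclidean distance of the kind scikit-learn's nan_euclidean_distances uses: squared differences over the shared features, scaled up by (total features / shared features). The helpers nan_euclidean and knn_impute_cell are hypothetical names, and the small array is made-up data. The last lines compare the manual result with KNNImputer.

```python
import numpy as np
from sklearn.impute import KNNImputer


def nan_euclidean(a, b):
    """Euclidean distance over coordinates present in both rows, scaled up
    by (total coordinates / present coordinates) to compensate for the
    skipped ones."""
    present = ~np.isnan(a) & ~np.isnan(b)
    if not present.any():
        return np.inf  # no shared features: treat as infinitely far away
    squared = np.sum((a[present] - b[present]) ** 2)
    return np.sqrt(squared * len(a) / present.sum())


def knn_impute_cell(X, row, col, k=3):
    """Hypothetical helper: fill X[row, col] with the mean of its k nearest donors."""
    # Step 2: donors are the other rows that have a value in this column.
    donors = [i for i in range(len(X)) if i != row and not np.isnan(X[i, col])]
    dists = [nan_euclidean(X[row], X[i]) for i in donors]
    # Step 3: keep the k donors with the smallest distances.
    nearest = [donors[i] for i in np.argsort(dists)[:k]]
    # Step 4: average the neighbors' values in the missing column.
    return X[nearest, col].mean()


# Made-up data; the third value of the first row is missing.
X = np.array([
    [1.0, 2.0, np.nan],
    [1.1, 2.1, 10.0],
    [0.9, 1.8, 12.0],
    [5.0, 6.0, 30.0],
    [1.2, 2.3, 11.0],
])

manual = knn_impute_cell(X, row=0, col=2, k=3)
library = KNNImputer(n_neighbors=3).fit_transform(X)[0, 2]
print(manual, library)
```

The fourth row is far from the first row in the two observed features, so it is left out. The gap is filled with the mean of the other three donors.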

Key Consideration: The distance calculation is heavily influenced by the scale of your features. A feature with a large range (e.g., income: 30,000-150,000) will dominate the distance calculation over a feature with a small range (e.g., age: 20-80). Therefore, scaling your data is a critical preprocessing step before KNN imputation.
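To see the scaling effect in numbers, here is a small sketch with four hypothetical rows (columns: income, age) chosen to span the ranges mentioned above. It compares which row is nearest to the first row before and after MinMaxScaler.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical rows with columns [income, age].
X = np.array([
    [30_000.0, 20.0],   # A: the row we want neighbors for
    [31_000.0, 80.0],   # B: almost the same income, very different age
    [90_000.0, 21.0],   # C: almost the same age, very different income
    [150_000.0, 50.0],  # D: stretches the income range to 150,000
])


def distances_from_first(rows):
    """Euclidean distance from row 0 to every other row."""
    return np.linalg.norm(rows[1:] - rows[0], axis=1)


raw = distances_from_first(X)
scaled = distances_from_first(MinMaxScaler().fit_transform(X))

print("raw:   ", raw.round(3))     # income decides on its own: B looks closest
print("scaled:", scaled.round(3))  # both features count: C is now closer than B
```

Unscaled, a 60-year age gap barely registers next to income differences in the tens of thousands. After scaling, each feature contributes on a comparable [0, 1] range.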

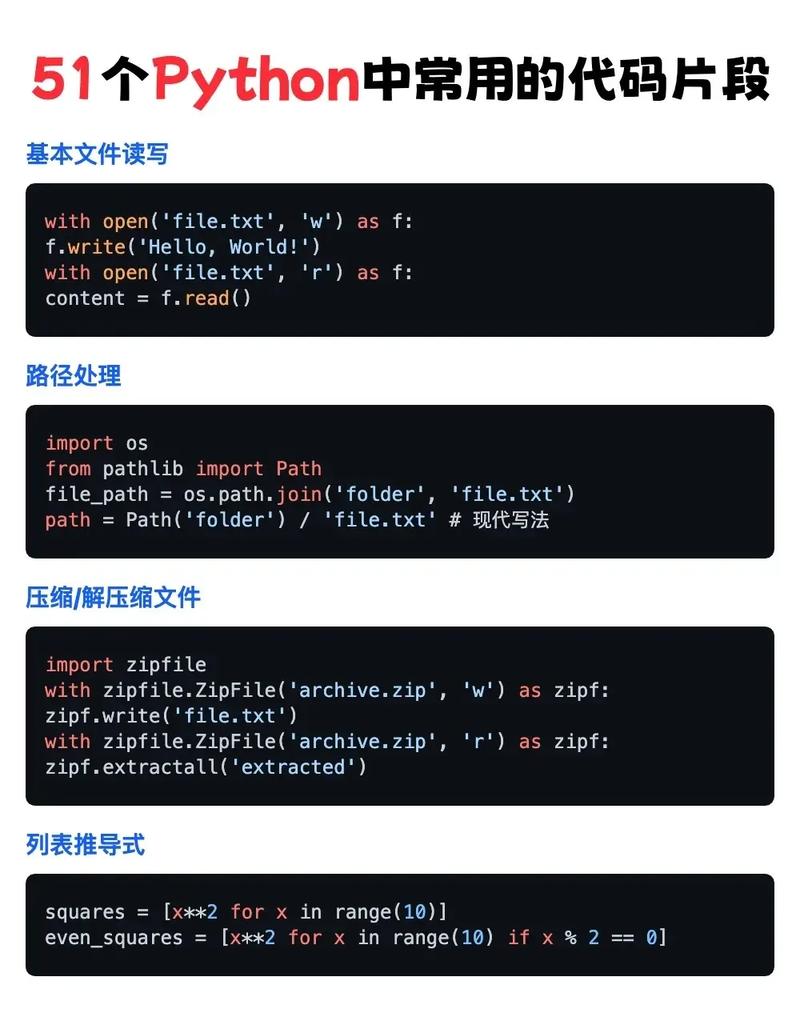

Implementation in Python using scikit-learn

The most common and user-friendly way to perform KNN imputation in Python is with the KNNImputer class from the scikit-learn library.

Setup

First, make sure you have the necessary libraries installed:

```bash
pip install numpy pandas scikit-learn
```

Example: Step-by-Step Code

Let's walk through a complete example.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer
from sklearn.preprocessing import MinMaxScaler

# 1. Create a sample DataFrame with missing values
data = {
    'Age': [25, 45, 35, 50, 23, 33, np.nan, 40],
    'Salary': [50000, 80000, 60000, 120000, 48000, 65000, 75000, np.nan],
    'Years_Experience': [2, 20, 7, 25, 1, 5, 12, 15],
    'Department': ['HR', 'IT', 'IT', 'Finance', 'HR', 'IT', 'Finance', 'IT']
}
df = pd.DataFrame(data)
print("Original DataFrame:")
print(df)
print("\n" + "="*40 + "\n")

# 2. Separate numerical and categorical features
# KNNImputer works only on numerical data.
numerical_cols = ['Age', 'Salary', 'Years_Experience']
categorical_cols = ['Department']
df_numerical = df[numerical_cols]
df_categorical = df[categorical_cols]

# 3. Scale the numerical data
# This is a crucial step for KNN to perform well.
# We use MinMaxScaler to scale features to a range [0, 1].
scaler = MinMaxScaler()
df_numerical_scaled = scaler.fit_transform(df_numerical)

# 4. Initialize and apply the KNN Imputer
# n_neighbors=3 is a common starting point.
imputer = KNNImputer(n_neighbors=3)
# The imputer works on numpy arrays
df_numerical_imputed_scaled = imputer.fit_transform(df_numerical_scaled)

# 5. Inverse the scaling to get the data back to its original scale
df_numerical_imputed = scaler.inverse_transform(df_numerical_imputed_scaled)

# 6. Create a new DataFrame with the imputed numerical data
df_imputed_numerical = pd.DataFrame(df_numerical_imputed, columns=numerical_cols)

# 7. Combine the imputed numerical data with the original categorical data
df_final = pd.concat([df_imputed_numerical, df_categorical], axis=1)
print("DataFrame after KNN Imputation:")
print(df_final.round(2))  # Rounding for cleaner output
```

Explanation of the Code

- Create DataFrame: We create a pandas DataFrame with some NaN (Not a Number) values to represent missing data.
- Separate Features: KNNImputer can only handle numerical data. If you have categorical columns, you must separate them before imputation and then recombine them later.
- Scale Data: We use MinMaxScaler to scale our numerical features to [0, 1]. This ensures that features like Salary don't unfairly dominate the distance calculation compared to Age. MinMaxScaler ignores NaN values when fitting and leaves them in place, so scaling can safely happen before imputation.
- Initialize Imputer: We create an instance of KNNImputer. The most important parameter is n_neighbors (default 5), which you should tune. An odd k is sometimes recommended to avoid ties, but that mainly matters for majority voting. KNNImputer averages the neighbors' values (uniformly by default, or weighted by inverse distance with weights='distance'), so an even k works fine too.
- Fit and Transform: The fit_transform() method combines two steps:
  - fit() stores the (scaled) training data, which serves as the pool of candidate neighbors.
  - transform() finds the k nearest neighbors of each row with missing values and fills each gap with the average of those neighbors' values.
- Inverse Scaling: The imputation was done on the scaled data. To get the values back to their original, interpretable scale, we use inverse_transform().
- Combine Data: Finally, we merge the now-complete numerical data with the original categorical data to get our final, imputed DataFrame.
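Because fit() only stores the donor pool, you can fit on training rows and later impute new rows against them. The sketch below uses hypothetical training data in the same columns as the example. It wraps the scaler and imputer in a scikit-learn Pipeline, which is one common way to keep the two steps together. It also keeps the new rows from serving as donors.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler

# Hypothetical complete training rows: [Age, Salary, Years_Experience]
X_train = np.array([
    [25, 50_000, 2],
    [45, 80_000, 20],
    [35, 60_000, 7],
    [50, 120_000, 25],
    [23, 48_000, 1],
], dtype=float)

# A new row that arrives later with Salary missing.
X_new = np.array([[40, np.nan, 15]], dtype=float)

# fit() learns the scaler's min/max and stores the scaled training rows
# as the donor pool; the neighbor search happens in transform().
pipe = make_pipeline(MinMaxScaler(), KNNImputer(n_neighbors=3))
pipe.fit(X_train)

# KNNImputer has no inverse_transform, so undo the scaling with the
# fitted scaler (the pipeline's first step).
X_new_filled = pipe[0].inverse_transform(pipe.transform(X_new))
print(X_new_filled.round(2))
```

With this data, the three training rows closest to the new row in scaled Age and Years_Experience have salaries of 80,000, 60,000, and 120,000. The filled salary is their average.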

Handling Categorical Data

As mentioned, KNNImputer is for numerical data. A common strategy for categorical data is to use mode imputation (filling with the most frequent category).

Here's how you can handle a mixed-type dataset:

```python
# Continuing from the previous example...
# Let's say 'Department' also had a missing value
df.loc[7, 'Department'] = np.nan
print("\nDataFrame with a missing categorical value:")
print(df)

# Impute numerical columns (as before)
df_numerical_imputed = pd.DataFrame(
    scaler.inverse_transform(imputer.fit_transform(df_numerical_scaled)),
    columns=numerical_cols
)

# Impute categorical columns using the mode.
# Re-select from df: df_categorical was copied out of df before the new
# NaN was introduced, so it would not contain the missing value.
df_categorical_imputed = df[categorical_cols].copy()
for col in categorical_cols:
    mode_val = df[col].mode()[0]  # Most frequent value (NaN is ignored)
    # Assign the result instead of calling fillna(inplace=True) on a column,
    # which is unreliable under pandas copy-on-write.
    df_categorical_imputed[col] = df_categorical_imputed[col].fillna(mode_val)

# Combine
df_final_mixed = pd.concat([df_numerical_imputed, df_categorical_imputed], axis=1)
print("\nFinal DataFrame with mixed-type imputation:")
print(df_final_mixed.round(2))
```

The same mode imputation is also available as sklearn.impute.SimpleImputer with strategy='most_frequent', which has the advantage of fitting into scikit-learn pipelines alongside other preprocessing steps.
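A minimal sketch of the SimpleImputer route, using the Department values from the example with the last entry missing. The column is built with an explicit object dtype, an assumption made so that behavior doesn't depend on the pandas version's default string dtype.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Department values from the example, with the last one missing.
dept = pd.DataFrame(
    {"Department": ["HR", "IT", "IT", "Finance", "HR", "IT", "Finance", np.nan]},
    dtype=object,
)

# strategy="most_frequent" fills each gap with the column's mode.
imputer = SimpleImputer(strategy="most_frequent")
dept_filled = pd.DataFrame(imputer.fit_transform(dept), columns=dept.columns)
print(dept_filled["Department"].tolist())
```

"IT" occurs three times among the observed values, so it replaces the missing entry.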

Advantages and Disadvantages

Advantages

- Often More Accurate: Leverages relationships between features, so it often gives a better estimate than a simple mean or median.

- Versatile: The idea works for both continuous values (average the neighbors) and categorical values (take the neighbors' most frequent value), although scikit-learn's KNNImputer itself only handles numerical data.

- Preserves Data Distribution: By using local information, it can better preserve the original distribution of the data compared to global imputation methods.
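One way to check the distribution claim is to compare variances on synthetic data. This sketch assumes a made-up two-column dataset where y depends linearly on x. It hides about 30% of y, then fills the gaps once with the column mean (SimpleImputer) and once with KNNImputer. Filling every gap with the observed mean gives the smallest possible variance for the completed column. KNN fills track x and therefore keep more of the spread.

```python
import numpy as np
from sklearn.impute import KNNImputer, SimpleImputer

rng = np.random.default_rng(0)

# Synthetic data: y is strongly correlated with x.
x = rng.normal(0, 1, 200)
y = 2 * x + rng.normal(0, 0.3, 200)
X_full = np.column_stack([x, y])

# Hide roughly 30% of the y values at random.
X_missing = X_full.copy()
mask = rng.random(200) < 0.3
X_missing[mask, 1] = np.nan

# Global strategy: every gap gets the same column mean.
y_mean = SimpleImputer(strategy="mean").fit_transform(X_missing)[:, 1]
# Local strategy: each gap gets the mean y of its 5 nearest neighbors in x.
y_knn = KNNImputer(n_neighbors=5).fit_transform(X_missing)[:, 1]

print("variance of y, original:    ", X_full[:, 1].var().round(3))
print("variance of y, mean-imputed:", y_mean.var().round(3))
print("variance of y, KNN-imputed: ", y_knn.var().round(3))
```

Only x is used for the distances here, so no scaling is needed. With several features you would scale first, as in the main example.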

Disadvantages

- Computationally Expensive: For very large datasets, calculating distances between every point can be slow and memory-intensive.

- Sensitive to k: The choice of k can significantly affect the results. A small k can be noisy, while a large k can oversmooth the imputed values.
- Struggles with Sparse Rows: If a row has many missing values, its distances are computed from only a few shared features, so its "nearest" neighbors may not be truly similar, leading to poor imputation. When no distance can be computed at all, scikit-learn's KNNImputer falls back to the column mean.

- Assumes Feature Correlation: KNN imputation assumes that features are correlated. If they are not, the imputation may not be meaningful.
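To choose k, one common approach is to hide values you actually know, impute them for several candidate k, and compare the error. This sketch makes a few assumptions. The data is synthetic: three correlated columns on a similar scale, so scaling is skipped. The candidate k values are arbitrary. With real data you would mask a random subset of observed entries instead.

```python
import numpy as np
from sklearn.impute import KNNImputer

rng = np.random.default_rng(42)

# Synthetic, fully observed data with correlated columns.
n = 300
x1 = rng.normal(0, 1, n)
x2 = 0.8 * x1 + rng.normal(0, 0.5, n)
x3 = -0.5 * x1 + 0.5 * x2 + rng.normal(0, 0.5, n)
X_true = np.column_stack([x1, x2, x3])

# Hide about 10% of the entries so the right answer for each gap is known.
mask = rng.random(X_true.shape) < 0.1
X_missing = X_true.copy()
X_missing[mask] = np.nan


def imputation_rmse(k):
    """Root-mean-squared error of KNN imputation on the hidden entries."""
    X_filled = KNNImputer(n_neighbors=k).fit_transform(X_missing)
    return np.sqrt(np.mean((X_filled[mask] - X_true[mask]) ** 2))


scores = {k: imputation_rmse(k) for k in (1, 3, 5, 10, 20, 50)}
for k, rmse in scores.items():
    print(f"k={k:>2}: RMSE={rmse:.3f}")
best_k = min(scores, key=scores.get)
print("lowest error at k =", best_k)
```

The error curve typically shows the trade-off described above: very small k is noisy and very large k oversmooths. The best value depends on the data, so repeat the masking with a few random seeds before settling on one.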

Alternatives to KNN Imputation

- Simple Imputation (sklearn.impute.SimpleImputer): strategy='mean', 'median', or 'most_frequent'. Fast and simple, but ignores feature relationships.
- Iterative Imputation (sklearn.impute.IterativeImputer): A more advanced model-based imputer. It builds a regression model for each feature with missing values, using all other features as predictors, and repeats this over several rounds. It can be more powerful than KNN but is also more complex and computationally demanding.
- Multivariate Imputation by Chained Equations (MICE): A sophisticated and widely used statistical technique. Similar to IterativeImputer, it iteratively imputes missing values using a series of regression models. The statsmodels library has an implementation.
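For comparison, here is a minimal IterativeImputer sketch on the numerical columns from the earlier example. IterativeImputer is still marked experimental in scikit-learn, so the enable_iterative_imputer import must come first. By default, each feature is modeled with BayesianRidge. The max_iter and random_state values are arbitrary choices for the sketch.

```python
import numpy as np
# IterativeImputer is experimental; this import enables it.
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

# Age, Salary, Years_Experience from the earlier example.
X = np.array([
    [25, 50_000, 2],
    [45, 80_000, 20],
    [35, 60_000, 7],
    [50, 120_000, 25],
    [23, 48_000, 1],
    [33, 65_000, 5],
    [np.nan, 75_000, 12],
    [40, np.nan, 15],
], dtype=float)

# Each round regresses every incomplete column on the others and
# replaces its missing entries with the predictions.
imputer = IterativeImputer(max_iter=10, random_state=0)
X_filled = imputer.fit_transform(X)
print(X_filled.round(1))
```

Setting sample_posterior=True and running the imputer with several random_state values produces multiple imputations, which is closer in spirit to MICE.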

Summary: When to Use KNN Imputation?

- Use KNN Imputation when: You have a dataset with a moderate number of samples, your features are correlated, and you want a more accurate imputation than simple methods.

- Avoid KNN Imputation when: You have a very large dataset (where performance is critical), your features are not correlated, or you have many missing values in the same rows. In these cases, SimpleImputer or IterativeImputer might be better choices.