Of course! Let's dive deep into Python WebDriver. This is one of the most powerful and popular tools for web automation, testing, and scraping.

What is a WebDriver?

At its core, a WebDriver is a browser automation framework. It allows a program (your Python script) to control a web browser—like Chrome, Firefox, or Safari—as if a real person were using it.

Think of it as a bridge:

- Your Python Script: Gives commands ("Open this URL," "Click this button," "Get the text from that element").

- WebDriver: Translates these commands into actions the web browser can understand.

- Web Browser: Executes the actions and sends back the results (e.g., the page's HTML, a screenshot, a success message).

The official specification for this communication is the W3C WebDriver standard, often called the WebDriver protocol. The most popular client library built on it is Selenium WebDriver.
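To make the bridge idea concrete, here is a minimal sketch of the HTTP requests a client sends to the driver for three common actions. The endpoint paths follow the W3C WebDriver standard; the WireCommand class, the function name, and the session id "abc123" are made up for illustration (a real session id is returned by the driver when the session is created).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WireCommand:
    """One HTTP request a client sends to the driver process."""
    method: str
    path: str
    body: Optional[dict] = None


def commands_for_title_lookup(session_id: str, url: str) -> list[WireCommand]:
    """Requests needed to open `url`, read its title, and end the session."""
    base = f"/session/{session_id}"
    return [
        WireCommand("POST", f"{base}/url", {"url": url}),  # driver.get(url)
        WireCommand("GET", f"{base}/title"),               # driver.title
        WireCommand("DELETE", base),                       # driver.quit()
    ]


# "abc123" is a placeholder session id used only for this illustration.
for command in commands_for_title_lookup("abc123", "https://example.com"):
    print(command.method, command.path, command.body)
```

Selenium builds and sends these requests for you, so you rarely see them, but knowing they exist helps when reading driver logs.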

The Key Player: Selenium

When people talk about "Python WebDriver," they are almost always referring to the Selenium library. Selenium is a powerful suite of tools specifically designed for automating web browsers.

Selenium WebDriver is the component of Selenium that drives the browser. The selenium package on PyPI provides its Python bindings.

Why Use Selenium WebDriver?

- Web Testing: Automate user interface (UI) tests. You can write scripts that simulate user interactions (clicking, typing, scrolling) to verify that your web application works as expected.

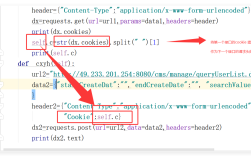

- Web Scraping: When a website relies heavily on JavaScript to load content, the simple requests and BeautifulSoup libraries won't work on their own. Selenium can render the full page, including all the dynamic content, before you scrape it.

- Automation: Automate repetitive tasks on the web. For example:

- Automatically filling out and submitting forms.

- Logging into websites.

- Checking prices on an e-commerce site.

- Taking regular screenshots of a webpage.

Getting Started: A Step-by-Step Guide

Step 1: Install the Selenium Library

First, you need to install the Selenium package using pip.

pip install selenium
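To confirm the install worked, you can ask Python which version it sees. This is a small sketch using only the standard library; installed_version is a hypothetical helper name, not part of Selenium.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


print("selenium:", installed_version("selenium") or "not installed")
```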

Step 2: Download a WebDriver

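Your Python script needs a "driver" to communicate with your chosen browser. Selenium 4.6 and later include Selenium Manager, which can locate or download a matching driver automatically. On older versions, or if you want full control, download the driver that matches your browser and version yourself.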

- Google Chrome: ChromeDriver

- Mozilla Firefox: GeckoDriver

- Microsoft Edge: EdgeDriver

- Safari: Comes pre-installed with macOS (no download needed).

Important: The version of the driver should generally match the version of your browser.
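As a rough illustration of the version rule, the sketch below compares only the major version numbers. That is the convention for ChromeDriver and EdgeDriver, and it is an assumption here rather than a universal rule: GeckoDriver uses its own version numbers, so this check does not apply to Firefox. The function names and the version strings are made up for the example.

```python
def major_version(version: str) -> int:
    """Extract the leading number from a dotted version string."""
    return int(version.strip().split(".")[0])


def versions_match(browser_version: str, driver_version: str) -> bool:
    """True when browser and driver share the same major version."""
    return major_version(browser_version) == major_version(driver_version)


# Illustrative version strings, not taken from a real installation.
print(versions_match("120.0.6099.109", "120.0.6099.71"))
print(versions_match("121.0.6167.85", "120.0.6099.71"))
```

You can read the real values from your browser's "About" page and from running the driver binary with --version.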

Step 3: Set Up Your Environment

It's a best practice to keep your driver in a known location, like a drivers folder in your project. For example, your project structure might look like this:

    my_project/
    ├── my_script.py
    └── drivers/
        └── chromedriver.exe (or chromedriver on macOS/Linux)

Step 4: Write Your First Script

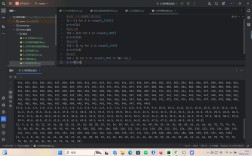

Let's write a simple script that opens Google, searches for "Python," and prints the title of the results page.

    # my_script.py
    import time

    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service
    from selenium.webdriver.common.by import By
    from selenium.webdriver.common.keys import Keys

    # --- 1. Set up the WebDriver ---
    # Point to the location of your chromedriver.
    # On Windows, it might be 'C:/path/to/your/drivers/chromedriver.exe'
    # On macOS/Linux, it might be '/Users/youruser/path/to/drivers/chromedriver'
    # If chromedriver is on your system's PATH, or you use Selenium 4.6+,
    # webdriver.Chrome() with no Service argument also works.
    service = Service(executable_path='./drivers/chromedriver')

    # Initialize the Chrome WebDriver
    driver = webdriver.Chrome(service=service)

    # --- 2. Interact with the Web ---
    try:
        # Open a URL
        driver.get("https://www.google.com")

        # Find the search box element by its NAME attribute
        search_box = driver.find_element(By.NAME, "q")

        # Type "Python" into the search box and press Enter
        search_box.send_keys("Python")
        search_box.send_keys(Keys.RETURN)

        # Wait for the results page to load (not ideal, but simple for demonstration)
        # A better approach is WebDriverWait, which we'll cover later.
        time.sleep(2)

        # Get the title of the current page
        page_title = driver.title
        print(f"Page Title is: {page_title}")

        # Verify the title contains "Python"
        assert "Python" in page_title
    finally:
        # --- 3. Clean up ---
        # Close the browser window and end the driver process
        driver.quit()

To run this script:

python my_script.py

You should see a Chrome window open, perform the search, and then close automatically.

Core Concepts: Finding Elements

The most important part of web automation is finding the HTML element you want to interact with (a button, a link, an input field). Selenium provides several ways to do this using find_element (for a single element) or find_elements (for a list of elements).

The By class contains the locator strategies:

| Locator Strategy | Description | Example |
|---|---|---|
| By.ID | Finds an element by its unique id attribute. | driver.find_element(By.ID, "username") |
| By.NAME | Finds an element by its name attribute. | driver.find_element(By.NAME, "q") |
| By.CLASS_NAME | Finds an element by its class attribute. | driver.find_element(By.CLASS_NAME, "search-btn") |
| By.TAG_NAME | Finds an element by its HTML tag name. | driver.find_element(By.TAG_NAME, "h1") |
| By.XPATH | Finds an element using a path-like expression in the HTML document. Very powerful. | driver.find_element(By.XPATH, "//div[@class='header']/a") |
| By.CSS_SELECTOR | Finds an element using a CSS selector. Very common and powerful. | driver.find_element(By.CSS_SELECTOR, "div.header > a") |

Best Practice: Use the most stable and unique locator. ID is best, followed by Name, CSS Selector, and then XPath.
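One practical difference between the two methods: find_element raises NoSuchElementException when nothing matches, while find_elements returns an empty list. The sketch below uses that to try several locators in order of preference. find_first_match is a hypothetical helper, not part of Selenium, and it accepts any object with a find_elements(by, value) method.

```python
from typing import Any, Iterable, Optional, Tuple

# A locator is a (strategy, value) pair, e.g. (By.ID, "username").
Locator = Tuple[str, str]


def find_first_match(driver: Any, locators: Iterable[Locator]) -> Optional[Any]:
    """Try each locator in order; return the first matching element or None."""
    for by, value in locators:
        matches = driver.find_elements(by, value)  # empty list when nothing matches
        if matches:
            return matches[0]
    return None


# Usage with a real driver (the locator values here are illustrative):
#   from selenium.webdriver.common.by import By
#   box = find_first_match(driver, [(By.ID, "search"), (By.NAME, "q")])
```

Listing the most stable locator first keeps the preferred strategy in use whenever it is available.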

Handling Waits (Crucial for Modern Websites)

Modern websites load content dynamically with JavaScript. If your script tries to find an element before it exists, it will fail. Using time.sleep() works but is inefficient and brittle. The better way is to use Explicit Waits.

An explicit wait tells your script to wait for a certain condition to be met (e.g., an element is visible, clickable, or present) before proceeding.

    from selenium.webdriver.common.by import By
    from selenium.webdriver.common.keys import Keys
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    # ... (driver setup) ...

    try:
        driver.get("https://www.google.com")

        # Wait up to 10 seconds for the search box to be visible
        search_box = WebDriverWait(driver, 10).until(
            EC.visibility_of_element_located((By.NAME, "q"))
        )
        search_box.send_keys("Selenium with explicit waits")
        search_box.send_keys(Keys.RETURN)

        # Wait for the results page title to be updated
        WebDriverWait(driver, 10).until(
            EC.title_contains("Selenium with explicit waits")
        )
        print("Page Title:", driver.title)
    finally:
        driver.quit()
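Conceptually, an explicit wait is a polling loop: check the condition, and if it is not met yet, sleep briefly and try again until a deadline passes. The sketch below is a simplified stand-in to show the idea, not Selenium's implementation; wait_until and its parameters are hypothetical names. The 0.5-second default mirrors WebDriverWait's default poll frequency. The real class raises its own TimeoutException and also ignores NoSuchElementException while polling.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], Optional[T]], timeout: float = 10.0,
               poll_interval: float = 0.5) -> T:
    """Call `condition` repeatedly until it returns a truthy value or time runs out."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


# Demo: the condition becomes true roughly 0.2 seconds after the start.
started = time.monotonic()
ready = wait_until(lambda: time.monotonic() - started > 0.2,
                   timeout=2, poll_interval=0.05)
print("condition met:", ready)
```

Unlike time.sleep(2), this returns as soon as the condition holds, so fast pages are not slowed down and slow pages still get the full timeout.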

Best Practices

- Use Page Object Model (POM): For large test suites, don't write all your locators and actions in one giant script. Instead, create a class for each page (e.g., LoginPage, HomePage). This makes your code cleaner, more reusable, and easier to maintain.

- Use driver.quit(): Always call driver.quit() in a finally block to ensure the browser is closed, even if your script encounters an error. This prevents orphaned browser processes.

- Use Relative Locators: If an element doesn't have a good id or name, you can find it relative to another element. Selenium 4 introduced this feature.

      from selenium.webdriver.support.relative_locator import locate_with

      # Find a button that is above a specific text element
      password_label = driver.find_element(By.ID, "password-label")
      submit_button = driver.find_element(
          locate_with(By.TAG_NAME, "button").above(password_label)
      )

- Headless Mode: For running scripts on a server without a display, you can run the browser in "headless" mode.

      from selenium.webdriver.chrome.options import Options

      options = Options()
      options.add_argument("--headless")  # Run in headless mode
      options.add_argument("--disable-gpu")
      driver = webdriver.Chrome(service=service, options=options)
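To illustrate the Page Object Model point from the list above, here is a minimal sketch of a page class. The page, its element ids, and the CSS selector are hypothetical. Locators are written as plain strings so the snippet has no imports; By.ID and By.CSS_SELECTOR are the strings "id" and "css selector", so in a real project you would normally use the By constants instead.

```python
from typing import Any


class LoginPage:
    """Page object for a hypothetical login page."""

    # Locators live in one place, so a markup change needs one edit.
    USERNAME = ("id", "username")                       # same as (By.ID, "username")
    PASSWORD = ("id", "password")                       # same as (By.ID, "password")
    SUBMIT = ("css selector", "button[type='submit']")  # same as By.CSS_SELECTOR

    def __init__(self, driver: Any) -> None:
        self.driver = driver

    def log_in(self, username: str, password: str) -> None:
        """Fill in both fields and submit the form."""
        self.driver.find_element(*self.USERNAME).send_keys(username)
        self.driver.find_element(*self.PASSWORD).send_keys(password)
        self.driver.find_element(*self.SUBMIT).click()


# Usage with a real driver (credentials are placeholders):
#   LoginPage(driver).log_in("alice", "s3cret")
```

Tests then read as a sequence of page actions, and only the page class knows how elements are located.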

Alternatives to Selenium

While Selenium is the king, other tools exist for specific use cases:

- Playwright: A newer, modern automation library from Microsoft. It's known for being faster, more reliable, and having better auto-waiting features. It supports Chromium, Firefox, and WebKit.

- Pyppeteer: A Python port of Puppeteer (a Node.js library). It controls headless Chrome/Chromium and is excellent for scraping and generating PDFs/screenshots.

- Requests + BeautifulSoup: For simple, static websites, this combination is much faster and lighter than Selenium. It's the go-to for scraping if JavaScript isn't involved.
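To show why static pages don't need a browser, the sketch below extracts a page title from raw HTML without running any JavaScript. It deliberately swaps in the standard library's html.parser in place of Requests and BeautifulSoup so it runs with no extra installs. The HTML string stands in for a downloaded page, and the class and function names are made up for the example.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text inside the <title> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_title(html: str) -> str:
    """Return the page title found in `html`, or an empty string."""
    parser = TitleParser()
    parser.feed(html)
    return parser.title.strip()


# Stand-in for the body of an HTTP response from a static site.
STATIC_PAGE = ("<html><head><title>Price list</title></head>"
               "<body><p>Widget: $5</p></body></html>")
print(extract_title(STATIC_PAGE))
```

If the content you need only appears after JavaScript runs, this approach returns nothing useful, and that is the case where Selenium earns its overhead.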