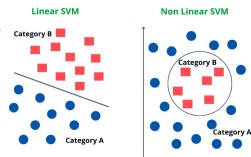

The term "regularize" in Python, especially in data science and machine learning, refers to a set of techniques used to reduce overfitting in models.

What is Overfitting?

Imagine you're teaching a student to recognize cats. If you only show them 10 pictures of your specific cat, they might become an expert at identifying that exact cat but fail to recognize a different cat they've never seen before. This is overfitting.

In machine learning, an overfit model learns the training data too well, including its noise and random fluctuations. As a result, it performs poorly on new, unseen data. It has low bias but high variance.

Goal of Regularization: To create models that are simpler and generalize better. They may fit the training data slightly less well, but they usually perform better on new data. It's a trade-off between fitting the training data well (low bias) and keeping the model simple (low variance).

The Core Idea of Regularization

Many regularization techniques work by adding a penalty on the model's complexity. This penalty is typically added to the loss function that the model tries to minimize.

Modified Loss Function = Original Loss + Penalty Term

By adding this penalty, the model is encouraged to:

- Use smaller coefficient values for its features.

- Use fewer features (in some techniques).

This forces the model to focus on the most important patterns and ignore the noise.
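To make the "Original Loss + Penalty Term" formula concrete, here is a minimal NumPy sketch of a penalized mean-squared-error loss. The function name `penalized_mse` and the choice of MSE as the original loss are illustrative assumptions; real libraries scale these terms differently (scikit-learn's `Lasso`, for example, divides the squared error by 2n).

```python
import numpy as np

def penalized_mse(w, X, y, lam, penalty="l2"):
    """Mean squared error plus an L1 or L2 penalty on the weights (illustrative)."""
    residuals = X @ w - y
    data_loss = np.mean(residuals ** 2)      # the original loss
    if penalty == "l1":
        reg = lam * np.sum(np.abs(w))        # lambda * sum |w_i|
    elif penalty == "l2":
        reg = lam * np.sum(w ** 2)           # lambda * sum w_i^2
    else:
        raise ValueError("penalty must be 'l1' or 'l2'")
    return data_loss + reg

X = np.array([[1.0, 0.0], [0.0, 1.0]])
y = np.array([1.0, 2.0])
w = np.array([1.0, 2.0])   # fits y exactly, so the data loss is 0

print(penalized_mse(w, X, y, lam=0.5, penalty="l1"))  # 0 + 0.5 * (1 + 2) = 1.5
print(penalized_mse(w, X, y, lam=0.5, penalty="l2"))  # 0 + 0.5 * (1 + 4) = 2.5
```

Even though `w` fits the training data perfectly, the penalty makes this solution more expensive, so an optimizer minimizing the penalized loss would trade a little training error for smaller weights.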

Common Regularization Techniques in Python

Here are the most popular regularization methods, primarily used for linear models and neural networks.

L1 Regularization (Lasso - Least Absolute Shrinkage and Selection Operator)

L1 regularization adds a penalty proportional to the sum of the absolute values of the coefficients.

- Penalty Term: λ * Σ|weights|

- Effect: It can force some of the feature coefficients to become exactly zero. This makes L1 regularization useful for feature selection: it automatically identifies and discards the least important features.

- When to use: When you suspect that only a small number of your features are actually important, or when you need a more interpretable model with fewer features.
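Why does the L1 penalty produce exact zeros? In the simplified case of a single coefficient with a standardized feature, minimizing ½(w - z)² + λ|w|, where z is the unpenalized estimate, has a closed-form answer called soft-thresholding: any z with |z| ≤ λ becomes exactly 0, and larger values are pulled toward zero by λ. The sketch below assumes that one-dimensional setting; coordinate-descent Lasso solvers apply this kind of update one coefficient at a time.

```python
import numpy as np

def soft_threshold(z, lam):
    """Minimizer of 0.5 * (w - z)**2 + lam * |w|, applied to each entry of z."""
    # Estimates inside [-lam, lam] snap to exactly 0; the rest shrink by lam
    return np.where(np.abs(z) <= lam, 0.0, z - lam * np.sign(z))

# Hypothetical unpenalized estimates for five coefficients
z = np.array([3.0, -0.4, 0.8, -2.5, 0.1])
result = soft_threshold(z, lam=1.0)
# 3.0 -> 2.0 and -2.5 -> -1.5; the three small estimates become exactly 0.0
print(result)
```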

Python Implementation (using scikit-learn):

```python
from sklearn.linear_model import Lasso
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split

# Generate sample data: 10 features, only 3 of which actually influence y
X, y = make_regression(n_samples=100, n_features=10, n_informative=3,
                       noise=25, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Create and train a Lasso model
# alpha is the regularization strength (lambda). Larger alpha means a stronger penalty.
# alpha=10.0 is chosen to be large relative to the noise so the sparsity is visible.
lasso_model = Lasso(alpha=10.0)
lasso_model.fit(X_train, y_train)

# Check the coefficients
print("Lasso Coefficients:", lasso_model.coef_)
# The coefficients of the uninformative features are typically driven to exactly 0.
```
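To see the sparsity effect directly, the sketch below refits Lasso over a range of alpha values and counts the coefficients that are exactly zero. It regenerates the data with `n_informative=3`, an assumption made so that 7 of the 10 features are pure noise; the exact counts at intermediate alpha values depend on the data and random seed.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso
from sklearn.model_selection import train_test_split

# 10 features, only 3 of which influence y
X, y = make_regression(n_samples=100, n_features=10, n_informative=3,
                       noise=25, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Count exact zeros in the fitted coefficients as the penalty grows
for alpha in [0.01, 1.0, 10.0, 100.0, 10_000.0]:
    model = Lasso(alpha=alpha).fit(X_train, y_train)
    n_zero = int(np.sum(model.coef_ == 0))
    print(f"alpha={alpha:>8}: {n_zero} of {model.coef_.size} coefficients are exactly 0")
```

With a very large alpha, every coefficient is zero and the model predicts only the intercept; in practice alpha is usually tuned by cross-validation rather than picked by hand.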

L2 Regularization (Ridge Regression)

L2 regularization adds a penalty proportional to the sum of the squared coefficients.

- Penalty Term: λ * Σ(weights²)

- Effect: It shrinks the coefficients towards zero, but it rarely makes them exactly zero. It tends to spread the penalty across all coefficients, making them smaller but non-zero. This is useful when most features are relevant.

- When to use: When you have many features that are all potentially useful, and you want to prevent any single one from having too much influence.
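For squared-error loss, the L2-penalized problem min ||y - Xw||² + λ||w||² has a closed-form solution, w = (XᵀX + λI)⁻¹Xᵀy, which makes the shrinkage easy to see. The NumPy sketch below assumes no intercept term and uses synthetic data; it illustrates the math and is not a description of how scikit-learn's `Ridge` is implemented internally.

```python
import numpy as np

def ridge_closed_form(X, y, lam):
    """Solve min_w ||y - X w||^2 + lam * ||w||^2 (no intercept)."""
    n_features = X.shape[1]
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)   # solve() is more stable than inverting A

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 4))
true_w = np.array([2.0, -1.0, 0.5, 0.0])
y = X @ true_w + rng.normal(scale=0.5, size=50)

# As lambda grows, the weight vector shrinks, but no entry is forced to exactly 0
for lam in [0.0, 1.0, 10.0, 100.0]:
    w = ridge_closed_form(X, y, lam)
    print(f"lambda={lam:>5}: w={np.round(w, 3)}, ||w||={np.linalg.norm(w):.3f}")
```

With λ = 0 this reduces to ordinary least squares; adding λI also keeps the system solvable when features are highly correlated, which is one reason Ridge handles correlated features well.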

Python Implementation (using scikit-learn):

```python
from sklearn.linear_model import Ridge

# Reuses X_train and y_train from the Lasso example above
# Create and train a Ridge model
# alpha is the regularization strength (lambda).
ridge_model = Ridge(alpha=0.1)
ridge_model.fit(X_train, y_train)

# Check the coefficients
print("Ridge Coefficients:", ridge_model.coef_)
# All coefficients are shrunk toward 0, but none are exactly 0.
# With alpha=0.1 the shrinkage is mild; a larger alpha makes it easier to see.
```

Elastic Net (Combination of L1 and L2)

Elastic Net is a hybrid that combines both L1 and L2 penalties. It's useful when you have many correlated features.

- Penalty Term: λ1 * Σ|weights| + λ2 * Σ(weights²)

- Effect: It inherits the benefits of both L1 (feature selection) and L2 (handling correlated features gracefully).

- Parameters: alpha sets the overall strength of the regularization; l1_ratio sets the mix between L1 and L2. A value of 1 is pure L1 (Lasso), and 0 is pure L2 (Ridge).

- When to use: When you have a large number of features, some of which may be correlated, and you want to perform feature selection.
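The two parameters map onto λ1 and λ2. In scikit-learn's `ElasticNet`, the penalty is alpha * l1_ratio * Σ|w| + 0.5 * alpha * (1 - l1_ratio) * Σw², paired with a squared-error term scaled by 1/(2n). The sketch below computes just that penalty term; the helper name `elastic_net_penalty` is hypothetical.

```python
import numpy as np

def elastic_net_penalty(w, alpha, l1_ratio):
    """Penalty term in scikit-learn's ElasticNet parametrization (illustrative helper)."""
    lam1 = alpha * l1_ratio                 # weight on sum |w_i|
    lam2 = 0.5 * alpha * (1.0 - l1_ratio)   # weight on sum w_i^2
    return lam1 * np.sum(np.abs(w)) + lam2 * np.sum(w ** 2)

w = np.array([1.0, -2.0, 0.0, 3.0])   # sum |w| = 6, sum w^2 = 14
for ratio in [1.0, 0.5, 0.0]:
    # Mathematically: 0.1 * 6 = 0.6 (pure L1), 0.3 + 0.35 = 0.65 (mix), 0.05 * 14 = 0.7 (pure L2)
    print(ratio, elastic_net_penalty(w, alpha=0.1, l1_ratio=ratio))
```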

Python Implementation (using scikit-learn):

```python
from sklearn.linear_model import ElasticNet

# Create and train an Elastic Net model
# alpha is the overall strength, l1_ratio is the mix between L1 and L2.
elastic_model = ElasticNet(alpha=0.1, l1_ratio=0.5)  # 50% L1, 50% L2
elastic_model.fit(X_train, y_train)

# Check the coefficients
print("Elastic Net Coefficients:", elastic_model.coef_)
```

Regularization in Neural Networks (Deep Learning)

In deep learning, overfitting is an even bigger challenge due to the massive number of parameters. Here are the most common forms of regularization:

L1/L2 on Weights

This is the direct equivalent of what we saw in linear models. You add an L1 or L2 penalty to the loss function based on the weights of the network layers. This is built into most deep learning frameworks.
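Under the hood, an L2 penalty λΣw² adds 2λw to the gradient of each weight, so every update pulls the weights toward zero; this is why L2 regularization is often called "weight decay". The NumPy sketch below shows one plain gradient-descent step. The learning rate, λ, and the zero data gradient are assumptions chosen to isolate the penalty's effect, and adaptive optimizers such as Adam interact with the penalty differently.

```python
import numpy as np

def sgd_step_with_l2(w, grad_data, lr, lam):
    """One gradient-descent step on: data_loss + lam * sum(w**2)."""
    grad_penalty = 2.0 * lam * w          # derivative of lam * w**2
    return w - lr * (grad_data + grad_penalty)

w = np.array([0.5, -1.0, 2.0])
zero_grad = np.zeros_like(w)   # pretend the data loss is already at a minimum

w_next = sgd_step_with_l2(w, zero_grad, lr=0.1, lam=0.01)
# With no data gradient, each weight is scaled by (1 - 2 * lr * lam) = 0.998
print(w_next)
```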

Python Implementation (using TensorFlow/Keras):

```python
import tensorflow as tf
from tensorflow.keras import layers, models, regularizers

# Create a simple model with an L2 penalty on the first Dense layer's weights
# and an L1 penalty on the second
model = models.Sequential([
    layers.Dense(64, activation='relu',
                 kernel_regularizer=regularizers.l2(0.001)),  # L2 penalty with lambda=0.001
    layers.Dense(64, activation='relu',
                 kernel_regularizer=regularizers.l1(0.001)),  # L1 penalty with lambda=0.001
    layers.Dense(1)
])

model.compile(optimizer='adam', loss='mse')
# model.fit(...)  # You would then train your model with data
```

Dropout

This is one of the most effective and popular regularization techniques for neural networks.

- How it works: During training, at each step, a random subset of neurons (usually 20-50%) is "dropped out" or temporarily removed from the network. This means their outputs are ignored, and they don't participate in forward or backward propagation for that step.

- Effect: It prevents neurons from co-adapting too much. Each neuron must learn to be useful on its own, which makes the network more robust and less likely to rely on any single neuron or small group of neurons. It's like training an ensemble of different sub-networks.

Python Implementation (using TensorFlow/Keras):

```python
# Create a model with Dropout layers
model = models.Sequential([
    layers.Dense(64, activation='relu'),
    layers.Dropout(0.5),  # Drop 50% of neurons during training
    layers.Dense(64, activation='relu'),
    layers.Dropout(0.5),
    layers.Dense(1)
])

model.compile(optimizer='adam', loss='mse')
# model.fit(...)
```

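Important: Dropout is only active during training. During evaluation or prediction, all neurons are used. To keep the expected activations consistent between the two phases, the original formulation scales outputs at test time by the keep probability (1 - rate), not by the dropout rate itself. Keras and PyTorch instead use "inverted dropout": during training they scale the surviving activations up by 1 / (1 - rate), so no scaling is needed at inference.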
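The inverted-dropout variant can be sketched in a few lines of NumPy. The function name and signature are illustrative, not a framework API; the point is that zeroing a fraction `rate` of units and dividing the survivors by (1 - rate) leaves the expected activation unchanged, so inference can simply pass inputs through.

```python
import numpy as np

def inverted_dropout(x, rate, training, rng):
    """Zero a fraction `rate` of activations during training and rescale the rest."""
    if not training or rate == 0.0:
        return x                                   # inference: identity
    keep_prob = 1.0 - rate
    mask = rng.random(x.shape) < keep_prob         # True = keep this unit
    return np.where(mask, x / keep_prob, 0.0)      # survivors scaled by 1 / (1 - rate)

rng = np.random.default_rng(0)
activations = np.ones(100_000)

train_out = inverted_dropout(activations, rate=0.5, training=True, rng=rng)
test_out = inverted_dropout(activations, rate=0.5, training=False, rng=rng)

print("fraction zeroed:", np.mean(train_out == 0))  # close to 0.5
print("mean during training:", train_out.mean())    # close to 1.0
print("mean at inference:", test_out.mean())        # exactly 1.0
```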

Data Augmentation

This form of regularization is most commonly used with image, audio, and text data.

- How it works: You artificially increase the size and diversity of your training data by creating modified versions of your existing data.

- Examples for Images:

- Rotating, flipping, or shifting an image.

- Zooming in or out.

- Changing brightness or contrast.

- Effect: The model sees many variations of the same object and learns to recognize its core features regardless of its orientation, lighting, or position. This makes it much more robust.
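Two of the image transformations listed above can be sketched without any framework. This NumPy version applies a random horizontal flip and a random integer shift with zero padding to a 2-D grayscale array; the function name, the zero fill, and the shift range are illustrative assumptions, and it omits rotation, zoom, and brightness changes.

```python
import numpy as np

def augment(image, rng, max_shift=2):
    """Randomly flip an (H, W) image horizontally and shift it by up to max_shift pixels."""
    out = image
    if rng.random() < 0.5:
        out = out[:, ::-1]                             # horizontal flip
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    h, w = out.shape
    shifted = np.zeros_like(out)
    # Copy the overlapping region; pixels shifted in from outside are filled with 0
    src_y = slice(max(0, -dy), min(h, h - dy))
    dst_y = slice(max(0, dy), min(h, h + dy))
    src_x = slice(max(0, -dx), min(w, w - dx))
    dst_x = slice(max(0, dx), min(w, w + dx))
    shifted[dst_y, dst_x] = out[src_y, src_x]
    return shifted

rng = np.random.default_rng(42)
image = np.arange(36, dtype=float).reshape(6, 6)   # stand-in for a tiny grayscale image
batch = np.stack([augment(image, rng) for _ in range(4)])
print(batch.shape)   # (4, 6, 6)
```

Each call produces a slightly different version of the same image, which is how augmentation enlarges the effective training set without collecting new data.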

Python Implementation (using TensorFlow/Keras):

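```python
from tensorflow.keras.preprocessing.image import ImageDataGenerator

# Create an ImageDataGenerator for data augmentation.
# Note: ImageDataGenerator is deprecated in recent TensorFlow releases; the docs
# recommend Keras preprocessing layers (e.g. RandomFlip, RandomRotation) instead.
datagen = ImageDataGenerator(
    rotation_range=20,        # rotate by up to 20 degrees
    width_shift_range=0.2,    # shift horizontally by up to 20% of the width
    height_shift_range=0.2,   # shift vertically by up to 20% of the height
    horizontal_flip=True)     # randomly mirror images left to right

# This generator can then be used in model.fit(), providing augmented
# data on the fly during training.
# model.fit(datagen.flow(X_train, y_train, batch_size=32), ...)
```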

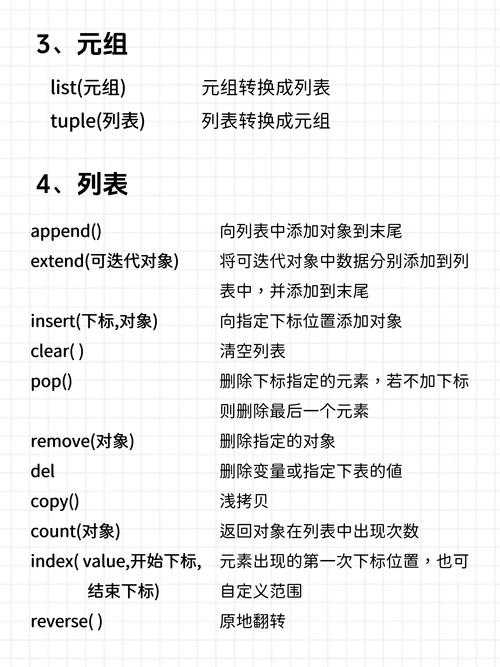

Summary Table

| Technique | How it Works | Best For | Python Implementation |
|---|---|---|---|
| L1 (Lasso) | Adds penalty based on absolute value of coefficients. | Feature selection, sparse models. | Lasso(alpha=...) (scikit-learn) |
| L2 (Ridge) | Adds penalty based on squared value of coefficients. | Handling correlated features, preventing large weights. | Ridge(alpha=...) (scikit-learn) |
| Elastic Net | Combines L1 and L2 penalties. | Large feature sets with potential correlations. | ElasticNet(alpha=..., l1_ratio=...) (scikit-learn) |
| Dropout | Randomly deactivates neurons during training. | Many neural network architectures. | layers.Dropout(rate=...) (Keras) |
| Data Augmentation | Creates new training samples by modifying existing data. | Image, audio, and text data. | ImageDataGenerator (Keras) |

To "regularize" in Python is to apply these techniques to build models that are simpler, more robust, and better at generalizing to new, unseen data.