Of course! The statement import web in Python is worth a closer look because it touches on a common point of confusion.

The short answer is: There is no standard, built-in Python module named web.

The web is a vast topic, and Python handles it in many different ways through various third-party libraries. The import web statement will only work if you have a specific, non-standard library installed that provides a module named web.
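One way to check this on your own machine is to ask Python whether it can locate a module by name before importing it. The sketch below uses importlib.util.find_spec from the standard library; the helper name module_available is made up for illustration.

```python
import importlib.util


def module_available(name: str) -> bool:
    """Return True if a top-level module called `name` can be found on the import path."""
    return importlib.util.find_spec(name) is not None


# "json" ships with Python, so it is always found.
print(module_available("json"))

# "web" is only found if some installed package (such as web.py) provides it.
print(module_available("web"))
```

If the second call prints False, import web would fail with ModuleNotFoundError until a package that provides the module is installed.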

Let's break down the most common things people are looking for when they try to import web.

The Most Likely Candidate: The web.py Framework

If you're trying to build a website or a web API, you've likely heard of the famous, minimalist web framework web.py.

In this case, the import statement is exactly import web. The module is not built in, though: it comes from the web.py package, which you must install first.

What is web.py?

web.py is a small, easy-to-learn web framework. It suits small projects, APIs, and learning the fundamentals of web development with Python.

How to Use web.py

Step 1: Installation

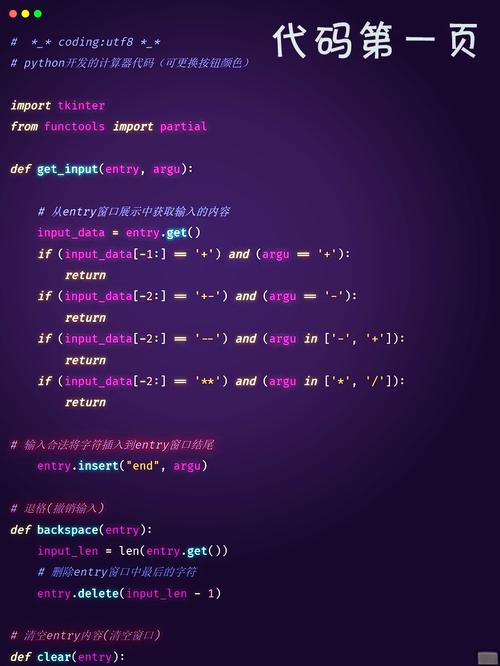

You need to install it using pip:

    pip install web.py

Step 2: A Simple "Hello, World!" Example

Here is a basic application that runs a web server and returns "Hello, world!" when you visit the root URL.

    # Import the web module from the web.py library
    import web

    # Define the URLs and the classes that handle them
    # This maps the URL '/' to the 'index' class
    urls = (
        '/', 'index'
    )

    # Create a web application object
    app = web.application(urls, globals())

    # Define the class for our index page
    class index:
        def GET(self):
            # This method is called when a GET request is made to '/'
            return "Hello, world!"

    # This is the standard way to run the application
    if __name__ == "__main__":
        app.run()

Step 3: Run the Application

Save the code above as a file (e.g., myapp.py) and run it from your terminal:

    python myapp.py

You will see output like this:

    http://0.0.0.0:8080/

Now, open your web browser and go to http://127.0.0.1:8080. You will see "Hello, world!".

Other Common Web-Related Imports

People often want to do things like make web requests, parse HTML, or run a server. Here are the standard ways to do those things.

Making HTTP Requests (Calling a Web API)

To get data from another website or API, the most popular library is requests. It's much more user-friendly than Python's built-in urllib.

Installation:

    pip install requests

Example:

    import requests

    # Make a GET request to the JSONPlaceholder API
    response = requests.get('https://jsonplaceholder.typicode.com/todos/1')

    # Check if the request was successful (status code 200)
    if response.status_code == 200:
        # The response content is in JSON format, so we parse it
        data = response.json()
        print(f"User ID: {data['userId']}")
        print(f"Title: {data['title']}")
        print(f"Completed: {data['completed']}")
    else:
        print(f"Error: {response.status_code}")

    # Output:
    # User ID: 1
    # Title: delectus aut autem
    # Completed: False
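For comparison, here is a rough sketch of the same request using only the built-in urllib, to show what requests saves you. The helper names parse_todo and fetch_todo are made up for illustration, and the timeout value is an arbitrary assumption.

```python
import json
import urllib.request


def parse_todo(raw: bytes) -> dict:
    """Decode a JSON response body into a dictionary."""
    return json.loads(raw.decode("utf-8"))


def fetch_todo(url: str, timeout: float = 10.0) -> dict:
    """Fetch `url` with the standard library and return the parsed JSON body."""
    # urlopen raises urllib.error.HTTPError for 4xx/5xx responses
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return parse_todo(response.read())


if __name__ == "__main__":
    try:
        data = fetch_todo("https://jsonplaceholder.typicode.com/todos/1")
        print(f"Title: {data['title']}")
    except OSError as exc:
        # URLError, HTTPError, and socket timeouts are all subclasses of OSError
        print(f"Request failed: {exc}")
```

With urllib you decode the bytes and parse the JSON yourself, and errors arrive as exceptions rather than as a status code you check.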

Creating a Simple Web Server (For Testing or Local Files)

If you just want to quickly share files from a directory or test a static site, Python has a built-in HTTP server.

You don't even need to write a Python script for this. Just navigate to the directory you want to share in your terminal and run:

    # For Python 3
    python -m http.server

    # For Python 2
    python -m SimpleHTTPServer

This will start a server on http://localhost:8000 that you can access in your browser.

If you wanted to do this programmatically, you would use the http.server module:

    # Import the necessary modules from the standard library
    from http.server import HTTPServer, SimpleHTTPRequestHandler

    # Define the port and address
    PORT = 8000
    ADDRESS = "localhost"

    # Create the server object
    # It will serve files from the current directory
    server = HTTPServer((ADDRESS, PORT), SimpleHTTPRequestHandler)

    print(f"Server started at http://{ADDRESS}:{PORT}")
    print("Serving files from the current directory...")

    # Run the server forever
    try:
        server.serve_forever()
    except KeyboardInterrupt:
        print("\nServer stopped.")
        server.server_close()
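The same module can also return a response you build yourself instead of serving files. The following is a minimal sketch, assuming a made-up handler class HelloHandler and helper fetch_greeting. It starts the server on a free port in a background thread, requests the root URL once, and shuts down again.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class HelloHandler(BaseHTTPRequestHandler):
    """Hypothetical handler that answers every GET with a plain-text greeting."""

    def do_GET(self):
        body = b"Hello, world!"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Silence the default per-request logging to stderr
        pass


def fetch_greeting() -> str:
    """Start the server on a free port, request '/', and return the response text."""
    server = HTTPServer(("127.0.0.1", 0), HelloHandler)  # port 0: the OS picks a free port
    port = server.server_address[1]
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
        conn.request("GET", "/")
        text = conn.getresponse().read().decode("utf-8")
        conn.close()
        return text
    finally:
        server.shutdown()
        server.server_close()


print(fetch_greeting())
```

This is meant for local testing only; the http.server documentation itself advises against using it in production.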

Parsing HTML (Web Scraping)

To read and extract data from HTML pages, the go-to library is BeautifulSoup4. It works with a parser like lxml or html.parser.

Installation:

    pip install beautifulsoup4
    pip install lxml  # A fast and efficient parser

Example:

    import requests
    from bs4 import BeautifulSoup

    # Fetch the HTML content of a webpage
    url = 'http://quotes.toscrape.com/'
    response = requests.get(url)

    # Create a BeautifulSoup object to parse the HTML
    soup = BeautifulSoup(response.text, 'lxml')

    # Find all the quote containers
    quotes = soup.find_all('div', class_='quote')

    print("--- Found Quotes ---")

    for quote in quotes:
        # Extract the text from the 'span' with class 'text'
        text = quote.find('span', class_='text').get_text(strip=True)

        # Extract the author's name
        author = quote.find('small', class_='author').get_text(strip=True)

        print(f'"{text}" - {author}')
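The same parsing logic can be tried without a network connection or lxml. The sketch below runs BeautifulSoup with the built-in html.parser on an inline HTML string. The SAMPLE_HTML snippet and the extract_quotes helper are made up for illustration and only imitate the div/span/small structure used in the example. It also guards against find() returning None, which would otherwise raise AttributeError on pages where a tag is missing.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML that imitates the structure the scraping example looks for
SAMPLE_HTML = """
<div class="quote">
  <span class="text">Simple is better than complex.</span>
  <small class="author">Tim Peters</small>
</div>
<div class="quote">
  <span class="text">Readability counts.</span>
</div>
"""


def extract_quotes(html: str) -> list[tuple[str, str]]:
    """Return (text, author) pairs, using 'Unknown' when the author tag is missing."""
    soup = BeautifulSoup(html, "html.parser")  # built-in parser, no lxml required
    results = []
    for quote in soup.find_all("div", class_="quote"):
        text_tag = quote.find("span", class_="text")
        if text_tag is None:
            continue  # skip containers that have no quote text
        author_tag = quote.find("small", class_="author")
        author = author_tag.get_text(strip=True) if author_tag is not None else "Unknown"
        results.append((text_tag.get_text(strip=True), author))
    return results


for text, author in extract_quotes(SAMPLE_HTML):
    print(f'"{text}" - {author}')
```

Keeping the parsing in a function that takes a string makes it easy to test against saved HTML before pointing it at a live site.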

Summary Table

| Goal | What to import | Is it Built-in? | How to Install |
|---|---|---|---|
| Build a Web App/API (Minimalist) | import web | No | pip install web.py |
| Make HTTP Requests (Get data from API) | import requests | No | pip install requests |
| Serve Files Locally | from http.server import HTTPServer | Yes | No installation needed |
| Parse/Scrape HTML | from bs4 import BeautifulSoup | No | pip install beautifulsoup4 |

Conclusion:

When you see import web, it's almost always referring to the web.py framework. If you're just starting with web development in Python, web.py is a great choice for its simplicity. For more complex applications, you might look into larger frameworks like Flask or Django.