Of course! Here is a comprehensive guide to using the Kinect with Python, covering the most popular and effective methods.

Introduction: Why Python for Kinect?

The official Kinect SDK from Microsoft is written in C++ and has a primary .NET (C#) wrapper. While powerful, it can be complex for Python developers. The Python community has created excellent libraries that act as "wrappers" around the official SDK, making it much easier to use.

Here are the main approaches, from most recommended to more niche:

Method 1: pykinect2 (Recommended for V2)

This is the most widely used Python library for the Kinect for Windows v2. It wraps the official Microsoft SDK and exposes the sensor's color, depth, infrared, body index, and body streams.

Prerequisites

- Hardware: A Kinect for Windows v2 sensor. The original Kinect for Xbox 360 is not supported by this library.

- Python: Python 3.6 or newer (32-bit or 64-bit). It's crucial to match the Python architecture (32/64-bit) with the installed Kinect SDK.

- Kinect SDK: You must install the official Microsoft Kinect for Windows SDK v2.

  - Download it from the Microsoft Archive.

  - Run the installer. This installs the necessary C++ libraries and drivers.

- Library: Install the pykinect2 library using pip.
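Because the interpreter's architecture has to match the SDK, it helps to confirm which one you are running before installing anything. A minimal check using only the standard library (the function name `interpreter_bits` is illustrative, not part of any Kinect package):

```python
import struct
import sys


def interpreter_bits() -> int:
    """Return 32 or 64, derived from the size of a C pointer in this interpreter."""
    return struct.calcsize("P") * 8


if __name__ == "__main__":
    version = f"{sys.version_info.major}.{sys.version_info.minor}"
    print(f"Python {version}, {interpreter_bits()}-bit")
```

Run it with the same interpreter you plan to use for the Kinect scripts, since a machine can have both a 32-bit and a 64-bit Python installed.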

Installation

First, ensure you have installed the official Kinect SDK v2. Then, install the Python library:

pip install pykinect2

Important: pykinect2 reaches the SDK's native library through comtypes, so the Python interpreter must have the same architecture (32-bit or 64-bit) as the SDK library it loads. If the sensor fails to open under a 64-bit interpreter, installing the package into a 32-bit interpreter is worth trying.

# Run with the 32-bit interpreter's pip, after downloading the .whl file from PyPI

pip install pykinect2-2.0.0-cp38-cp38-win32.whl

(Note: The wheel filename above is illustrative. The exact name depends on the package release and your Python version.)

Basic Example: Color, Depth, and Body Tracking

This script will open a window and display the color, depth, and body index frames in real-time.

import cv2

import numpy as np

from pykinect2 import PyKinectV2, PyKinectRuntime

from pykinect2.PyKinectV2 import CFrameDescription

# --- Initialization ---

# Create a runtime instance. This will start the Kinect and track frames.

kinect = PyKinectRuntime.PyKinectRuntime(PyKinectV2.FrameSourceTypes_Color | PyKinectV2.FrameSourceTypes_Depth | PyKinectV2.FrameSourceTypes_BodyIndex)

# Get frame descriptions for depth and color

depth_width, depth_height = kinect.depth_frame_desc.Width, kinect.depth_frame_desc.Height

color_width, color_height = kinect.color_frame_desc.Width, kinect.color_frame_desc.Height

print("Kinect initialized. Press ESC to exit.")

# --- Main Loop ---

try:

while True:

# --- Get Frames ---

# Check if a new color frame is available

if kinect.has_new_color_frame():

color_frame = kinect.get_last_color_frame()

color_frame = color_frame.reshape((color_height, color_width, 4)) # BGRA format

# Convert to BGR for OpenCV

color_frame_bgr = color_frame[:, :, :3]

# Check if a new depth frame is available

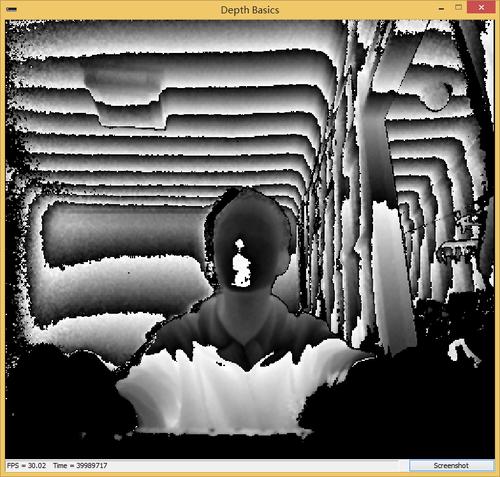

if kinect.has_new_depth_frame():

depth_frame = kinect.get_last_depth_frame()

# The depth frame is 16-bit. We convert it to 8-bit for display.

# We use a fixed scale factor to map the 13-bit depth (0..8191) to 0-255.

depth_frame = (depth_frame.astype(np.float32) * 255.0 / 8191.0).astype(np.uint8)

depth_frame = cv2.applyColorMap(depth_frame, cv2.COLORMAP_JET)

# Check if a new body index frame is available

if kinect.has_new_body_index_frame():

body_index_frame = kinect.get_last_body_index_frame()

# Body index frame is a single-channel 8-bit image.

# We convert it to BGR to overlay it.

body_index_frame_bgr = cv2.cvtColor(body_index_frame, cv2.COLOR_GRAY2BGR)

# --- Process and Display ---

# Display the frames

if 'color_frame_bgr' in locals():

cv2.imshow('Color Feed', color_frame_bgr)

if 'depth_frame' in locals():

cv2.imshow('Depth Feed', depth_frame)

if 'body_index_frame_bgr' in locals():

cv2.imshow('Body Index Feed', body_index_frame_bgr)

# Exit on ESC key press

if cv2.waitKey(1) == 27: # 27 is the ASCII code for ESC

break

finally:

# --- Cleanup ---

kinect.close()

cv2.destroyAllWindows()

print("Kinect closed.")
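The depth-to-display conversion is the step most likely to go wrong, so here is the same idea as a standalone sketch that runs without a sensor. The function name `depth_to_uint8` and the 4500 mm default (roughly the sensor's reliable range) are choices made for this illustration, not part of pykinect2.

```python
import numpy as np


def depth_to_uint8(depth_mm: np.ndarray, max_mm: int = 4500) -> np.ndarray:
    """Clip a depth image in millimetres to [0, max_mm] and scale it to 0..255."""
    clipped = np.clip(depth_mm.astype(np.float32), 0, max_mm)
    # Multiply before dividing so that max_mm maps exactly to 255
    return (clipped * 255.0 / max_mm).astype(np.uint8)


# Synthetic 2x3 "depth frame" in millimetres, standing in for a reshaped Kinect frame
demo = np.array([[0, 2250, 4500], [500, 9000, 1000]], dtype=np.uint16)
print(depth_to_uint8(demo))
```

Clipping before the cast matters: without it, any reading above `max_mm` would wrap around when converted to 8 bits and show up as a near pixel.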

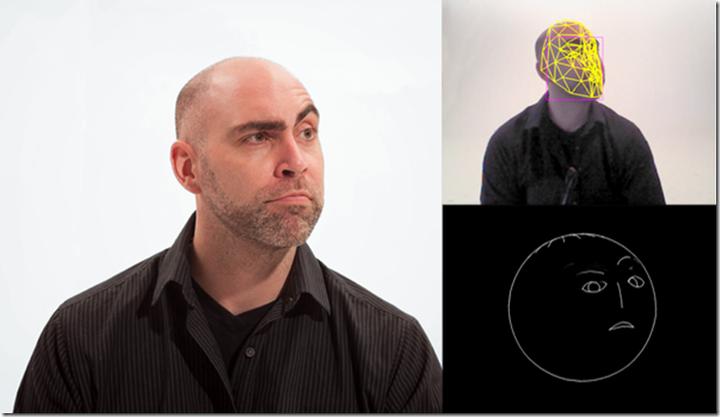

Getting Body Skeleton Data

The real power of the Kinect is its ability to track skeletons. Here's how to access joint data.

    # ... (Initialization as above, but also pass PyKinectV2.FrameSourceTypes_Body to PyKinectRuntime) ...

    color_bgr = None

    # --- Main Loop ---
    try:
        while True:
            # Keep the most recent color frame as the drawing canvas
            if kinect.has_new_color_frame():
                color = kinect.get_last_color_frame().reshape((color_height, color_width, 4))
                color_bgr = cv2.cvtColor(color, cv2.COLOR_BGRA2BGR)

            bodies = kinect.get_last_body_frame() if kinect.has_new_body_frame() else None

            if bodies is not None and color_bgr is not None:
                canvas = color_bgr.copy()
                for i in range(kinect.max_body_count):
                    body = bodies.bodies[i]
                    if not body.is_tracked:
                        continue
                    joints = body.joints
                    for j in range(PyKinectV2.JointType_Count):
                        if joints[j].TrackingState == PyKinectV2.TrackingState_NotTracked:
                            continue
                        # Map the joint's 3D camera-space position to color-image pixels
                        point = kinect.body_joint_to_color_space(joints[j])
                        if np.isfinite(point.x) and np.isfinite(point.y):
                            cv2.circle(canvas, (int(point.x), int(point.y)), 8, (0, 255, 0), -1)
                cv2.imshow('Body Tracking', canvas)

            # Exit on ESC key press
            if cv2.waitKey(1) == 27:
                break
    finally:
        # --- Cleanup ---
        kinect.close()
        cv2.destroyAllWindows()

(Note: This draws the joints only. For a full skeleton, draw a line between each connected pair of joints, for example JointType_Head and JointType_Neck, which also gives you full control over the rendering.)
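Drawing the skeleton by hand comes down to a table of joint pairs and a loop that skips any pair with a missing endpoint. The sketch below is sensor-free: the joint names follow the Kinect v2 `JointType` members without their prefix, while the `joint_pixels` dictionary format and the `bone_segments` helper are hypothetical, chosen only for this illustration. Only a subset of bones is listed.

```python
# Pairs of Kinect v2 joints connected by a bone (torso, arms, legs; hands and feet omitted)
BONES = [
    ("Head", "Neck"), ("Neck", "SpineShoulder"),
    ("SpineShoulder", "SpineMid"), ("SpineMid", "SpineBase"),
    ("SpineShoulder", "ShoulderLeft"), ("ShoulderLeft", "ElbowLeft"), ("ElbowLeft", "WristLeft"),
    ("SpineShoulder", "ShoulderRight"), ("ShoulderRight", "ElbowRight"), ("ElbowRight", "WristRight"),
    ("SpineBase", "HipLeft"), ("HipLeft", "KneeLeft"), ("KneeLeft", "AnkleLeft"),
    ("SpineBase", "HipRight"), ("HipRight", "KneeRight"), ("KneeRight", "AnkleRight"),
]


def bone_segments(joint_pixels):
    """Return the drawable line segments for one body.

    joint_pixels maps a joint name to its (x, y) pixel position, or to None
    when the joint is not tracked. A bone is kept only if both ends are known.
    """
    segments = []
    for start, end in BONES:
        p0, p1 = joint_pixels.get(start), joint_pixels.get(end)
        if p0 is not None and p1 is not None:
            segments.append((p0, p1))
    return segments


# Example: only the head, neck and one shoulder are visible
visible = {"Head": (960, 200), "Neck": (960, 300), "SpineShoulder": None, "ShoulderLeft": (860, 340)}
print(bone_segments(visible))
```

Each returned pair can be passed straight to `cv2.line(image, p0, p1, color, thickness)` on the color frame.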

Method 2: open3d (For 3D Point Clouds)

If your primary goal is to work with 3D data, especially creating point clouds, the open3d library is an excellent choice. It does not read from the Kinect v2 itself, so the frames come from pykinect2 or another backend, and open3d supplies the point cloud processing and 3D visualization tools.

Installation

You need open3d and a backend to get the data. pykinect2 is a great backend.

pip install open3d

pip install pykinect2

Example: Creating and Visualizing a 3D Point Cloud

This example uses pykinect2 to get a depth frame and then open3d to turn it into a 3D point cloud. The color camera has a different resolution and viewpoint from the depth camera, so color can only be attached once the color frame has been registered to the depth frame. That step is omitted here.

    import numpy as np
    import open3d as o3d
    from pykinect2 import PyKinectRuntime, PyKinectV2

    # --- Initialization ---
    kinect = PyKinectRuntime.PyKinectRuntime(PyKinectV2.FrameSourceTypes_Depth)
    width, height = kinect.depth_frame_desc.Width, kinect.depth_frame_desc.Height

    # Approximate intrinsics for the Kinect v2 depth camera.
    # The exact values differ per device; calibrate yours for accurate geometry.
    depth_intrinsics = o3d.camera.PinholeCameraIntrinsic(
        width, height,
        365.0, 365.0,               # fx, fy (approximate)
        width / 2.0, height / 2.0,  # cx, cy (approximate)
    )

    print("Visualizing point cloud. Press 'q' to exit the viewer.")

    # --- Main Loop ---
    try:
        while True:
            if kinect.has_new_depth_frame():
                # Depth arrives as a flat 16-bit array, in millimetres
                depth = kinect.get_last_depth_frame().reshape((height, width))
                depth_image = o3d.geometry.Image(np.ascontiguousarray(depth, dtype=np.uint16))

                # depth_scale converts mm to metres; depth_trunc drops points beyond 4.5 m
                pcd = o3d.geometry.PointCloud.create_from_depth_image(
                    depth_image, depth_intrinsics, depth_scale=1000.0, depth_trunc=4.5)

                # Show a single snapshot, then leave the loop
                o3d.visualization.draw_geometries(
                    [pcd], window_name="Kinect Point Cloud", width=640, height=480)
                break
    finally:
        # --- Cleanup ---
        kinect.close()
        print("Kinect closed.")

Note: The o3d.visualization.draw_geometries function is blocking, meaning it will pause your script until you close the window. For a real-time application, you would need to manage the viewer in a non-blocking way, which is more advanced.
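To make the role of the intrinsics concrete, the pinhole back-projection that turns a depth image into 3D points can be written in a few lines of NumPy. This is a sketch of the standard pinhole model, not Open3D's actual implementation, and `depth_to_points` is a name invented for this illustration.

```python
import numpy as np


def depth_to_points(depth_mm, fx, fy, cx, cy):
    """Back-project an HxW depth image in millimetres to an Nx3 array of points in metres."""
    h, w = depth_mm.shape
    # u holds each pixel's column index, v its row index
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    z = depth_mm.astype(np.float64) / 1000.0
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    # Pixels with no depth reading are reported as 0 and carry no geometry
    return points[points[:, 2] > 0]


# 3x3 synthetic frame: one reading at the principal point, one to its right
frame = np.zeros((3, 3), dtype=np.uint16)
frame[1, 1] = 1000
frame[1, 2] = 2000
print(depth_to_points(frame, fx=365.0, fy=365.0, cx=1.0, cy=1.0))
```

A pixel at the principal point lands on the optical axis (x = y = 0), and the sideways offset of any other pixel grows in proportion to its depth, which is why wrong focal lengths stretch or squash the cloud.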

Method 3: libfreenect2 (Alternative for V2)

libfreenect2 is a powerful, community-driven open-source driver for the Kinect v2. It's not a wrapper for the Microsoft SDK but its own implementation. It's known for high performance and is cross-platform (Windows, Linux, macOS).

Installation

Installation is more involved and depends on your OS. You need to compile the library from source or find pre-compiled binaries.

- General Steps:

  - Install required system dependencies (e.g., libusb, glfw3, OpenGL).

  - Download the libfreenect2 source.

  - Build the library using cmake.

  - Install the Python wrapper, python-freenect2: pip install python-freenect2

Basic Example

The API is different from pykinect2.

    import cv2
    import numpy as np

    # Assumes the Device / FrameType interface of the freenect2 Python bindings.
    # Check these names against the documentation of the wrapper you installed.
    from freenect2 import Device, FrameType

    device = Device()  # opens the default Kinect v2; expected to fail if none is connected
    color_bgr = depth_display = None

    print("Press ESC to exit.")

    try:
        with device.running():  # starts the streams and stops them on exit
            for frame_type, frame in device:  # frames arrive one at a time, tagged by type
                if frame_type == FrameType.Color:
                    # Color frames have four channels; drop the fourth for OpenCV
                    color_bgr = cv2.cvtColor(frame.to_array(), cv2.COLOR_BGRA2BGR)
                elif frame_type == FrameType.Depth:
                    # Depth is in millimetres; clip to 4.5 m and scale to 0..255 for display
                    depth = np.clip(frame.to_array(), 0, 4500).astype(np.float32)
                    depth_8bit = (depth * 255.0 / 4500.0).astype(np.uint8)
                    depth_display = cv2.applyColorMap(depth_8bit, cv2.COLORMAP_JET)

                # Display
                if color_bgr is not None:
                    cv2.imshow('Color Feed', color_bgr)
                if depth_display is not None:
                    cv2.imshow('Depth Feed', depth_display)

                if cv2.waitKey(1) == 27:
                    break
    finally:
        # --- Cleanup ---
        cv2.destroyAllWindows()
        print("Kinect stopped.")

Summary and Comparison

| Feature | pykinect2 | open3d | libfreenect2 |
|---|---|---|---|
| Ease of Use | High. Simple wrapper for official SDK. | Medium. Excellent for 3D, but API is different. | Medium. Powerful but requires more setup. |
| Features | Excellent. Full access to Color, Depth, IR, Body, Audio. | Good for 3D. Point clouds, registration, visualization. | Excellent. High-performance access to Color, Depth, IR. |
| Platform | Windows only (relies on MS SDK). | Cross-platform (relies on Open3D's backends). | Cross-platform (Windows, Linux, macOS). |
| Best For | General purpose, beginners, body tracking, Windows apps. | 3D reconstruction, point cloud visualization, research. | High-performance applications, Linux/macOS users. |
| Dependencies | Official MS Kinect SDK v2. | Open3D, NumPy, (and optionally a backend like pykinect2). | libfreenect2 C++ library, OpenGL, GLFW, etc. |

Recommendation

For most users starting out on Windows, pykinect2 is the best place to start. It's the most straightforward path to getting all the Kinect's data, including the powerful body tracking, with minimal fuss.

If your main goal is 3D visualization and point cloud processing, then use open3d with pykinect2 as the data source.

If you are on Linux or macOS, or need the absolute highest performance, libfreenect2 is your only viable option among these three.