Of course! GridSearchCV is a fundamental and powerful tool in machine learning for hyperparameter tuning. Let's break it down from a high-level concept to a practical Python example.

What is GridSearchCV?

At its core, GridSearchCV is a technique to find the best combination of hyperparameters for a machine learning model.

Analogy: Baking a Cake

- Model: The recipe for a cake (e.g., a vanilla cake).

- Hyperparameters: The variables you can adjust in the recipe.

  - baking_temperature: 180°C vs. 200°C

  - amount_of_sugar: 100g vs. 150g

  - mixing_time: 5 mins vs. 10 mins

- Goal: Find the combination of temperature, sugar, and mixing time that produces the best-tasting cake (i.e., the most accurate model).

GridSearchCV automates this "trial-and-error" process. It tries every possible combination of the hyperparameters you specify, evaluates each combination using cross-validation, and then tells you which combination performed the best.
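To make the "trial-and-error" idea concrete, here is a minimal hand-rolled sketch of what a grid search does conceptually. It is an illustration, not scikit-learn's actual implementation: the decision tree, the two hyperparameters, and their values are assumptions chosen only to keep the example small.

```python
from itertools import product

from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# Hypothetical grid for illustration: 3 values x 2 values = 6 combinations.
grid = {
    "max_depth": [2, 3, 5],
    "min_samples_split": [2, 10],
}

names = list(grid)
results = []
for values in product(*(grid[name] for name in names)):
    params = dict(zip(names, values))
    model = DecisionTreeClassifier(random_state=0, **params)
    # Mean accuracy over 5 cross-validation folds for this combination.
    mean_score = cross_val_score(model, X, y, cv=5, scoring="accuracy").mean()
    results.append((mean_score, params))

# Keep the combination with the highest mean cross-validation score.
best_score, best_params = max(results, key=lambda item: item[0])
print(f"Tried {len(results)} combinations")
print(f"Best params: {best_params} (mean CV accuracy {best_score:.3f})")
```

GridSearchCV wraps this loop for you and adds parallelism, result bookkeeping, and a final refit of the winning combination.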

Key Concepts Explained

-

Hyperparameters: These are the "settings" or "knobs" of a model that you, the data scientist, set before the training process begins. They are not learned from the data.

(图片来源网络,侵删)

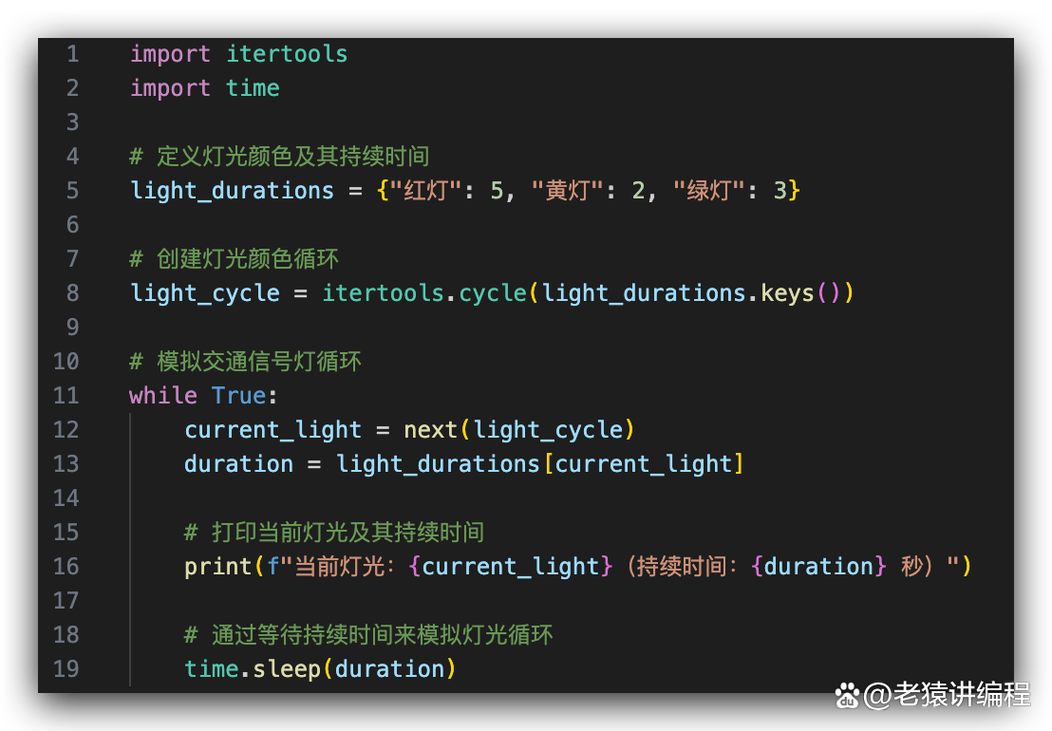

(图片来源网络,侵删)- Examples:

n_estimatorsin a Random Forest,Candkernelin an SVM,learning_ratein a neural network. - Crucial Point: Choosing good hyperparameters is critical for a model's performance. A poorly tuned model, even with a great algorithm, will perform badly.

- Examples:

- Grid: This refers to the "grid" of all possible hyperparameter combinations you want to test. If you have 2 hyperparameters, and you test 3 values for the first and 2 for the second, you have a 3x2 grid, for a total of 6 combinations.

- CV (Cross-Validation): This is the "CV" in GridSearchCV. It's the method used to evaluate each combination of hyperparameters fairly. The most common type is K-Fold Cross-Validation.

  - How it works (K=5):

    - The training data is split into 5 "folds" (parts).

    - The model is trained 5 times. In each run, 4 folds are used for training and the remaining fold is used for validation, so every fold serves as the validation set exactly once.

    - The performance scores (e.g., accuracy) from the 5 validation runs are averaged.

  - Why use it? It gives a more robust and reliable estimate of the model's performance on unseen data than a simple train/test split, which can be highly dependent on how the data is split.
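The K=5 procedure above can be sketched by hand. This is a rough illustration under a few assumptions: the LogisticRegression model is a stand-in chosen for speed, and StratifiedKFold is used because scikit-learn picks stratified folds when you pass an integer cv for a classifier.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

X, y = load_iris(return_X_y=True)
model = LogisticRegression(max_iter=1000)  # stand-in model, chosen only for speed
cv = StratifiedKFold(n_splits=5)

fold_scores = []
for fold, (train_idx, val_idx) in enumerate(cv.split(X, y), start=1):
    # Train a fresh copy on 4 folds, validate on the remaining fold.
    fold_model = clone(model)
    fold_model.fit(X[train_idx], y[train_idx])
    score = fold_model.score(X[val_idx], y[val_idx])
    fold_scores.append(score)
    print(f"Fold {fold}: {len(train_idx)} training rows, "
          f"{len(val_idx)} validation rows, accuracy {score:.3f}")

manual_mean = np.mean(fold_scores)
# cross_val_score runs the same loop in a single call.
helper_mean = cross_val_score(model, X, y, cv=cv).mean()
print(f"Manual mean: {manual_mean:.3f}, cross_val_score mean: {helper_mean:.3f}")
```

The averaged value is the kind of number GridSearchCV computes for every hyperparameter combination it tries.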

How to Use GridSearchCV in Python (with Scikit-learn)

Here is a step-by-step practical guide.

Step 1: Import Necessary Libraries

import pandas as pd

import numpy as np

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.ensemble import RandomForestClassifier

from sklearn.svm import SVC

from sklearn.metrics import classification_report, accuracy_score

Step 2: Load and Prepare Data

We'll use the famous Iris dataset, which is conveniently built into scikit-learn.

# Load the dataset

from sklearn.datasets import load_iris

iris = load_iris()

X = iris.data

y = iris.target

# Split the data into training and testing sets

# The test set is held out until the very end to evaluate the final model

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42, stratify=y)
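If you want to check what the split did, a quick sanity check like the following can help. It is only a sketch that repeats the same split and prints the shapes and per-class counts; with stratify=y the three Iris classes should be equally represented in both parts.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# Shapes of the two parts (rows, features).
print("Train shape:", X_train.shape)
print("Test shape:", X_test.shape)

# Number of samples per class in each part.
train_counts = np.bincount(y_train)
test_counts = np.bincount(y_test)
print("Train class counts:", train_counts)
print("Test class counts:", test_counts)
```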

Step 3: Define the Model and the Hyperparameter Grid

Let's tune a RandomForestClassifier. We need to define two things:

- The model object we want to tune.

- A dictionary (param_grid) where keys are the hyperparameter names and values are the lists of values to test.

# 1. Create the model

# We start with a basic model instance. GridSearchCV overrides only the hyperparameters

# listed in param_grid; anything else set here (such as random_state) is kept as is.

rf = RandomForestClassifier(random_state=42)

# 2. Define the parameter grid

# We'll tune 'n_estimators', 'max_depth', and 'min_samples_split'

param_grid = {

'n_estimators': [50, 100, 200], # Number of trees in the forest

'max_depth': [None, 10, 20, 30], # Maximum depth of the tree

'min_samples_split': [2, 5, 10] # Minimum number of samples required to split a node

}
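Before running the search, it can be worth counting how much work this grid implies. The sketch below uses scikit-learn's ParameterGrid helper to enumerate the combinations; the 5-fold figure is an assumption that matches the cv=5 setting used in this guide.

```python
from sklearn.model_selection import ParameterGrid

param_grid = {
    "n_estimators": [50, 100, 200],
    "max_depth": [None, 10, 20, 30],
    "min_samples_split": [2, 5, 10],
}

# ParameterGrid expands the dictionary into every combination.
combinations = list(ParameterGrid(param_grid))
n_folds = 5  # assumed number of cross-validation folds
total_fits = len(combinations) * n_folds

print("Combinations:", len(combinations))
print("Model fits during the search:", total_fits)
print("One example combination:", combinations[0])
```

Here 3 x 4 x 3 values give 36 combinations, so a 5-fold search trains 180 models. Adding one more hyperparameter with 3 values would triple that.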

Step 4: Create and Run the GridSearchCV Object

This is where the magic happens. We'll create a GridSearchCV object and "fit" it to our training data.

# Create the GridSearchCV object

# estimator: The model to tune (our RandomForestClassifier)

# param_grid: The dictionary of parameters to search

# cv: The number of cross-validation folds (e.g., 5)

# scoring: The metric to optimize for. 'accuracy' is common for classification.

# n_jobs: -1 means use all available CPU cores to speed up the process.

# verbose: Controls the verbosity of the output. Higher numbers give more details.

grid_search = GridSearchCV(

estimator=rf,

param_grid=param_grid,

cv=5,

scoring='accuracy',

n_jobs=-1,

verbose=2

)

# Fit the grid search to the data

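# This trains the model once per parameter combination and fold (36 combinations x 5 folds = 180 fits),

# then refits the best combination once more on the full training data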

print("Starting Grid Search...")

grid_search.fit(X_train, y_train)

print("Grid Search Complete!")

Step 5: Analyze the Results

After fit() is done, the grid_search object contains all the information about the search.

# 1. Get the best parameters found

print("Best Parameters found: ", grid_search.best_params_)

# 2. Get the best score achieved with those parameters

# This is the average cross-validation score of the best model.

print("Best Cross-validation Accuracy: ", grid_search.best_score_)

# 3. Get the best estimator (the model already re-trained on the full training data

# with the best parameters)

best_rf = grid_search.best_estimator_

print("\nBest Estimator:\n", best_rf)
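Beyond the single best result, the fitted object also exposes cv_results_, a dictionary with one entry per combination. A minimal sketch of inspecting it as a table follows; the tiny grid and the variable names are assumptions made so the example runs quickly on its own.

```python
import pandas as pd
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

X, y = load_iris(return_X_y=True)

# Deliberately small, hypothetical grid so the sketch runs quickly.
small_grid = {"n_estimators": [10, 50], "max_depth": [2, None]}
search = GridSearchCV(
    RandomForestClassifier(random_state=42),
    param_grid=small_grid,
    cv=5,
    scoring="accuracy",
)
search.fit(X, y)

# One row per parameter combination, sorted from best to worst rank.
results = pd.DataFrame(search.cv_results_)
columns = ["params", "mean_test_score", "std_test_score", "rank_test_score"]
print(results[columns].sort_values("rank_test_score").to_string(index=False))
```

Looking at std_test_score next to mean_test_score helps you see whether the winner is clearly better or just within the noise of the runner-ups.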

Step 6: Evaluate the Final Model on the Test Set

This is the most important step. We use the unseen test set to get a final, unbiased evaluation of our tuned model.

# Make predictions on the test set using the best model

y_pred = best_rf.predict(X_test)

# Evaluate the model

print("\n--- Evaluation on Test Set ---")

print("Accuracy on Test Set: ", accuracy_score(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred, target_names=iris.target_names))
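As a side note, you do not have to pull out best_estimator_ yourself. With the default refit=True, calling predict or score on the fitted search object delegates to the refitted best model. The sketch below checks that on a small, assumed grid.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import GridSearchCV, train_test_split

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# Small hypothetical grid, just to obtain a fitted search object.
search = GridSearchCV(
    RandomForestClassifier(random_state=42),
    param_grid={"max_depth": [2, None]},
    cv=5,
    scoring="accuracy",
)
search.fit(X_train, y_train)

# Predictions through the search object and through best_estimator_ agree.
via_search = search.predict(X_test)
via_best = search.best_estimator_.predict(X_test)
same_predictions = np.array_equal(via_search, via_best)

# search.score uses the metric passed as `scoring` (accuracy here).
test_accuracy = search.score(X_test, y_test)
print("Same predictions:", same_predictions)
print(f"Test accuracy: {test_accuracy:.3f}")
```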

Complete Code Example

import pandas as pd

import numpy as np

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import classification_report, accuracy_score

# 1. Load and Prepare Data

from sklearn.datasets import load_iris

iris = load_iris()

X = iris.data

y = iris.target

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42, stratify=y)

# 2. Define Model and Hyperparameter Grid

rf = RandomForestClassifier(random_state=42)

param_grid = {

'n_estimators': [50, 100, 200],

'max_depth': [None, 10, 20, 30],

'min_samples_split': [2, 5, 10]

}

# 3. Create and Run GridSearchCV

grid_search = GridSearchCV(

estimator=rf,

param_grid=param_grid,

cv=5,

scoring='accuracy',

n_jobs=-1,

verbose=2

)

print("Starting Grid Search...")

grid_search.fit(X_train, y_train)

print("Grid Search Complete!")

# 4. Analyze Results

print("\n--- Grid Search Results ---")

print("Best Parameters found: ", grid_search.best_params_)

print("Best Cross-validation Accuracy: ", grid_search.best_score_)

best_rf = grid_search.best_estimator_

# 5. Evaluate Final Model on Test Set

y_pred = best_rf.predict(X_test)

print("\n--- Evaluation on Test Set ---")

print("Accuracy on Test Set: ", accuracy_score(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred, target_names=iris.target_names))

Alternatives to GridSearchCV

While GridSearchCV is excellent, it has a major drawback: it can be very slow because it tries every single combination, and the number of combinations multiplies with every hyperparameter you add.

- RandomizedSearchCV

  - How it works: Instead of trying every combination, it samples a fixed number of combinations from the parameter distributions. You specify n_iter (e.g., 20) to control how many random combinations to try.

  - Pros: Much faster, especially when the parameter grid is large. Often finds very good parameters without exhaustive search.

  - Cons: Not guaranteed to find the absolute best combination.

- HalvingGridSearchCV and HalvingRandomSearchCV (Successive Halving)

  - How it works: These are more advanced methods. They start by evaluating all candidates with a small amount of resources (e.g., few training samples or few trees). They then "promote" the best-performing candidates to the next round, where they are evaluated with more resources. This process continues until only a handful of candidates, ideally a single winner, remain.

  - Pros: Often much faster than GridSearchCV, as poor-performing parameter combinations are discarded quickly.

  - Cons: Can be more complex to understand, and a combination that only shines with more resources may be discarded early. In scikit-learn these classes are still marked experimental and must be enabled by importing enable_halving_search_cv from sklearn.experimental first.
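Here is a minimal sketch of successive halving with HalvingGridSearchCV. Treat the specifics as assumptions for illustration: the number of trees (n_estimators) is used as the resource that grows each round, and the grid and the min_resources, max_resources, and factor values are picked only to give a short, readable run.

```python
# The experimental import must come before importing HalvingGridSearchCV.
from sklearn.experimental import enable_halving_search_cv  # noqa: F401
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import HalvingGridSearchCV

X, y = load_iris(return_X_y=True)

# Hypothetical grid: 3 x 3 = 9 candidates. n_estimators is NOT listed here
# because it is the resource that the search increases round by round.
param_grid = {
    "max_depth": [2, 5, None],
    "min_samples_split": [2, 5, 10],
}

search = HalvingGridSearchCV(
    RandomForestClassifier(random_state=42),
    param_grid,
    resource="n_estimators",  # more trees for the survivors of each round
    min_resources=10,         # trees per candidate in the first round
    max_resources=90,         # upper limit on trees per candidate
    factor=3,                 # keep roughly the best third each round
    cv=5,
    random_state=42,
)
search.fit(X, y)

print("Candidates per round:", search.n_candidates_)
print("Trees per round:", search.n_resources_)
print("Best parameters:", search.best_params_)
```

The candidate counts shrink from round to round while the trees per candidate grow, which is where the time savings come from.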

Summary: GridSearchCV vs. RandomizedSearchCV

| Feature | GridSearchCV | RandomizedSearchCV |
|---|---|---|
| Search Strategy | Exhaustive (tests all combinations) | Random sampling (tests a fixed number of combinations) |
| When to Use | When the parameter grid is small. | When the parameter grid is large or continuous. |
| Speed | Slow, especially with many parameters. | Usually much faster, since the cost is set by n_iter rather than by the grid size. |
| Guarantee | Guaranteed to find the best combination in the grid (as measured by the cross-validation score). | Not guaranteed to find the best, but often finds a very good one. |
| Best For | Small, discrete parameter grids. | Large grids, continuous parameters, or when time is limited. |
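For comparison with the GridSearchCV walkthrough, a RandomizedSearchCV run might look like the sketch below. The distributions, their ranges, and n_iter=8 are assumptions chosen to keep the run short. The key difference is that parameters can be given as distributions (here scipy.stats.randint) instead of fixed lists.

```python
from scipy.stats import randint
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

X, y = load_iris(return_X_y=True)

# Hypothetical search space: lists are sampled uniformly,
# randint(low, high) draws integers from low up to high - 1.
param_distributions = {
    "n_estimators": randint(10, 100),
    "max_depth": [None, 5, 10, 20],
    "min_samples_split": randint(2, 11),
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=42),
    param_distributions=param_distributions,
    n_iter=8,          # only 8 sampled combinations are evaluated
    cv=5,
    scoring="accuracy",
    random_state=42,   # makes the sampled combinations reproducible
)
search.fit(X, y)

print("Combinations tried:", len(search.cv_results_["params"]))
print("Best parameters:", search.best_params_)
print(f"Best cross-validation accuracy: {search.best_score_:.3f}")
```

The cost is 8 combinations x 5 folds = 40 fits no matter how wide the ranges are, which is why this approach scales better to large or continuous search spaces.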