Of course! The term spidermain isn't a standard Python library or command, but it's clearly a portmanteau of "Spider" (referring to a web crawler or scraper) and "main" (the standard entry point for a Python script).

It's a great name for a main file that orchestrates a web scraping project. Let's break down what a spidermain.py file would typically do and how to structure it.

What is a spidermain.py?

It's the central controller or the "brain" of your web scraping project. Its main responsibilities are:

- Configuration: Define settings like which URLs to scrape, how many pages to get, and where to save the data.

- Orchestration: Start the scraping process, manage the workflow, and handle the scraped data.

- Execution: Be the entry point you run from your terminal (python spidermain.py).
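To make those three responsibilities concrete, here is a minimal sketch of how they might look in code. It is an illustration only: the ScraperConfig class, the parse_args helper, and the flag names are all hypothetical, and the default values are placeholders.

```python
import argparse
from dataclasses import dataclass


@dataclass(frozen=True)
class ScraperConfig:
    """Hypothetical container for the settings spidermain.py would own."""
    start_url: str
    max_pages: int
    output_file: str


def parse_args(argv=None) -> ScraperConfig:
    """Configuration: read settings from the command line (flag names are assumptions)."""
    parser = argparse.ArgumentParser(description="Run the scraper.")
    parser.add_argument("--start-url", default="http://quotes.toscrape.com")
    parser.add_argument("--max-pages", type=int, default=5)
    parser.add_argument("--output", default="scraped_quotes.json")
    args = parser.parse_args(argv)
    return ScraperConfig(args.start_url, args.max_pages, args.output)


def main(argv=None) -> ScraperConfig:
    """Orchestration: a real main() would start the spider and save results here."""
    config = parse_args(argv)
    print(f"Would scrape up to {config.max_pages} page(s) starting at {config.start_url}")
    return config


# Passing an explicit argument list stands in for a terminal invocation.
main(["--max-pages", "2"])
```

From a terminal, the equivalent call would be python spidermain.py --max-pages 2, with main() invoked under the usual if __name__ == "__main__": guard.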

Structure of a spidermain.py Project

A well-structured scraping project usually has more than one file. Here's a common and scalable layout:

my_scraper_project/
├── spidermain.py        # The main entry point and orchestrator
├── spiders/
│   └── my_spider.py     # The actual scraping logic for a specific site
├── items.py             # Defines the structure of the data you're scraping
└── utils.py             # Helper functions (e.g., saving data, cleaning text)

Example: A Complete spidermain.py Project

Let's build a simple scraper to extract quotes from http://quotes.toscrape.com/. We'll use the popular requests and BeautifulSoup4 libraries.

Step 1: Project Setup

First, install the necessary libraries:

pip install requests beautifulsoup4

Step 2: Create the File Structure

Create the directory and files following the layout above, naming the spider file quotes_spider.py:

my_scraper_project/
├── spidermain.py
├── spiders/
│   └── quotes_spider.py
├── items.py
└── utils.py

Step 3: Define the Data Structure (items.py)

This file defines a "blueprint" for each piece of data we scrape. It helps keep our data consistent.

# items.py
from dataclasses import dataclass


@dataclass
class QuoteItem:
    """A simple data class to hold scraped quote information."""
    text: str
    author: str
    tags: list[str]
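Here is a short sketch of what the dataclass gives you in practice. The QuoteItem definition is repeated so the snippet runs on its own, and the quote and author are made-up sample data, not scraped output.

```python
from dataclasses import dataclass, asdict


@dataclass
class QuoteItem:
    """Same fields as items.py, repeated here so the snippet is self-contained."""
    text: str
    author: str
    tags: list[str]


# Made-up sample data for illustration.
item = QuoteItem(text="Sample quote.", author="Jane Doe", tags=["example", "demo"])
same = QuoteItem(text="Sample quote.", author="Jane Doe", tags=["example", "demo"])

# @dataclass generates __init__, __repr__, and __eq__ from the field list.
print(item == same)  # True

# asdict() converts the instance to a plain dict, which json.dump() can serialize.
print(asdict(item))
```

Because every scraped record goes through the same constructor, a missing field fails loudly with a TypeError when the item is created instead of surfacing later as a malformed row in the output.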

Step 4: Create the Scraper Logic (spiders/quotes_spider.py)

This file contains the core logic for finding and extracting data from the web page.

# spiders/quotes_spider.py
import requests
from bs4 import BeautifulSoup
from typing import List

# Absolute import: spidermain.py is run from the project root, so items.py is
# importable as a top-level module. A relative "..items" import would fail
# because spiders/ is itself the top-level package.
from items import QuoteItem


class QuotesSpider:
    def __init__(self, base_url: str):
        self.base_url = base_url
        self.session = requests.Session()  # Use a session for connection pooling

    def parse(self, url: str) -> List[QuoteItem]:
        """
        Fetches a page and extracts all quotes.
        """
        print(f"Scraping {url}...")
        try:
            response = self.session.get(url, timeout=10)
            response.raise_for_status()  # Raise an exception for bad status codes (4xx or 5xx)
        except requests.exceptions.RequestException as e:
            print(f"Error fetching {url}: {e}")
            return []

        soup = BeautifulSoup(response.text, 'html.parser')
        quotes = []

        # Find all quote containers on the page
        for quote_div in soup.find_all('div', class_='quote'):
            text = quote_div.find('span', class_='text').get_text(strip=True)
            author = quote_div.find('small', class_='author').get_text(strip=True)
            tags = [tag.get_text(strip=True) for tag in quote_div.find_all('a', class_='tag')]

            # Create an item instance
            quote_item = QuoteItem(text=text, author=author, tags=tags)
            quotes.append(quote_item)

        return quotes

    def get_next_page_url(self, soup: BeautifulSoup) -> str | None:
        """
        Finds the URL for the next page.
        """
        next_button = soup.find('li', class_='next')
        if next_button:
            # Assumes the href is a root-relative path such as "/page/2/"
            return self.base_url + next_button.find('a')['href']
        return None
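One thing worth noting about get_next_page_url: it builds the next URL by gluing base_url and the link's href together, which only works when the href is a root-relative path. As a hedged alternative (not what the spider above does), the standard library's urllib.parse.urljoin resolves an href against the URL of the page it was found on. The helper name resolve_next_url is hypothetical.

```python
from urllib.parse import urljoin


def resolve_next_url(current_url: str, href: str) -> str:
    """Resolve a link's href against the URL of the page it was found on."""
    return urljoin(current_url, href)


# Root-relative href: the path replaces the current page's path.
next_url = resolve_next_url("http://quotes.toscrape.com/page/2/", "/page/3/")
print(next_url)  # http://quotes.toscrape.com/page/3/

# Absolute href: returned unchanged, where plain concatenation would
# produce two URLs stuck together.
absolute = resolve_next_url(
    "http://quotes.toscrape.com/", "http://quotes.toscrape.com/page/2/"
)
print(absolute)  # http://quotes.toscrape.com/page/2/
```

Using this inside the spider would mean passing the current page's URL to get_next_page_url, so treat it as an optional refinement.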

Step 5: Create Utility Functions (utils.py)

This file will handle saving the scraped data to a file.

# utils.py
import json
from dataclasses import asdict
from typing import List

# Absolute import: utils.py is a top-level module, not part of a package,
# so a relative ".items" import would fail.
from items import QuoteItem


def save_to_json(data: List[QuoteItem], filename: str = 'quotes.json'):
    """
    Saves a list of QuoteItem objects to a JSON file.
    """
    # Convert dataclass objects to dictionaries for JSON serialization
    json_data = [asdict(item) for item in data]

    with open(filename, 'w', encoding='utf-8') as f:
        json.dump(json_data, f, ensure_ascii=False, indent=4)

    print(f"Successfully saved {len(json_data)} quotes to {filename}")
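If you later want to read the file back, the reverse direction is just as short. This is a sketch under assumptions: load_from_json is a hypothetical helper that the project above does not define, QuoteItem is repeated so the snippet is self-contained, and the record is made-up sample data.

```python
import json
import os
import tempfile
from dataclasses import dataclass, asdict


@dataclass
class QuoteItem:
    text: str
    author: str
    tags: list[str]


def load_from_json(filename: str) -> list[QuoteItem]:
    """Hypothetical inverse of save_to_json: rebuild QuoteItem objects from a file."""
    with open(filename, encoding="utf-8") as f:
        return [QuoteItem(**record) for record in json.load(f)]


# Round trip with made-up sample data in a temporary directory.
items = [QuoteItem(text="Sample quote.", author="Jane Doe", tags=["demo"])]
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "quotes.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump([asdict(i) for i in items], f, ensure_ascii=False, indent=4)
    restored = load_from_json(path)

print(restored == items)  # True
```

The round trip works because each JSON object has exactly the keys that QuoteItem expects as keyword arguments.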

Step 6: The Main Orchestrator (spidermain.py)

This is the star of the show. It ties everything together.

# spidermain.py
import time

import requests                # Needed for the exception handling below
from bs4 import BeautifulSoup  # Needed to locate the "next" button

from spiders.quotes_spider import QuotesSpider
from utils import save_to_json
from items import QuoteItem


def main():
    """
    Main function to orchestrate the web scraping process.
    """
    # --- Configuration ---
    BASE_URL = "http://quotes.toscrape.com"
    OUTPUT_FILE = 'scraped_quotes.json'
    MAX_PAGES = 5  # Limit the number of pages to scrape for this example

    # --- Initialization ---
    spider = QuotesSpider(base_url=BASE_URL)
    all_quotes: list[QuoteItem] = []
    current_url = BASE_URL
    pages_scraped = 0

    # --- Execution Loop ---
    while current_url and pages_scraped < MAX_PAGES:
        # 1. Fetch and parse the current page
        quotes_on_page = spider.parse(current_url)
        if quotes_on_page:
            all_quotes.extend(quotes_on_page)
            print(f"Found {len(quotes_on_page)} quotes on this page.")

        # 2. Get the URL for the next page
        # We need to fetch the page again to find the "next" button,
        # or we could have returned it from the parse method.
        try:
            response = spider.session.get(current_url, timeout=10)
            soup = BeautifulSoup(response.text, 'html.parser')
            current_url = spider.get_next_page_url(soup)
        except requests.exceptions.RequestException:
            current_url = None  # Stop if there's an error

        pages_scraped += 1
        time.sleep(1)  # Be a good web citizen: don't send too many requests too quickly

    # --- Finalization ---
    print("\nScraping finished.")
    if all_quotes:
        save_to_json(all_quotes, OUTPUT_FILE)
    else:
        print("No quotes were scraped.")


if __name__ == "__main__":
    main()
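The comment in the loop hints at a cleaner design: have the parse step return the next page's URL along with the items, so each page is fetched only once. Here is a rough sketch of that loop, using a fake in-memory "site" so it runs without network access. The crawl function, the PageParser alias, and FAKE_SITE are all hypothetical names for illustration.

```python
from typing import Callable, Optional

# A page parser returns (items found on the page, URL of the next page or None).
PageParser = Callable[[str], tuple[list[str], Optional[str]]]


def crawl(start_url: str, parse_page: PageParser, max_pages: int) -> list[str]:
    """Follow 'next' links until none are left or max_pages is reached."""
    collected: list[str] = []
    url: Optional[str] = start_url
    pages = 0
    while url and pages < max_pages:
        items, url = parse_page(url)  # one call yields both items and next URL
        collected.extend(items)
        pages += 1
    return collected


# Stand-in for a real spider: a dict that plays the role of the website.
FAKE_SITE = {
    "/page/1/": (["q1", "q2"], "/page/2/"),
    "/page/2/": (["q3"], "/page/3/"),
    "/page/3/": (["q4"], None),
}


def fake_parse(url: str) -> tuple[list[str], Optional[str]]:
    return FAKE_SITE[url]


print(crawl("/page/1/", fake_parse, max_pages=2))   # ['q1', 'q2', 'q3']
print(crawl("/page/1/", fake_parse, max_pages=10))  # ['q1', 'q2', 'q3', 'q4']
```

In the real project, fake_parse would be replaced by a spider method that fetches the page once and reads both the quotes and the "next" link from the same soup object.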

Step 7: Run the Scraper

Navigate to your project's root directory in the terminal and run:

python spidermain.py

You will see output in the console as it scrapes each page, and finally, a scraped_quotes.json file will be created in your directory with all the collected quotes.

Key Concepts in spidermain.py

- if __name__ == "__main__": This is the standard Python idiom. The code inside this block only runs when the script is executed directly, not when it's imported as a module. This makes your code reusable.

- Configuration at the Top: All the important settings (URLs, filenames, limits) are defined at the beginning of the main() function. This makes it easy to change them without digging through the code.

- Separation of Concerns: spidermain.py doesn't know how to extract quotes from HTML. It just tells the QuotesSpider to "parse this URL." quotes_spider.py doesn't know what to do with the data. It just returns a list of QuoteItem objects. utils.py handles the final data storage, independent of the scraping logic.

- Robustness: The try...except blocks handle potential network errors gracefully, preventing the script from crashing.
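On the robustness point, the project gives up on a page at the first network error. A common extension, sketched here as an assumption rather than something the project does, is to retry a failed fetch a few times with a growing delay. The fetch_with_retry helper and its parameters are hypothetical, and OSError is used as a stand-in for the exception type a real HTTP client would raise.

```python
import time
from typing import Callable


def fetch_with_retry(
    fetch: Callable[[str], str],
    url: str,
    retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call fetch(url), retrying on OSError with exponentially growing delays."""
    for attempt in range(retries):
        try:
            return fetch(url)
        except OSError as exc:
            if attempt == retries - 1:
                raise  # out of attempts: let the caller decide what to do
            delay = base_delay * (2 ** attempt)
            print(f"Attempt {attempt + 1} failed ({exc}); retrying in {delay}s")
            sleep(delay)
    raise ValueError("retries must be at least 1")


# Demo with a fake fetcher that fails twice, then succeeds. No network involved.
calls = {"count": 0}


def flaky_fetch(url: str) -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated network failure")
    return f"<html>content of {url}</html>"


delays: list[float] = []
# Recording the delays instead of sleeping keeps the demo instant.
html = fetch_with_retry(flaky_fetch, "http://example.com", sleep=delays.append)
print(html)
print(delays)  # [1.0, 2.0]
```

Injecting the fetch and sleep functions keeps the retry logic testable without touching the network or waiting for real delays.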

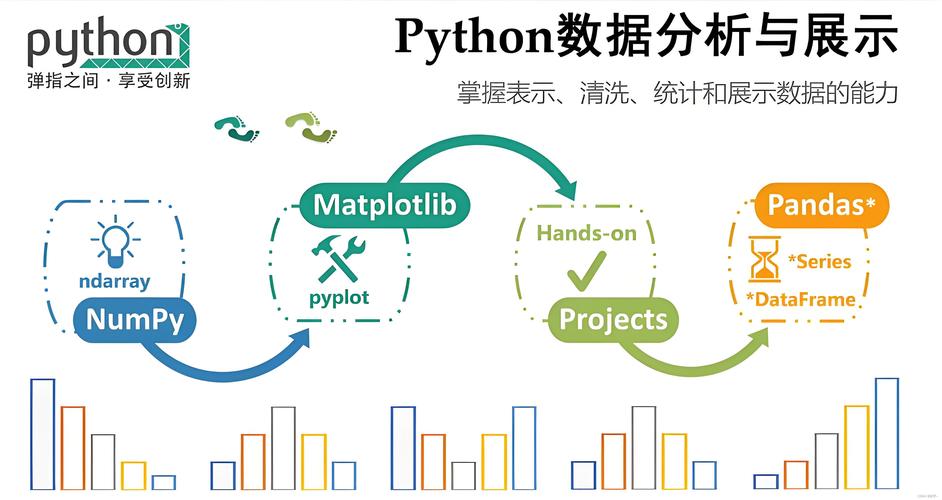

Advanced: Using Scrapy Framework

For larger, more complex projects, you'd use a dedicated framework like Scrapy. Scrapy formalizes the structure we just built.

In Scrapy, spidermain.py would be replaced by a command that runs a spider by the value of its name attribute (assumed here to be quotes_spider):

scrapy crawl quotes_spider

The spiders are defined in spiders/quotes_spider.py as classes that inherit from scrapy.Spider, and Scrapy provides its own built-in mechanisms for handling items, pipelines (for saving data), and scheduling requests. The core idea of a central controller remains the same, but the framework handles the boilerplate for you.